Introduction

Digital transformation has made industries, enterprises, and organizations more efficient through the adoption of connected systems. Technologies such as the Internet of Things (IoT) and Industrial Internet of Things (IIoT) have expanded access to data and automated processes. While these advances improve operational efficiency, they also introduce new vulnerabilities, increasing exposure to cyber threats. Traditional security measures often struggle to keep pace with sophisticated attacks, especially within internal networks.

The Growing Complexity of Hybrid Cloud Environments

As organizations adopt hybrid cloud architectures, managing data, applications, and AI models across public and private clouds becomes more complex. Ensuring AI governance, regulatory compliance, and operational security requires real-time visibility into network traffic, data flow, and AI-driven decision-making.

Why Explainable AI (XAI) Matters

Explainable AI helps organizations understand the reasoning behind AI predictions. In hybrid cloud environments, XAI ensures transparency, reduces operational risk, and builds stakeholder trust. It is particularly vital for regulated industries, where demonstrating accountability and compliance is non-negotiable.

The Role of AI Risk Management

Effective AI risk management enables organizations to detect anomalies, monitor AI models, and assess risk in real time. CISOs, risk teams, and IT security leaders rely on these practices to safeguard operations, ensure compliance, and prevent potential disruptions caused by flawed or biased AI decisions.

This blog explores practical methods for improving cloud security, hybrid cloud risk management, and AI model explainability. It provides actionable insights and best practices for regulated industries, helping security and risk teams manage AI responsibly while maintaining operational efficiency.

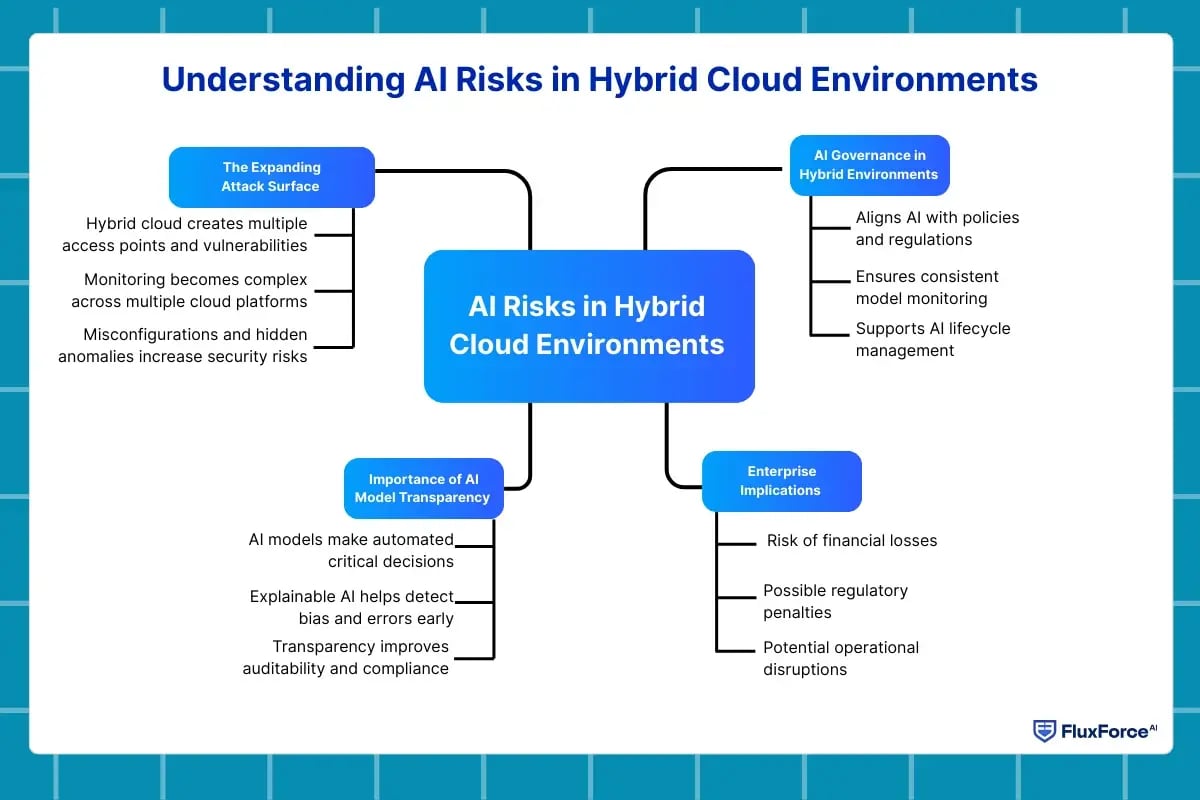

Understanding AI Risks in Hybrid Cloud Environments

As organizations adopt hybrid cloud infrastructures, the complexity of managing AI systems increases.

AI models handle sensitive operational and business data, and errors in these models can translate into significant financial, operational, or regulatory risks. Effective AI risk management ensures that organizations maintain visibility and control over AI-driven decisions while safeguarding against both external and internal threats.

The Expanding Attack Surface

Hybrid cloud environments combine on-premises systems with public and private cloud resources, creating multiple points of access. Each access point introduces potential vulnerabilities, making hybrid cloud security a priority. Without comprehensive monitoring, subtle anomalies in network traffic, authentication logs, or system behavior can go undetected, allowing intrusions or unauthorized access to escalate.

- The average enterprise operates across 5–7 cloud platforms, increasing the complexity of monitoring AI models.

- Misconfigurations alone account for nearly 30% of cloud security incidents in large organizations.

Importance of AI Model Transparency

AI models in hybrid cloud setups often make critical decisions without human intervention. Lack of transparency can hide biases, misclassifications, or inefficiencies. This is where AI model explainability and model risk management become essential. Explainable AI allows stakeholders to understand why a model made a particular decision, identify weaknesses, and implement corrections before a minor error escalates into a serious risk.

- Transparency reduces regulatory exposure by ensuring auditability of AI-driven decisions.

- Explainable models help risk analytics teams validate predictions against historical patterns.

AI Governance in Hybrid Environments

Strong AI governance aligns AI operations with corporate policies, compliance requirements, and ethical standards. In hybrid cloud environments, governance frameworks must span multiple systems and datasets, ensuring consistency in model performance, version control, and risk mitigation.

Key governance activities include:

- Monitoring model drift to detect changes in AI behavior over time.

- Ensuring AI compliance with regulatory standards across jurisdictions.

- Applying AI lifecycle management to track model development, deployment, and decommissioning.

Enterprise Implications

Failure to manage AI risks in hybrid clouds can have serious consequences:

- Financial loss from incorrect automated decisions.

- Regulatory penalties due to insufficient audit trails.

- Operational disruption if anomalous model behavior goes undetected.

Adopting explainable AI approaches not only mitigates these risks but also builds trust with internal stakeholders and external regulators. Organizations equipped with robust AI observability can proactively detect anomalies and respond faster.

Explainable AI for Hybrid Cloud Environments

Hybrid cloud architectures host AI models across multiple platforms, which can obscure how decisions are made. Explainable AI (XAI) provides transparency by clarifying model logic, allowing teams to act on predictions with confidence.

Understanding Explainable AI

Explainable AI reveals the reasoning behind model outputs, making complex algorithms interpretable for stakeholders. This transparency supports AI risk management by highlighting potential errors, biases, or inconsistencies before they affect operations.

Audit and compliance teams can use XAI insights to verify that predictions meet regulatory standards, while improving AI observability to detect unusual model behavior in real time.

In hybrid cloud environments, XAI enhances both security and operational efficiency:

- Risk identification: Teams can trace decision pathways, ensuring robust model risk management.

- Compliance assurance: Transparent AI simplifies reporting for audits, reinforcing AI compliance and cloud compliance.

- Performance optimization: Feature importance and prediction drivers help refine models without disrupting workflows.

How Explainable AI Improves Cloud Risk Models

Explainable AI can improve cloud-hosted risk models in two areas: predictive accuracy and bias reduction. When risk and compliance teams understand how a model reaches its decisions, they can take preventive action before issues escalate.

Implementing explainable AI also strengthens hybrid cloud AI governance frameworks by embedding transparency into corporate policies, making accountability measurable and actionable.

Key Considerations for Deployment

When applying XAI in hybrid clouds:

- Ensure models are auditable across all environments.

- Monitor for drift to maintain consistent accuracy.

- Align explainability metrics with operational and regulatory requirements.

Robust deployment of explainable AI supports proactive decision-making and reinforces AI governance across hybrid cloud infrastructures.

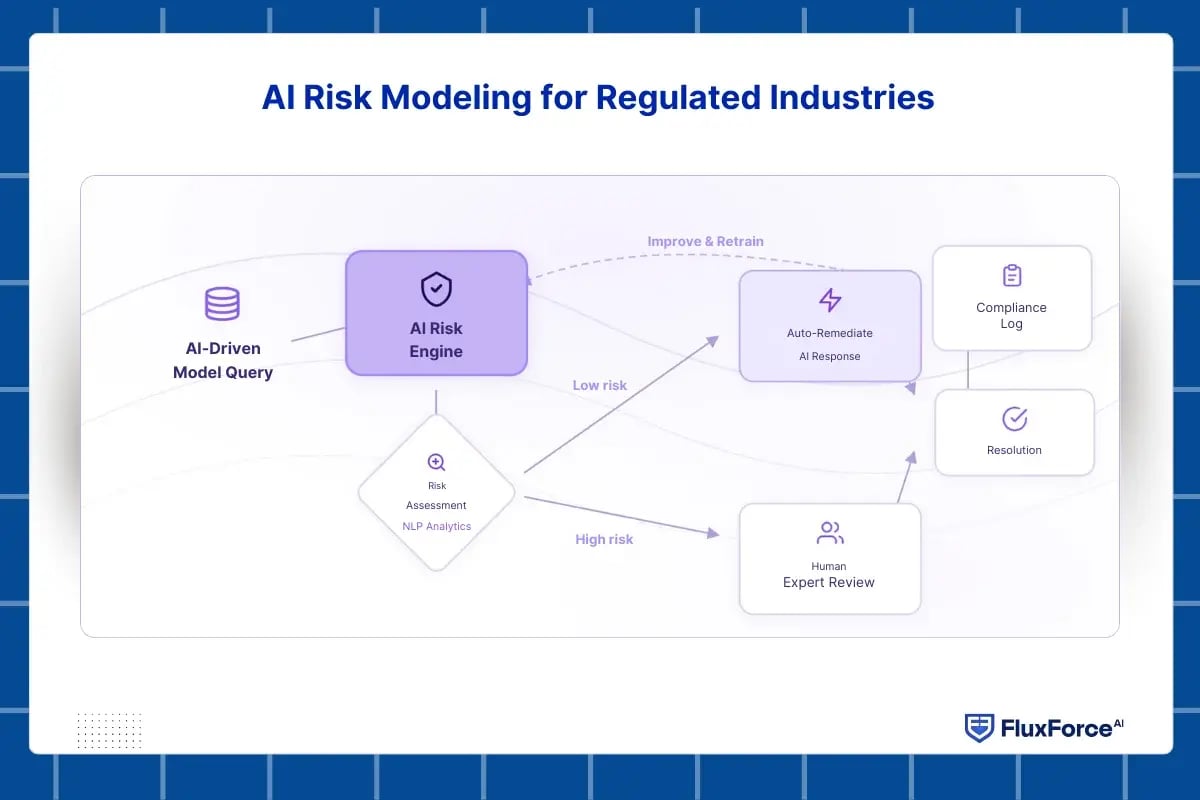

AI Risk Modeling for Regulated Industries

Organizations in highly regulated sectors face significant challenges in managing risks associated with AI models.

Strong AI risk management ensures that AI-driven decisions remain compliant with legal, operational, and ethical standards while reducing potential disruptions and losses.

Banks, for example, need to understand the reason behind every alert before taking action. Explainable AI connects detection with reasoning, helping teams see what the model observed and why a payment was flagged. This clarity improves speed, accuracy, and customer trust while keeping controls aligned with compliance.

The Role of AI Risk Management

AI models can produce unexpected outcomes due to biased data, misaligned objectives, or unmonitored drift. Effective AI risk management helps organizations detect anomalies early, maintain compliance across jurisdictions, and build confidence among stakeholders by ensuring AI model transparency.

Incorporating AI observability allows continuous monitoring of model behavior, delivering real-time insights into performance and highlighting areas that need corrective action. This creates a proactive framework for managing AI risks.

Managing Risks in Hybrid Cloud Environments

Deploying AI across hybrid cloud environments introduces additional layers of complexity. Hybrid cloud risk management ensures that AI models perform consistently across public and private clouds while maintaining AI compliance and protecting sensitive data.

Integrating model risk management with explainable AI (XAI) allows organizations to evaluate the impact of predictions, identify vulnerabilities, and uphold accountability across multi-cloud systems. This approach helps secure AI operations without compromising agility or scalability.

Enhancing Decisions with AI Model Explainability

Understanding the rationale behind AI predictions is crucial in regulated industries. AI model explainability provides transparency by showing which factors influence a model’s decisions. This enables teams to verify compliance with regulatory and ethical standards, reduce operational risks, and communicate AI outcomes clearly to non-technical stakeholders.

Techniques like SHAP and LIME support AI model transparency, offering measurable insights into feature importance and guiding model refinement. These tools strengthen trust in AI while improving decision-making accuracy.
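To make the idea of feature attribution concrete, here is a minimal sketch of exact Shapley values, the quantity that SHAP approximates. It does not use the SHAP library. The `risk_score` function, its weights, and the feature names are hypothetical and chosen only for illustration. Exhaustive enumeration grows exponentially with the number of features, so this is only practical for very small models.

```python
from itertools import combinations
from math import factorial
from typing import Callable, List, Sequence


def shapley_values(
    predict: Callable[[Sequence[float]], float],
    instance: Sequence[float],
    baseline: Sequence[float],
) -> List[float]:
    """Exact Shapley attribution of predict(instance) - predict(baseline)."""
    n = len(instance)

    def value(coalition: frozenset) -> float:
        # Features in the coalition take the instance's value;
        # all other features stay at the baseline value.
        x = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(x)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = frozenset(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi


def risk_score(x: Sequence[float]) -> float:
    """Hypothetical payment-risk score (illustrative weights only)."""
    amount, new_payee, night = x
    return 0.002 * amount + 0.3 * new_payee + 0.1 * night + 0.2 * new_payee * night


if __name__ == "__main__":
    features = ["amount", "new_payee", "night"]
    phi = shapley_values(risk_score, [100.0, 1.0, 1.0], [0.0, 0.0, 0.0])
    for name, contribution in zip(features, phi):
        print(f"{name}: {contribution:.3f}")
```

In this toy example the attributions sum to the difference between the flagged payment's score and the baseline score, and the interaction between `new_payee` and `night` is split evenly between the two features. That additive breakdown is what lets a reviewer see which factors drove an alert.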

Leveraging Risk Analytics

Risk analytics enhances decision-making by combining AI outputs with statistical and operational insights. It highlights areas where model predictions deviate from expectations, prioritizes risks for immediate action, and provides decision-makers with actionable intelligence on AI operations.

Integrating risk analytics into AI governance frameworks ensures a holistic understanding of AI-driven risks, strengthening both compliance and hybrid cloud security. This approach allows organizations to anticipate and prevent potential disruptions proactively.

Explainable AI for Compliance and Audit

In regulated industries, Explainable AI (XAI) ensures AI decisions are transparent, accountable, and compliant.

Strengthening Compliance

Explainable AI for compliance and audit helps organizations:

- Understand model predictions.

- Verify adherence to regulatory standards.

- Detect risks before they cause non-compliance.

Observability and Monitoring

AI observability provides real-time insights into model behavior, enabling early detection of drift, biases, and anomalies. Continuous monitoring is crucial for hybrid cloud deployments.

Hybrid Cloud Oversight

Hybrid cloud risk management ensures consistent compliance across public and private clouds. Combining it with AI model explainability allows teams to validate predictions and maintain accountability in complex environments.

Audit-Ready Operations

Maintain detailed logs of model inputs, outputs, and changes. Use XAI tools to generate interpretable explanations for auditors and stakeholders. By integrating explainability, observability, and risk analytics, organizations strengthen compliance, transparency, and control over AI systems.
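As a rough sketch of what one audit-ready log entry might contain, the snippet below builds a JSON record of a single model decision. The field names, the `build_audit_record` helper, and the example values are assumptions made for illustration, not a prescribed schema.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict


def build_audit_record(
    model_name: str,
    model_version: str,
    inputs: Dict[str, Any],
    output: Any,
    explanation: Dict[str, float],
    environment: str,
) -> Dict[str, Any]:
    """Assemble one audit entry for a single model decision."""
    # Canonical JSON (sorted keys) so the same inputs always hash identically.
    canonical = json.dumps(inputs, sort_keys=True, separators=(",", ":"))
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model": model_name,
        "version": model_version,
        "environment": environment,  # e.g. which cloud served the request
        "input_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "inputs": inputs,
        "output": output,
        "explanation": explanation,  # e.g. per-feature attributions
    }


def to_log_line(record: Dict[str, Any]) -> str:
    """Serialize a record as one JSON line for an append-only log."""
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    record = build_audit_record(
        "fraud-model", "1.4.0",
        {"amount": 100, "country": "DE"},
        0.91, {"amount": 0.6, "country": 0.1}, "private-cloud",
    )
    print(to_log_line(record))
```

Recording the model version and environment alongside each decision lets an auditor tie an outcome back to the exact model that produced it, wherever it ran.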

How to Ensure Safe and Compliant AI in Hybrid Cloud

Deploying AI across hybrid cloud environments can introduce uncertainty. Teams need practical strategies to ensure AI models remain secure, transparent, and reliable without adding unnecessary complexity.

Following structured practices improves hybrid cloud risk management while supporting compliance and operational efficiency.

Monitor AI Models Continuously to Avoid Surprises

Continuous observation is essential for safe AI operations. AI observability provides real-time insights into how models perform across private and public clouds, helping teams detect anomalies, unusual predictions, or emerging bias.

Benefits include:

- Immediate detection of potential operational risks before they affect processes.

- Ability to track model drift and data changes that might compromise accuracy.

- Proactive issue resolution that prevents service disruptions or regulatory breaches.

By integrating observability into hybrid cloud setups, organizations maintain confidence in their AI systems and reduce reactive firefighting.
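One simple way to flag unusual predictions is a rolling z-score over a monitored metric. The sketch below is a minimal illustration under assumed settings: the `RollingAnomalyMonitor` class, the window size, and the threshold of 3 standard deviations are hypothetical choices, and production observability tools typically use richer methods.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyMonitor:
    """Flags values that deviate strongly from a rolling window of recent values."""

    def __init__(self, window: int = 50, threshold: float = 3.0, min_history: int = 10):
        self.history = deque(maxlen=window)
        self.threshold = threshold
        self.min_history = min_history

    def observe(self, value: float) -> bool:
        """Return True if `value` looks anomalous relative to recent history."""
        anomalous = False
        if len(self.history) >= self.min_history:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.threshold
            else:
                # No spread in the window: any different value stands out.
                anomalous = value != mu
        # Only normal values extend the baseline, so one outlier
        # does not widen the window and mask later outliers.
        if not anomalous:
            self.history.append(value)
        return anomalous


if __name__ == "__main__":
    monitor = RollingAnomalyMonitor()
    scores = [0.10, 0.12] * 10 + [0.90]
    print([monitor.observe(s) for s in scores][-1])
```

The same monitor could track a daily error rate, average prediction score, or request latency per environment, with one instance per metric.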

Make AI Decisions Transparent for Compliance

Regulated industries require that AI-driven decisions be explainable and accountable. Explainable AI (XAI) enables teams to understand why a model makes specific predictions, providing evidence for audits and regulatory reporting.

Transparent AI systems:

- Build stakeholder trust by clearly showing the reasoning behind predictions.

- Simplify compliance processes by documenting model decisions.

- Reduce operational and reputational risks through accountable AI usage.

Making models understandable is not just a regulatory requirement—it improves decision-making across teams.

Use Risk Analytics to Guide Action

Integrating risk analytics with AI outputs allows decision-makers to identify potential threats and prioritize interventions.

Key advantages include:

- Highlighting areas where model behavior deviates from expected results.

- Quantifying operational impact to focus on high-priority risks.

- Supporting faster, informed actions to maintain hybrid cloud security and operational resilience.

This combination strengthens AI governance, helping teams respond to risks before they escalate.

Practical Steps for Effective AI Governance

To ensure consistent and safe AI operations in hybrid cloud environments, teams should follow these practices:

- Maintain detailed logs of model decisions to facilitate audits and accountability.

- Regularly validate models for bias, drift, and performance degradation.

- Apply standardized compliance and security policies across all cloud platforms.

These steps ensure AI risk management is proactive, reliable, and aligned with both operational goals and regulatory expectations.

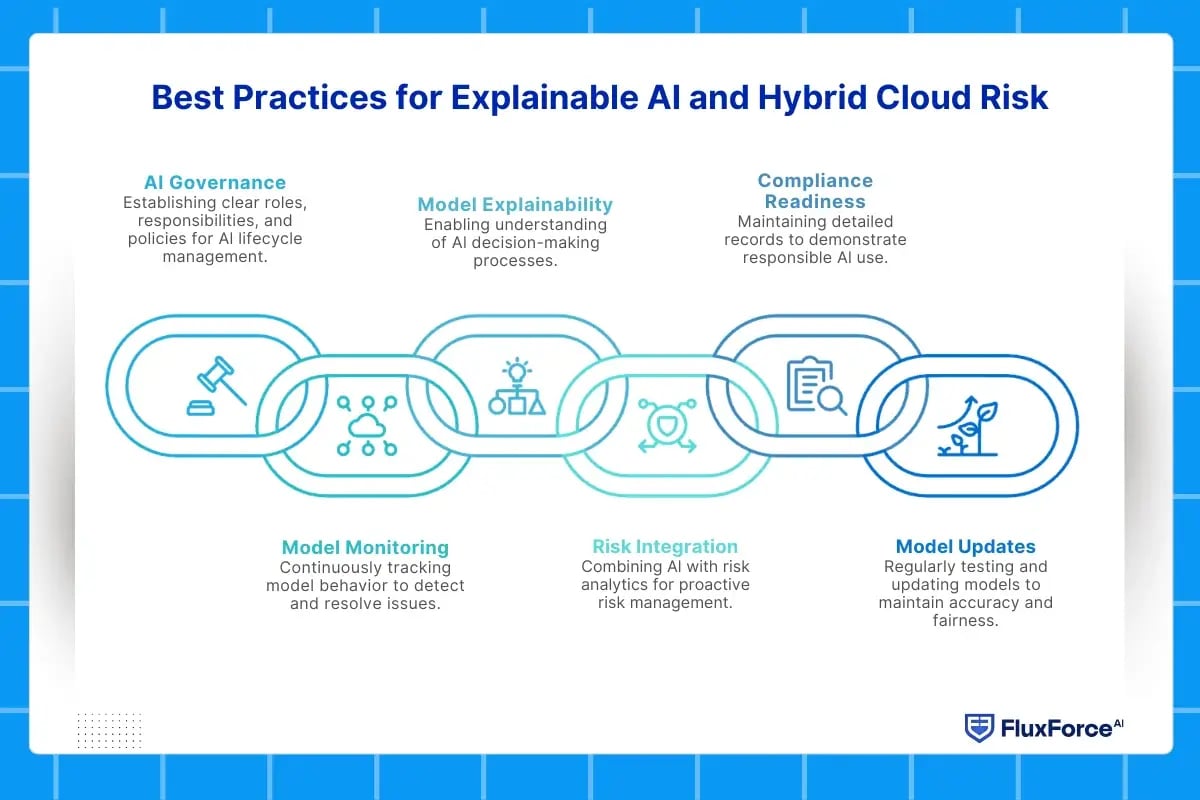

Best Practices for Explainable AI and Hybrid Cloud Risk

Implementing explainable AI (XAI) in hybrid cloud environments is not just about technology—it requires clear processes, governance, and ongoing monitoring to ensure AI systems remain trustworthy, compliant, and reliable. Organizations that follow structured practices can reduce operational risks, maintain regulatory compliance, and improve transparency for stakeholders.

Establish Strong AI Governance

Effective AI governance is the foundation of safe and responsible AI adoption. Governance involves defining clear roles, responsibilities, and policies for every stage of the AI lifecycle, from model development to deployment.

Strong governance ensures:

- Legal and regulatory compliance: Models meet standards set by industry regulations and internal policies.

- Consistent practices across hybrid cloud environments: Both public and private cloud systems follow the same risk and security protocols.

- Transparent decision-making: Teams and stakeholders can understand how AI models make predictions, increasing trust in AI outcomes.

Governance frameworks should include periodic audits, approval workflows for model updates, and accountability mechanisms for AI-driven decisions.

Monitor Model Performance Continuously

Continuous monitoring through AI observability allows organizations to track model behavior in real time. AI models in hybrid cloud setups can face issues like data drift, concept drift, or performance degradation due to evolving inputs across platforms.

Key monitoring practices include:

- Tracking prediction accuracy and identifying anomalies before they escalate.

- Detecting bias in outcomes to prevent unintended consequences.

- Logging model inputs, outputs, and intermediate processes to provide a complete view for auditing and risk analysis.

By integrating observability tools into hybrid cloud infrastructure, teams gain insights into model performance across multiple environments, ensuring proactive issue resolution.
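Data drift can be quantified by comparing the distribution of a feature in production against a reference sample. The sketch below uses the Population Stability Index (PSI), one common choice among several. The function name, the equal-width binning, and the default of 10 bins are assumptions for illustration.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a reference sample (`expected`) and a production sample (`actual`)."""
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / bins

    def proportions(sample: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in sample:
            idx = int((v - lo) / width)
            # Values outside the reference range fall into the edge bins.
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(sample), eps) for c in counts]

    p = proportions(expected)
    q = proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


if __name__ == "__main__":
    reference = [i / 100 for i in range(100)]
    production = [0.5 + i / 200 for i in range(100)]
    print(f"PSI: {population_stability_index(reference, production):.2f}")
```

A commonly cited rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but appropriate thresholds depend on the model and should be agreed with the risk team.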

Prioritize AI Model Explainability

AI model explainability enables teams to understand why a model makes specific predictions. In regulated industries, this transparency is essential for accountability and compliance.

Practical approaches include:

- Using interpretability techniques like SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) to identify which features influence predictions.

- Creating dashboards that visualize model decisions in simple terms for business and compliance teams.

- Documenting decision logic to support regulatory reporting and audits.

Explainable models not only meet regulatory expectations but also improve stakeholder confidence in AI-driven decisions.

Integrate AI With Risk Analytics

Combining risk analytics with AI provides a deeper understanding of potential issues and their operational impact. This approach allows organizations to anticipate risks, prioritize mitigation efforts, and make informed decisions.

Benefits of integrating risk analytics include:

- Highlighting areas where AI predictions deviate from expected outcomes.

- Quantifying operational and financial impact of model errors.

- Supporting risk-based decision-making to maintain hybrid cloud security.

This integration helps teams act quickly, ensuring AI-driven processes remain reliable and secure.
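Quantifying impact can start with something as simple as expected loss, the estimated probability of a failure multiplied by its estimated cost. The sketch below ranks risks that way. The `ModelRisk` class, the risk names, and all figures are hypothetical and stand in for estimates a risk team would supply.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelRisk:
    name: str
    probability: float  # estimated likelihood per review period, between 0 and 1
    impact: float       # estimated cost if the risk materializes

    def __post_init__(self) -> None:
        if not 0.0 <= self.probability <= 1.0:
            raise ValueError("probability must be between 0 and 1")

    @property
    def expected_loss(self) -> float:
        return self.probability * self.impact


def prioritize(risks: List[ModelRisk]) -> List[ModelRisk]:
    """Order risks from highest to lowest expected loss."""
    return sorted(risks, key=lambda r: r.expected_loss, reverse=True)


if __name__ == "__main__":
    # Illustrative figures only.
    register = [
        ModelRisk("drift in fraud model", 0.30, 200_000),
        ModelRisk("biased credit decision", 0.05, 2_000_000),
        ModelRisk("stale feature pipeline", 0.50, 20_000),
    ]
    for risk in prioritize(register):
        print(f"{risk.name}: {risk.expected_loss:,.0f}")
```

Expected loss is a deliberately simple ranking: a rare but costly failure can outrank a frequent but cheap one, which is often the point of doing the calculation.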

Maintain Compliance and Audit Readiness

Proper documentation and logging are critical for demonstrating responsible AI use. AI compliance requires detailed records of model development, deployment, and decision outputs.

Best practices include:

- Maintaining end-to-end audit trails of AI decisions.

- Storing logs securely in a hybrid cloud setup to prevent tampering.

- Periodically reviewing and updating documentation to reflect changes in models or regulations.

Clear compliance records make audits efficient and help organizations demonstrate accountability to regulators and stakeholders.
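One way to make an audit trail tamper-evident is to chain entries together with hashes, so that changing any earlier entry invalidates every later one. The sketch below illustrates the idea with an in-memory list. The function names and entry fields are assumptions, and a hash chain detects tampering rather than preventing it, so it complements access controls and secure storage.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash: str, entry: Dict[str, Any]) -> str:
    # Canonical JSON so the hash does not depend on key order.
    body = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(chain: List[Dict[str, Any]], entry: Dict[str, Any]) -> None:
    """Append an entry whose hash covers both its content and the previous hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({
        "entry": entry,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, entry),
    })


def verify_chain(chain: List[Dict[str, Any]]) -> bool:
    """Return True only if every link matches its content and its predecessor."""
    prev_hash = GENESIS
    for link in chain:
        if link["prev_hash"] != prev_hash:
            return False
        if link["hash"] != _digest(prev_hash, link["entry"]):
            return False
        prev_hash = link["hash"]
    return True


if __name__ == "__main__":
    log: List[Dict[str, Any]] = []
    append_entry(log, {"decision": "flag", "score": 0.91})
    append_entry(log, {"decision": "approve", "score": 0.12})
    print(verify_chain(log))
    log[0]["entry"]["score"] = 0.10  # simulate tampering
    print(verify_chain(log))
```

Periodically copying the latest hash to a separate system gives auditors an independent checkpoint to verify the chain against.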

Regularly Update and Validate Models

AI models are not static—they must evolve with changing data and operational conditions. Regular testing and updates help maintain accuracy, reduce bias, and prevent drift.

Effective strategies include:

- Conducting periodic bias and fairness assessments to ensure ethical outcomes.

- Validating model performance against real-world data and updating as needed.

- Implementing automated retraining pipelines where feasible to minimize downtime.

Consistent validation strengthens AI governance and ensures predictions remain reliable, reducing operational and regulatory risk.
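A periodic bias assessment can begin with group-level selection rates. The sketch below computes two widely used summary metrics, the demographic parity difference and the disparate impact ratio. The function names and the sample data are hypothetical, and which metric and threshold are appropriate depends on the use case and applicable regulation.

```python
from collections import defaultdict
from typing import Dict, Hashable, Sequence


def selection_rates(outcomes: Sequence[int], groups: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Share of positive outcomes (1) within each group."""
    totals: Dict[Hashable, int] = defaultdict(int)
    positives: Dict[Hashable, int] = defaultdict(int)
    for outcome, group in zip(outcomes, groups):
        totals[group] += 1
        positives[group] += 1 if outcome else 0
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(outcomes: Sequence[int], groups: Sequence[Hashable]) -> float:
    """Gap between the highest and lowest group selection rates (0 means parity)."""
    rates = selection_rates(outcomes, groups)
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(outcomes: Sequence[int], groups: Sequence[Hashable]) -> float:
    """Lowest group selection rate divided by the highest (1 means parity)."""
    rates = selection_rates(outcomes, groups)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


if __name__ == "__main__":
    # Illustrative data: 1 = approved, 0 = declined.
    approved = [1, 1, 1, 0, 1, 0, 0, 0]
    segment = ["A", "A", "A", "A", "B", "B", "B", "B"]
    print(selection_rates(approved, segment))
    print(demographic_parity_difference(approved, segment))
    print(disparate_impact_ratio(approved, segment))
```

Running such checks on every retrained model version, and logging the results, gives the validation process a measurable fairness record over time.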

Conclusion

Adopting explainable AI (XAI) and robust AI risk management practices is essential for organizations operating in hybrid cloud environments. By combining AI observability, AI model explainability, and risk analytics, teams can ensure models are accurate, secure, and compliant.

Clear AI governance and AI compliance measures help maintain accountability, reduce operational risks, and build trust with stakeholders. Regular monitoring and model updates prevent drift and bias, safeguarding both data and business outcomes.

Organizations that integrate these practices not only meet regulatory standards but also gain a competitive advantage by making AI-driven decisions transparent and reliable.
