Introduction

Why do real time payment systems still face compliance disputes and false alerts despite advanced AI adoption?

Financial institutions depend on AI Model Monitoring to evaluate every transaction in seconds. Yet many payment teams cannot clearly explain why a model blocked a genuine customer or approved a risky transfer. This lack of clarity creates operational delays, regulatory pressure, and loss of customer trust. Speed alone no longer defines success. Transparency and control now matter just as much.

The trust gap inside payment decisions

Modern AI in payment processing analyzes complex behavior patterns, but the reasoning often remains hidden from risk and compliance teams. When auditors ask for evidence, organizations struggle to justify model actions inside real time payment systems. This gap turns automated monitoring into a liability. Explainable AI (XAI) addresses this problem by revealing how models interpret risk signals and how those signals change over time.

Fraud methods evolve quickly, and models can drift without notice. Real time fraud detection requires continuous visibility into model behavior, not occasional reviews. Institutions need monitoring that shows what the model learned, why performance shifted, and how to correct it before customers are affected. AI Model Monitoring supported by explainability builds this discipline and helps payment leaders balance speed, accuracy, and compliance.

This blog explores how transparency-driven monitoring strengthens payment ecosystems and turns AI decisions into defensible, reliable actions.

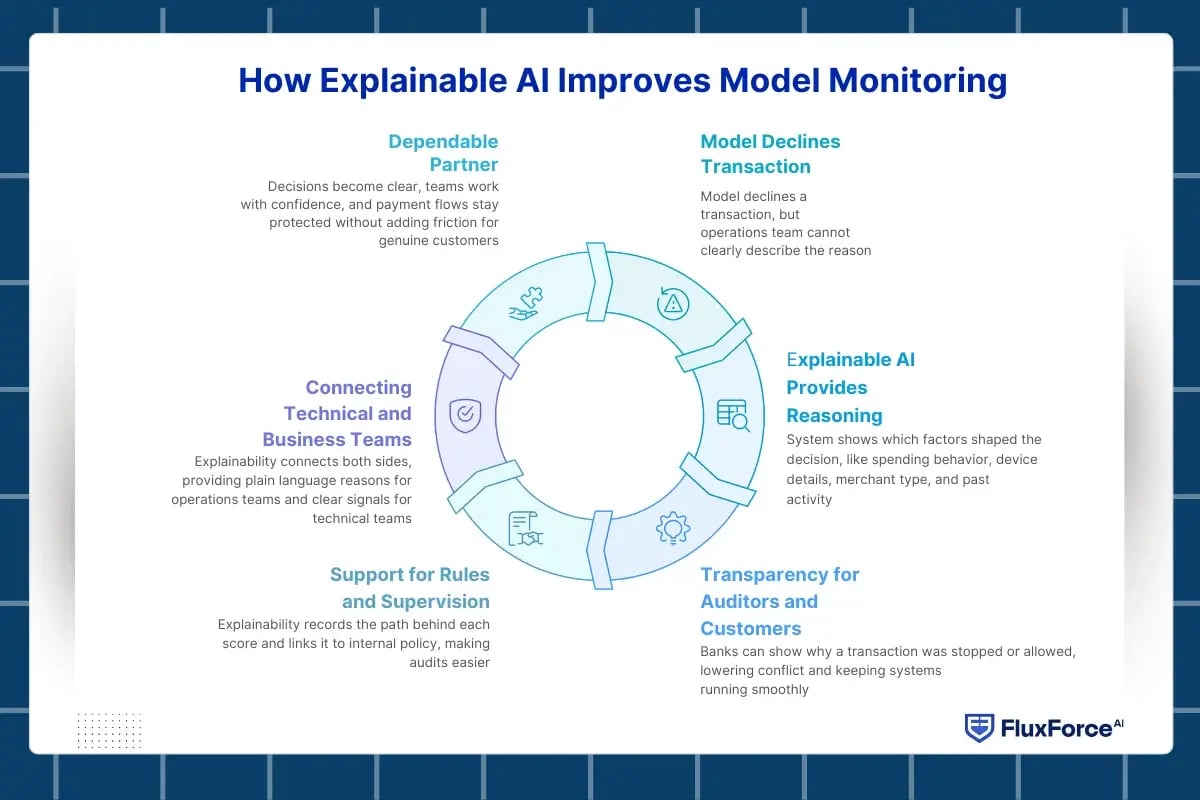

How Explainable AI Improves Model Monitoring

Why do teams still hesitate to trust AI decisions in real time payment systems?

Most monitoring tools show numbers but do not explain what they mean. A model may decline a transaction, yet the operations team cannot clearly describe the reason to auditors or customers. This gap slows response and creates risk. Explainable AI improves model monitoring because it supplies clear reasoning, not only alerts on a screen.

From alerts to understanding

Explainable AI for payments changes the way teams view model results. Instead of a simple approve or decline signal, the system shows which factors shaped the decision. Spending behavior, device details, merchant type, and past activity become visible parts of the story. AI Model Monitoring then turns into a practical tool that helps investigators decide the next step.
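As a minimal sketch of what "showing which factors shaped the decision" can look like, the example below assumes a simple linear risk score, where each feature's contribution is its weight multiplied by its deviation from a baseline value. The feature names, weights, and baselines are hypothetical, and production models that are not linear would need a dedicated attribution method instead.

```python
from typing import Dict, List, Tuple

# Hypothetical weights and baselines for an illustrative linear risk score.
WEIGHTS = {
    "amount_zscore": 0.8,
    "new_device": 1.5,
    "merchant_risk": 1.0,
    "days_since_last_txn": -0.05,
}
BASELINE = {
    "amount_zscore": 0.0,
    "new_device": 0.0,
    "merchant_risk": 0.2,
    "days_since_last_txn": 3.0,
}


def explain(txn: Dict[str, float]) -> List[Tuple[str, float]]:
    """Return per-feature contributions, largest absolute impact first."""
    contributions = {
        name: WEIGHTS[name] * (txn[name] - BASELINE[name]) for name in WEIGHTS
    }
    return sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)


txn = {
    "amount_zscore": 2.5,
    "new_device": 1.0,
    "merchant_risk": 0.7,
    "days_since_last_txn": 1.0,
}
for feature, contribution in explain(txn):
    print(f"{feature:>22}: {contribution:+.2f}")
```

An investigator reading this output sees the unusual amount and the new device at the top of the list, which is the kind of ordering that turns a bare score into a next step.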

Transparency as everyday protection

Payment teams need to act fast, but they also need to act with proof. AI transparency in payments allows banks to show why a transaction was stopped or allowed. When customers question a decision, staff can rely on clear explanations instead of guesswork. This approach lowers conflict between fraud teams and service teams and keeps real time payment systems running smoothly.

Support for rules and supervision

AI governance in financial services demands evidence that models follow internal policy and regulatory rules day after day. With real-time model monitoring, explainability records the path behind each score and links it to internal policy. Audits become easier because teams can present simple and direct reasons for their actions.

Bringing technical and business teams together

Data specialists speak in model terms, while compliance officers speak in risk terms. Explainability connects both sides. Operations teams receive plain language reasons, and technical teams receive clear signals about model behavior. This shared view strengthens control across real time payment systems and reduces long investigation cycles.

Explainable monitoring turns AI into a dependable partner. Decisions become clear, teams work with confidence, and payment flows stay protected without adding friction for genuine customers.

Real-Time AI Monitoring for Financial Transactions

Real time payment systems do not pause for reviews or weekly checks. Transactions flow across cards, wallets, and instant transfers throughout the day. If monitoring waits for end-of-day reports, the damage is already done. Real-time AI monitoring for financial transactions ensures that model behavior is examined at the same speed as money movement.

The sections below look at how explainable AI strengthens model-driven fraud monitoring in live payment environments, from the perspective of a model monitoring dashboard.

Beyond simple performance numbers

Many institutions still judge models only by approval rate or fraud percentage. These numbers alone hide important risks. True AI Model Monitoring looks deeper:

- Are risk scores stable across channels?

- Are certain merchants suddenly receiving unusual decisions?

- Is customer behavior being interpreted correctly?

- Are input data fields arriving with the same quality as before?

Answering these questions protects revenue and customer trust at the same time.
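The last question in the list, whether input fields still arrive with the same quality, can be sketched as a comparison of missing-value rates between a baseline window and the current window. The field names, the record layout, and the 5% tolerance are assumptions chosen for illustration.

```python
from typing import Dict, List, Optional

Record = Dict[str, Optional[object]]


def missing_rate(records: List[Record], field: str) -> float:
    """Share of records where the field is absent or None."""
    if not records:
        return 0.0
    missing = sum(1 for r in records if r.get(field) is None)
    return missing / len(records)


def quality_alerts(baseline: List[Record], current: List[Record],
                   fields: List[str], tolerance: float = 0.05) -> Dict[str, float]:
    """Return fields whose missing rate rose by more than `tolerance`."""
    alerts = {}
    for field in fields:
        increase = missing_rate(current, field) - missing_rate(baseline, field)
        if increase > tolerance:
            alerts[field] = round(increase, 4)
    return alerts


# Illustrative data: device_id goes missing far more often in the current window.
baseline = [{"device_id": "a1", "mcc": "5411"}] * 95 + [{"device_id": None, "mcc": "5411"}] * 5
current = [{"device_id": "a1", "mcc": "5411"}] * 70 + [{"device_id": None, "mcc": "5411"}] * 30
print(quality_alerts(baseline, current, ["device_id", "mcc"]))
```

The same pattern extends to the other questions: replace the missing rate with an average risk score per channel or a decline rate per merchant and compare windows in the same way.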

Understanding decisions instead of counting alerts

When alerts rise, teams often struggle to know the cause. Was it a new fraud pattern or a model misunderstanding? Explainable AI in real-time payment systems provides the context behind each decision. Investigators can see which transaction details influenced the score and whether those reasons make business sense.

Model drift detection in real time

Payment environments evolve constantly. Holiday seasons, new products, or changes in customer habits can confuse even well-built models. Model drift detection in real time compares current outcomes with established behavior and highlights early warning signs such as:

- Gradual increase in customer complaints

- Sudden change in approval mix

- Different results for similar profiles

- Unusual concentration around specific devices or regions

With explainability, teams understand not only that drift occurred but also why it occurred.
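One common way to compare current outcomes with established behavior is the Population Stability Index (PSI), which measures how far a score distribution has moved between two windows. The sketch below is an illustration under stated assumptions, not a description of any particular product: the bin edges and sample scores are hypothetical.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float],
        edges: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two samples over fixed bin edges."""

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * (len(edges) - 1)
        for v in values:
            for i in range(len(counts)):
                last = i == len(counts) - 1
                # Bins are half-open, except the last one which includes its upper edge.
                if edges[i] <= v < edges[i + 1] or (last and v == edges[-1]):
                    counts[i] += 1
                    break
            # Values outside the edges are ignored in this sketch.
        total = sum(counts) or 1
        # Floor each share at eps so the logarithm stays defined for empty bins.
        return [max(c / total, eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
last_month = [0.1] * 50 + [0.3] * 30 + [0.6] * 15 + [0.9] * 5
today = [0.1] * 30 + [0.3] * 30 + [0.6] * 25 + [0.9] * 15
print(f"PSI = {psi(last_month, today, edges):.3f}")
```

A widely quoted rule of thumb treats values above roughly 0.25 as a significant shift, but any threshold should be tuned to the portfolio. PSI only reports that the distribution moved. The explanation layer is what tells the team which features moved it.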

Operational rhythm for payment teams

Effective monitoring follows a clear rhythm:

- Review explanations for high-risk decisions each day

- Compare today's patterns with last week's patterns

- Validate that model reasons match policy rules

- Feed investigator feedback back into the system

This discipline turns payment system risk monitoring into a controlled business process.

Linking monitoring with compliance needs

Every declined or approved payment may later be questioned by auditors or customers. AI compliance for payment systems requires that banks show a logical path from transaction data to decision. Real-time monitoring stores that path automatically, reducing manual effort and strengthening regulatory confidence.
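A minimal sketch of storing that path is a structured record per decision, with a digest over its content so later changes can be detected. The field names, including `policy_ref` and `model_version`, are hypothetical and would map to whatever identifiers an institution already uses.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict


def build_audit_record(txn_id: str, decision: str, score: float,
                       contributions: Dict[str, float], model_version: str,
                       policy_ref: str) -> Dict[str, object]:
    """Assemble a decision record and attach a SHA-256 digest of its content."""
    record: Dict[str, object] = {
        "txn_id": txn_id,
        "decision": decision,
        "score": score,
        "contributions": contributions,
        "model_version": model_version,
        "policy_ref": policy_ref,  # hypothetical identifier of the internal policy applied
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    record["digest"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return record


def verify(record: Dict[str, object]) -> bool:
    """Recompute the digest to check that the record has not been altered."""
    body = {k: v for k, v in record.items() if k != "digest"}
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest() == record["digest"]


record = build_audit_record(
    txn_id="txn-001", decision="decline", score=0.91,
    contributions={"new_device": 1.5, "amount_zscore": 2.0},
    model_version="fraud-model-v7", policy_ref="POL-12",
)
print(json.dumps(record, indent=2))
```

A plain digest only detects accidental or careless edits. A setting that needs tamper evidence would add signing or write-once storage on top of this.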

Real-time monitoring supported by explainability protects both sides of the payment equation. Customers receive smoother experiences, and banks gain clear control over automated decisions without slowing innovation.

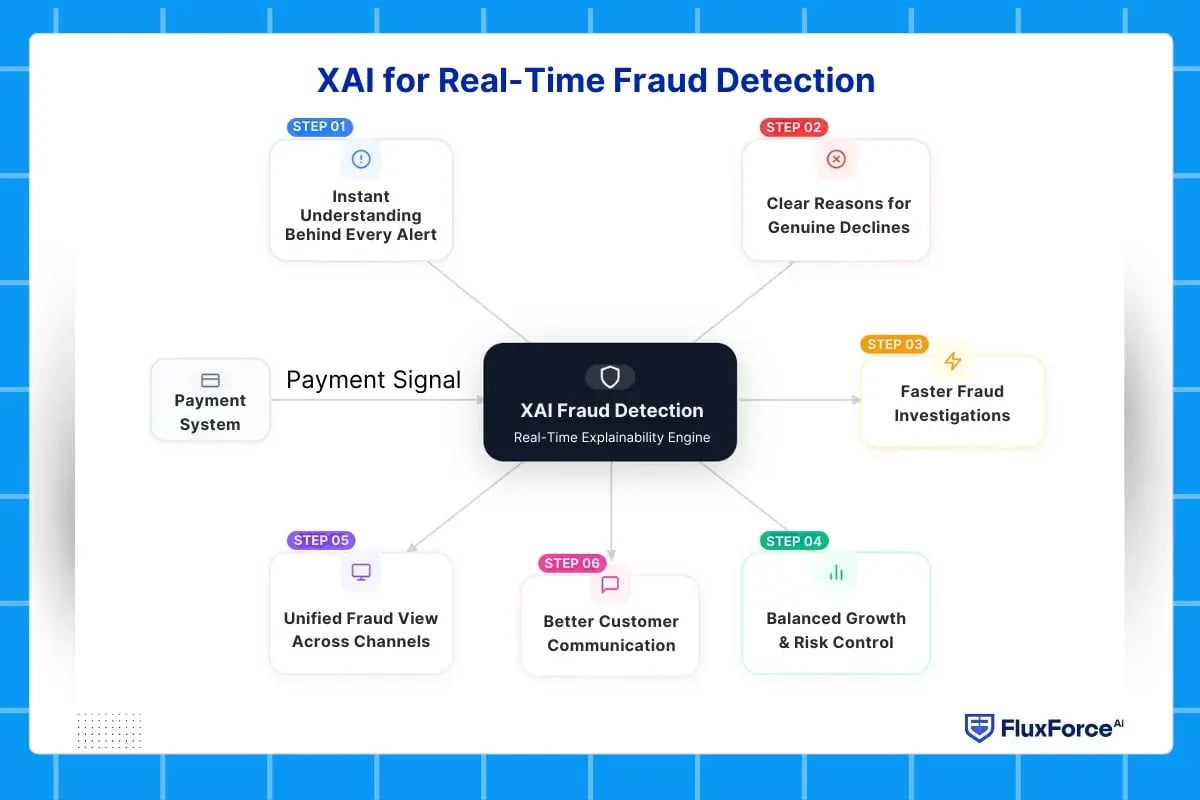

XAI for Real-Time Fraud Detection

Fraud detection in real time payment systems no longer depends only on identifying risky transactions.

Banks need to understand the reason behind every alert before taking action. Explainable AI connects detection with reasoning, helping teams see what the model observed and why a payment was flagged. This clarity improves speed, accuracy, and customer trust while keeping controls aligned with compliance.

Instant understanding behind every fraud alert

In real time payment systems, alerts arrive continuously, but a risk score alone does not guide action. XAI for real-time fraud detection reveals the exact signals that influenced the model, such as transaction behavior, device consistency, location patterns, and past activity. Operations teams move from reacting to alerts toward understanding the logic behind them.

Clear reasons for genuine payment declines

Legitimate payments often fail because models rely on broad correlations that remain hidden from users and analysts. Explainability exposes the specific factor that triggered the decision and shows whether that factor truly represents risk. This insight allows banks to refine controls instead of applying rigid blocks across all customers.

Faster investigations for fraud analysts

Analysts lose valuable time collecting context from different systems. With explainability embedded into monitoring AI models in payment gateways, the platform delivers structured information in one view:

- Main drivers behind the risk score

- Comparable historical transactions

- Customer behavior timeline

- Business-friendly explanation of the decision

This approach shortens review cycles and brings consistency across teams.

Better communication with customers

Customer support teams need simple and accurate explanations when a payment fails. Explainable AI for payments provides human-readable reasons without exposing sensitive model logic. Clear communication reduces frustration, repeat queries, and chargeback disputes.
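One way to provide readable reasons without exposing model logic is to map the strongest risk-increasing drivers to approved wording and fall back to a generic message for anything unmapped. The feature names and the reason texts below are hypothetical.

```python
from typing import Dict, List, Tuple

# Hypothetical mapping from internal feature names to customer-facing wording.
REASON_TEXT: Dict[str, str] = {
    "new_device": "The payment was made from a device we have not seen before.",
    "amount_zscore": "The amount was unusual compared with recent activity.",
    "geo_mismatch": "The location did not match recent activity on the account.",
}
FALLBACK = "The payment needed an additional security check."


def customer_reasons(contributions: List[Tuple[str, float]], top_n: int = 2) -> List[str]:
    """Translate the strongest risk-increasing drivers into plain-language reasons."""
    risky = sorted((c for c in contributions if c[1] > 0),
                   key=lambda c: c[1], reverse=True)
    reasons: List[str] = []
    for name, _ in risky[:top_n]:
        # Unmapped features never leak their internal name to the customer.
        text = REASON_TEXT.get(name, FALLBACK)
        if text not in reasons:
            reasons.append(text)
    return reasons or [FALLBACK]


drivers = [("amount_zscore", 2.0), ("new_device", 1.5),
           ("velocity_1h", 0.4), ("tenure_years", -0.3)]
for line in customer_reasons(drivers):
    print("-", line)
```

Keeping the mapping in one reviewed table lets compliance approve the wording once, while support staff only ever see the translated text.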

Balanced growth and risk control

Payment businesses aim to increase approvals without inviting fraud. Explainability shows how each rule or model change affects outcomes before deployment. Product and risk teams can expand to new segments with confidence instead of trial-and-error decisions.

Unified fraud view across channels

Cards, instant transfers, wallets, and merchant payouts generate different risk signals. XAI creates a common interpretation layer so that all channels follow the same reasoning model. This consistency strengthens protection across the entire payment ecosystem.

Explainable AI turns fraud detection into a transparent and manageable process. Teams no longer depend on blind scores. They work with clear reasons, measured actions, and controlled decisions.

How to Detect Model Drift in Payment Systems?

Payment behavior never stays constant. New merchants, seasonal spending, and changing fraud tactics slowly shift the data that AI models rely on.

When this shift goes unnoticed, approval rates fall and false alerts rise. Explainability strengthens AI model monitoring by showing not only that drift occurred, but also where and why it started.

Behavioral signals that indicate drift

Drift appears first in small changes. Transaction amounts, device patterns, and customer routines begin to differ from the training data. Model drift detection in real time uses explainability to compare present decisions with earlier logic. When the same profile receives a different risk explanation, the system raises an early warning.
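Comparing present explanations with earlier ones can be sketched as a per-feature comparison of average attribution between a baseline window and the current window. This assumes each decision already carries a dictionary of feature contributions. The feature names and the 0.5 threshold are illustrative.

```python
from statistics import mean
from typing import Dict, List

Explanation = Dict[str, float]  # feature name -> contribution to the risk score


def mean_abs_attribution(explanations: List[Explanation]) -> Dict[str, float]:
    """Average absolute contribution of each feature across a window of decisions."""
    features = {f for e in explanations for f in e}
    return {f: mean(abs(e.get(f, 0.0)) for e in explanations) for f in features}


def attribution_shift(baseline: List[Explanation], current: List[Explanation],
                      threshold: float = 0.5) -> Dict[str, float]:
    """Return features whose mean absolute attribution moved by more than `threshold`."""
    before = mean_abs_attribution(baseline)
    after = mean_abs_attribution(current)
    shifts: Dict[str, float] = {}
    for feature in sorted(set(before) | set(after)):
        delta = after.get(feature, 0.0) - before.get(feature, 0.0)
        if abs(delta) > threshold:
            shifts[feature] = delta
    return shifts


baseline_window = [
    {"amount_zscore": 1.0, "new_device": 0.2},
    {"amount_zscore": 0.8, "new_device": 0.0},
]
current_window = [
    {"amount_zscore": 0.9, "new_device": 1.4},
    {"amount_zscore": 1.1, "new_device": 1.0},
]
print(attribution_shift(baseline_window, current_window))
```

In this example the device signal has become far more influential while the amount signal is stable, which points the investigation at device data rather than at the model as a whole.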

Understanding the cause behind performance drop

Traditional monitoring only reports declining accuracy. XAI connects the decline to specific features such as merchant category, geography, or payment method. Teams can see whether the change comes from genuine customer behavior or from a new fraud strategy. This understanding prevents blind retraining.

Continuous validation inside payment gateways

Monitoring AI models in payment gateways requires daily validation. Explainability tracks which factors influence approvals across millions of transactions. If a single factor begins to dominate decisions, governance teams receive clear evidence that the model is losing balance.
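A simple balance check, sketched below, counts how often each feature is the largest driver of a decision and flags the case where one feature leads in more than a chosen share of decisions. The 60% limit and the feature names are assumptions for illustration.

```python
from collections import Counter
from typing import Dict, List, Optional, Tuple


def top_driver_shares(explanations: List[Dict[str, float]]) -> Dict[str, float]:
    """Share of decisions in which each feature had the largest absolute contribution."""
    tops = Counter(max(e, key=lambda f: abs(e[f])) for e in explanations if e)
    total = sum(tops.values())
    return {f: n / total for f, n in tops.items()} if total else {}


def dominant_feature(explanations: List[Dict[str, float]],
                     limit: float = 0.6) -> Optional[Tuple[str, float]]:
    """Return (feature, share) when one feature leads more than `limit` of decisions."""
    shares = top_driver_shares(explanations)
    if not shares:
        return None
    feature, share = max(shares.items(), key=lambda kv: kv[1])
    return (feature, share) if share > limit else None


daily_explanations = [
    {"merchant_risk": 1.8, "amount_zscore": 0.4},
    {"merchant_risk": 2.1, "new_device": 0.3},
    {"merchant_risk": 1.5, "amount_zscore": 1.2},
    {"amount_zscore": 1.9, "merchant_risk": 0.2},
]
print(dominant_feature(daily_explanations))
```

Run daily, a check like this gives governance teams a concrete number to cite when they argue that a model has started to lean on one signal.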

Collaboration between risk and data teams

Explainability creates a shared view for different functions. Data scientists analyze technical metrics, while risk managers review business impact. The same explanation layer serves both groups, turning AI risk management in fintech into a coordinated process instead of isolated reviews.

Safe retraining decisions

Retraining a payment model without context can increase fraud exposure. XAI shows which segments require updates and which remain stable. Banks adjust only the necessary parts of the model, protecting revenue and customer experience.

Regulatory readiness through documented reasoning

Supervisors expect proof that institutions control model change. AI compliance for payment systems becomes simpler when every drift event includes an explanation trail. Reports show how the model behaved, what shifted, and which action restored stability.

Explainability transforms drift detection from a technical alert into a guided business decision. Real time payment systems remain reliable even when customer behavior and fraud methods evolve.

Conclusion

Real time payment systems demand speed, accuracy, and accountability at the same time. Traditional monitoring methods can detect anomalies, but they often fail to explain them. Explainable AI changes this by connecting every model decision with clear reasoning. AI model monitoring becomes a business capability instead of a technical checkpoint.

Banks that adopt explainability gain stronger control over fraud detection, compliance, and customer experience. Teams understand why a payment is blocked, how risk scores are formed, and when models begin to drift. This understanding reduces false positives, protects revenue, and builds trust with regulators and users.

The future of payments depends on systems that are both intelligent and transparent. XAI ensures that innovation does not come at the cost of governance. Financial institutions that combine real time analytics with explainability will manage risk with confidence and scale digital payments without losing visibility.