
Introduction

For businesses today, automation is a necessity that drives more growth with less investment. In regulated sectors, its impact is invaluable.

Meeting stringent compliance deadlines, working through volumes of complex checklists, and maintaining detailed documentation create daunting pressure when AI compliance automation is not properly deployed.

McKinsey & Company reports that AI and automation are helping 88% of regulated companies meet regulatory targets and achieve efficiency gains of up to 15-20% in operational costs.

But is it safe to offload core workflows to autonomous machines?

Not unless your tools provide transparency behind every automated decision. Only automated actions that are explainable in themselves can satisfy modern auditors.

In this blog, we show why Explainable AI (XAI) matters for automation in regulated industries and how to build an ecosystem that aligns with regulatory bodies without expanding your human workforce.

XAI drives safer automation in regulated industries by boosting transparency, compliance, and trust.

Why explainable AI is required for regulated industries

Whether in banking, insurance, or any other regulated enterprise, sustainable automation rests on:

- Transparency in actions

- Accountability of the decision

- Compliance with relevant legal requirements

Across industries, 51% of organizations using AI have experienced at least one negative consequence, McKinsey says. Not every failure is a performance problem: explainability gaps can leave automated outcomes indefensible during regulatory audits even when the model itself performs well.

AI models often operate as “black boxes,” processing complex data through hidden layers. This makes it difficult, if not impossible, for human supervisors to justify specific outcomes. Deploying explainable AI in regulated industries ensures:

- Safe, automated compliance

- Trustworthy AI

- Optimized human-in-the-loop monitoring

- Quick and easy root cause analysis

How explainable AI improves regulatory compliance

In AI-driven regulatory compliance, an explainability layer follows a four-step process that transforms operations from a "black box" into a regulator-friendly ecosystem.

Here’s how explainability re-engineers automated compliance systems:

Step 1: Feature Attribution (The "Why" behind the Loan)

In regulated sectors like banking, a black-box model might reject a mortgage application without a clear reason. An explainable layer identifies the specific variables, such as debt-to-income ratio or recent credit inquiries, that triggered the rejection.

This level of AI model transparency ensures the bank can prove to regulators that the decision was based on financial risk, not prohibited discriminatory factors.
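To make feature attribution concrete, here is a minimal sketch for the simplest case: a linear credit score. For a linear model, each feature's contribution relative to a "typical" baseline applicant is exactly its weight times the feature's deviation from that baseline, and those contributions add up to the full score difference. The weights, baseline values, and feature names are hypothetical. For nonlinear models, tools such as SHAP estimate comparable additive contributions; this sketch deliberately uses the linear special case so the arithmetic is exact.

```python
# Illustrative linear credit model. All weights, baselines, and feature
# names are hypothetical, chosen only to demonstrate attribution.
WEIGHTS = {
    "debt_to_income": -4.0,
    "recent_credit_inquiries": -0.5,
    "years_of_credit_history": 0.2,
}
BIAS = 1.0
# Reference ("typical") applicant against which contributions are measured.
BASELINE = {
    "debt_to_income": 0.30,
    "recent_credit_inquiries": 1,
    "years_of_credit_history": 8,
}


def score(applicant):
    """Linear score: approve if positive, reject otherwise."""
    return BIAS + sum(WEIGHTS[f] * applicant[f] for f in WEIGHTS)


def attribute(applicant):
    """For a linear model, weight * (value - baseline) is each feature's exact
    contribution to the gap between this applicant's score and the baseline's."""
    return {f: WEIGHTS[f] * (applicant[f] - BASELINE[f]) for f in WEIGHTS}


def rejection_reasons(applicant, k=2):
    """Features that pushed the score down the most, strongest first."""
    negative = [(f, c) for f, c in attribute(applicant).items() if c < 0]
    negative.sort(key=lambda fc: fc[1])
    return [f for f, _ in negative[:k]]


applicant = {"debt_to_income": 0.55, "recent_credit_inquiries": 5,
             "years_of_credit_history": 9}
print(score(applicant) > 0)          # False: application rejected
print(rejection_reasons(applicant))  # ['recent_credit_inquiries', 'debt_to_income']
```

Because the contributions sum exactly to the score gap, the reasons reported to a regulator are guaranteed to account for the whole decision, which is the property an auditor cares about.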

Step 2: Local Interpretability (Individual Accountability)

When a high-risk trade is flagged for potential money laundering, AI compliance automation must provide an immediate rationale for that specific alert. The explainable layer generates a "reasoning report" for the compliance officer, detailing the exact transaction patterns that deviated from the norm.

This allows for rapid human-in-the-loop verification, ensuring the bank meets Anti-Money Laundering (AML) requirements.
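A "reasoning report" for a single alert can be sketched as a comparison of the flagged transaction against the customer's own history. The sketch below uses a z-score as a simple stand-in for "deviated from the norm"; the feature names, the threshold of 3.0, and the scoring method are assumptions for illustration, and production AML systems typically rely on richer models.

```python
import statistics


def reasoning_report(history, txn, threshold=3.0):
    """Explain one flagged transaction against the customer's own history.

    history: feature -> list of past values for this customer
    txn:     feature -> value observed on the flagged transaction
    Returns one line per feature whose z-score magnitude meets the threshold,
    largest deviation first.
    """
    findings = []
    for feature, past in history.items():
        mean = statistics.mean(past)
        spread = statistics.pstdev(past)  # population std dev of past values
        if spread == 0:
            continue  # no variation in history, so a z-score is undefined
        z = (txn[feature] - mean) / spread
        if abs(z) >= threshold:
            line = f"{feature}: {txn[feature]} vs typical {mean:.1f} (z = {z:.1f})"
            findings.append((abs(z), line))
    findings.sort(reverse=True)
    return [line for _, line in findings]


# Hypothetical customer history and flagged transaction.
history = {
    "amount_usd": [100, 120, 80, 110, 90],
    "transfers_per_day": [1, 2, 1, 2, 1],
    "countries_involved": [1, 1, 2, 1, 1],
}
txn = {"amount_usd": 9500, "transfers_per_day": 12, "countries_involved": 1}
for line in reasoning_report(history, txn):
    print(line)
```

Only the amount and transfer frequency appear in the report; the country count is within its normal range, so the compliance officer is not distracted by it.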

Step 3: Boundary Testing (Proactive AI Risk Management)

Before deploying a new trading algorithm, banks use XAI to perform "counterfactual" testing. By asking, "What if the market volatility increased by 5%?", the system shows how the AI's decision-making would shift.

This proactive AI risk management helps ensure the model does not behave unpredictably or take unauthorized risks during market stress, maintaining the integrity of the trade.
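The counterfactual question above can be sketched as a sweep: re-run the decision with volatility scaled up and record where the outcome flips. The mean-variance decision rule, its parameters, and the input values are hypothetical, and "increased by 5%" is read here as a relative 5% increase.

```python
def trade_decision(expected_return, volatility, risk_aversion=2.0):
    """Hypothetical mean-variance rule: buy only if the expected return
    exceeds a penalty proportional to variance."""
    penalty = risk_aversion * volatility ** 2
    return "BUY" if expected_return - penalty > 0 else "HOLD"


def counterfactual_sweep(base, shocks):
    """Scale volatility by (1 + shock) for each shock and record whether the
    decision differs from the unshocked baseline."""
    baseline = trade_decision(**base)
    report = []
    for shock in shocks:
        scenario = dict(base, volatility=base["volatility"] * (1 + shock))
        decision = trade_decision(**scenario)
        report.append((shock, decision, decision != baseline))
    return baseline, report


base = {"expected_return": 0.004, "volatility": 0.044}
baseline, report = counterfactual_sweep(base, [0.0, 0.05, 0.10])
print(baseline)
for row in report:
    print(row)
```

Here the decision flips from BUY to HOLD at a 5% volatility shock, which tells reviewers that this model sits close to its decision boundary before it ever reaches production.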

Step 4: Automated Audit Readiness

The final step converts these technical insights into standardized reports. Instead of manually reconstructing the logic for a Federal Reserve audit, the explainable layer provides an automated, timestamped trail of every decision and its justification.

This turns AI model transparency into a permanent record, allowing the bank to scale its operations without fear of gaps in its audit trail.
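A timestamped decision trail can be sketched as an append-only log. The hash chaining below, where each entry stores the hash of the previous one so later edits become detectable, is an added design choice rather than something the article specifies; the class and field names are hypothetical, and a real deployment would also need durable storage.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Hypothetical append-only, hash-chained decision log."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body):
        # Canonical JSON (sorted keys) so the same content always hashes the same.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def record(self, decision_id, outcome, reasons, model_version):
        entry = {
            "decision_id": decision_id,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "outcome": outcome,
            "reasons": reasons,
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self):
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail()
trail.record("loan-1042", "DECLINE", ["recent_credit_inquiries", "debt_to_income"], "credit-v3.1")
trail.record("loan-1043", "APPROVE", [], "credit-v3.1")
print(trail.verify())  # True
```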

Benefits of XAI in compliance automation

Implementing explainable AI in regulated industries delivers tangible operational and strategic advantages:

1. Reduced Audit Preparation Time: Traditional audits require weeks of manual documentation. XAI systems automatically generate decision logs with complete reasoning chains, reducing audit prep time by up to 70%. Compliance teams can instantly retrieve the "why" behind any automated action from months prior.

2. Lower Regulatory Penalties: When regulators can trace every decision back to its logic, organizations avoid costly fines. A single unexplained discriminatory loan denial can result in millions in penalties. XAI provides the documented proof that decisions were made on legitimate criteria, not biased factors.

3. Faster Issue Resolution: When an automated decision goes wrong, XAI pinpoints the exact feature or data point that caused the error. Instead of spending days debugging a black-box model, teams identify and fix problems in hours. This speed prevents small issues from escalating into compliance violations.

4. Increased Stakeholder Trust: Customers, regulators, and internal teams all benefit from transparency. When a patient's insurance claim is denied, XAI can explain which policy terms triggered the decision. This clarity reduces disputes and builds confidence in your automated systems.

5. Scalable Governance: As organizations deploy more AI models, managing them becomes complex. XAI creates a standardized framework for oversight. Whether you're monitoring 10 models or 100, the explainability layer ensures consistent governance without proportional increases in compliance staff.
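Scalable governance largely means applying the same checks to every model automatically. The sketch below runs a uniform set of rules over a model inventory; the registry fields, the rules themselves, and the 90-day audit window (chosen to match a quarterly cadence) are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class RegisteredModel:
    """Hypothetical inventory entry for one production model."""
    name: str
    owner: str
    explainer_enabled: bool
    last_explainability_audit: date


def governance_gaps(models, today, max_audit_age_days=90):
    """Apply identical checks to every model, whether there are 10 or 100."""
    gaps = {}
    for m in models:
        issues = []
        if not m.owner:
            issues.append("no accountable owner")
        if not m.explainer_enabled:
            issues.append("explainability layer disabled")
        if today - m.last_explainability_audit > timedelta(days=max_audit_age_days):
            issues.append("explainability audit overdue")
        if issues:
            gaps[m.name] = issues
    return gaps


inventory = [
    RegisteredModel("credit", "risk-team", True, date(2024, 5, 1)),
    RegisteredModel("aml", "", True, date(2024, 6, 1)),
    RegisteredModel("claims", "ops", False, date(2023, 12, 1)),
]
print(governance_gaps(inventory, today=date(2024, 6, 30)))
```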

Real-World Implementations of Explainable AI in Regulated Industries

1. Wells Fargo: Transparent Credit Decisions with LIFE Algorithm

Wells Fargo developed the LIFE (Linear Iterative Feature Embedding) algorithm using NVIDIA's GPU-accelerated machine learning to enhance loan processing systems. The bank faced challenges in explaining complex AI decisions to both customers and regulators.

Results: By applying more comprehensive and sophisticated analysis techniques, Wells Fargo increased approval rates, approving applicants who would have been declined by traditional models.

2. HSBC: Dynamic Risk Assessment for AML Compliance

In partnership with Google Cloud, HSBC launched "Dynamic Risk Assessment (DRA)" in 2021, powered by machine learning models that analyse transaction patterns, network behaviours, and KYC data. Traditional rule-based systems were generating overwhelming false positives while missing sophisticated money laundering schemes.

Measurable impact: HSBC saw a 60% reduction in alerts and a 2–4× increase in true positive detection across retail and commercial operations. Investigations now conclude in about eight days (down from weeks) with better detection of illicit networks.

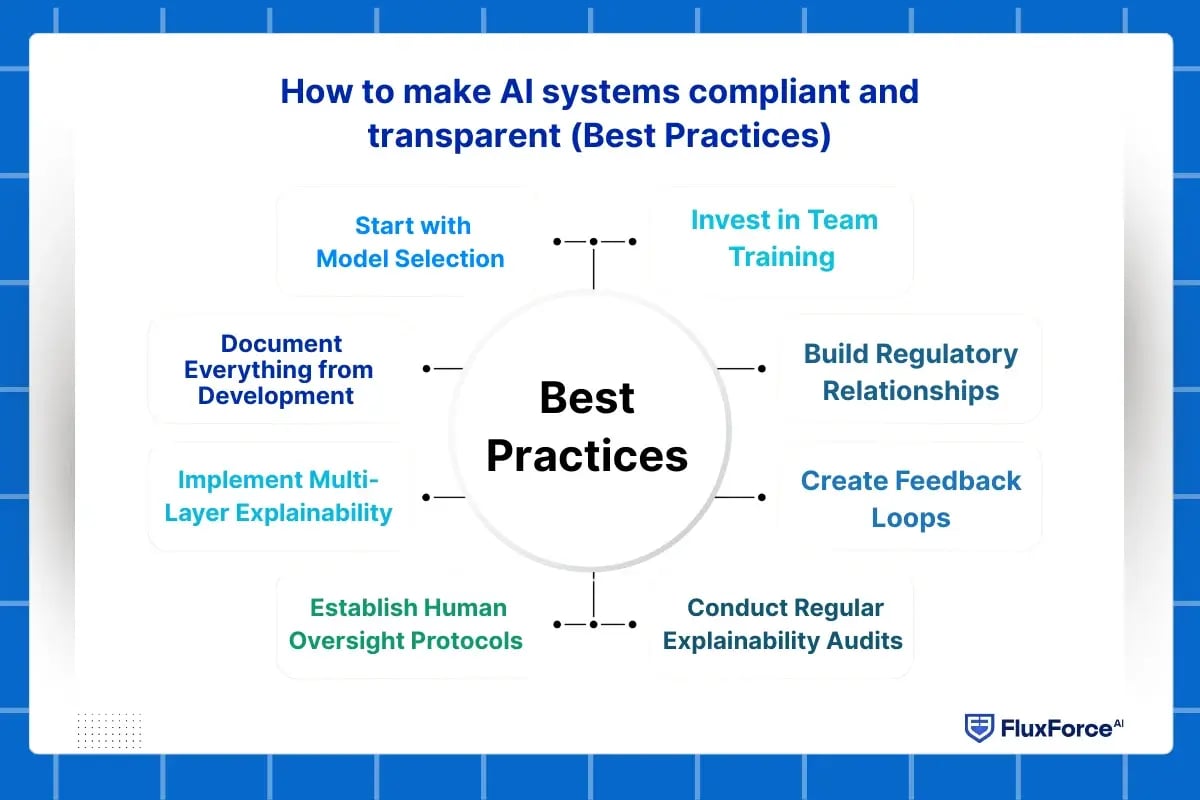

How to make AI systems compliant and transparent (Best Practices)

Building trustworthy AI requires more than adding an explainability tool. Here's a practical framework for regulated industries:

1. Start with Model Selection

Choose interpretable models when possible. Linear regression or decision trees may be less sophisticated than deep neural networks, but they're inherently more explainable. Reserve complex models only for problems where simpler approaches fail.

When a complex model is unavoidable, implement XAI from day one.
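The appeal of an inherently interpretable model is that the explanation is the model itself. The sketch below uses a tiny hand-built decision tree whose decision path doubles as the reason for each outcome; the tree structure, thresholds, and labels are hypothetical, not a recommended credit policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    feature: Optional[str] = None
    threshold: float = 0.0
    left: Optional["Node"] = None   # followed when value <= threshold
    right: Optional["Node"] = None  # followed when value > threshold
    label: Optional[str] = None     # set only on leaf nodes


def predict_with_path(node, x):
    """Walk the tree and record every comparison made along the way."""
    path = []
    while node.label is None:
        value = x[node.feature]
        if value <= node.threshold:
            path.append(f"{node.feature} = {value} <= {node.threshold}")
            node = node.left
        else:
            path.append(f"{node.feature} = {value} > {node.threshold}")
            node = node.right
    return node.label, path


# Hypothetical two-level tree.
tree = Node("debt_to_income", 0.43,
            left=Node("recent_credit_inquiries", 4,
                      left=Node(label="APPROVE"),
                      right=Node(label="REFER")),
            right=Node(label="DECLINE"))

label, path = predict_with_path(tree, {"debt_to_income": 0.35, "recent_credit_inquiries": 6})
print(label, path)
```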

2. Document Everything from Development

Create a decision log during model training:

- Which features were considered and why

- How training data was selected and validated

- What performance metrics matter for your use case

- Who approved the model for production

This documentation becomes your first line of defence during audits.
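The checklist above can be enforced as a structured record that blocks release until every item is filled in. The field names below simply mirror the four bullets and are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class ModelDecisionLog:
    """Hypothetical documentation record mirroring the development checklist."""
    feature_rationale: str = ""         # which features were considered and why
    training_data_provenance: str = ""  # how training data was selected and validated
    key_metrics: str = ""               # which performance metrics matter and why
    production_approver: str = ""       # who approved the model for production


def missing_documentation(log):
    """Return the names of checklist fields that are still empty."""
    return [f.name for f in fields(log) if not getattr(log, f.name).strip()]


log = ModelDecisionLog(
    feature_rationale="DTI and credit history; protected attributes excluded",
    key_metrics="AUC and approval-rate parity across segments",
)
print(missing_documentation(log))
```

A release pipeline could refuse to promote any model for which this list is non-empty.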

3. Implement Multi-Layer Explainability

Different stakeholders need different explanations:

- Technical teams need feature importance scores and model internals

- Compliance officers need plain-language summaries

- Regulators need standardized reports with legal references

- Customers need simple, jargon-free reasons

Your XAI system should generate all these formats automatically.
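One way to generate all these formats from a single source of truth is to render one set of feature contributions into audience-specific views. The plain-language phrasing, the policy reference codes, and the output structure below are hypothetical; real legal references would come from your compliance team.

```python
# Hypothetical mapping from model feature names to customer-facing wording.
PLAIN_LANGUAGE = {
    "debt_to_income": "Your monthly debt payments are high relative to your income.",
    "recent_credit_inquiries": "You have applied for credit several times recently.",
}


def render_explanations(decision, contributions, policy_refs):
    """contributions: feature -> signed contribution to the score.
    policy_refs: feature -> policy or legal reference supplied by compliance."""
    # Sort ascending so the most negative (most adverse) contributions come first.
    ranked = sorted(contributions.items(), key=lambda fc: fc[1])
    adverse = [f for f, c in ranked if c < 0]
    return {
        "technical": {f: round(c, 3) for f, c in ranked},
        "compliance": f"{decision}: driven mainly by {', '.join(adverse)}.",
        "regulator": [
            {"factor": f, "contribution": round(contributions[f], 3),
             "reference": policy_refs.get(f, "UNMAPPED")}
            for f in adverse
        ],
        "customer": [PLAIN_LANGUAGE.get(f, "Other factors in your application.")
                     for f in adverse],
    }


views = render_explanations(
    "DECLINE",
    {"debt_to_income": -1.0, "recent_credit_inquiries": -2.0, "years_of_credit_history": 0.2},
    {"debt_to_income": "CREDIT-POL-4.2"},
)
print(views["compliance"])
```

Marking unmapped factors explicitly, rather than silently omitting them, surfaces gaps in the regulator view before an auditor finds them.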

4. Establish Human Oversight Protocols

Define clear escalation rules:

- When does an automated decision require human review?

- Which types of outcomes need secondary approval?

- How quickly must a human respond to override requests?

For high-stakes decisions like loan denials or medical diagnoses, always include a human verification step.
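Escalation rules like these can be written down as an explicit routing function, so that "when does a human review this?" has a testable answer. The decision types, the confidence floor, and the response-time targets below are hypothetical placeholders for values your governance policy would set.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy values; real ones come from your governance policy.
HIGH_STAKES = {"loan_denial", "medical_diagnosis"}
CONFIDENCE_FLOOR = 0.90
REVIEW_SLA_HOURS = {"standard": 24, "high_stakes": 4}


@dataclass
class Routing:
    route: str                 # "auto" or "human_review"
    sla_hours: Optional[int]   # maximum time for a human to respond, if reviewed
    reason: str


def route(decision_type, confidence, explanation_available):
    if decision_type in HIGH_STAKES:
        return Routing("human_review", REVIEW_SLA_HOURS["high_stakes"], "high-stakes decision type")
    if not explanation_available:
        return Routing("human_review", REVIEW_SLA_HOURS["standard"], "no explanation generated")
    if confidence < CONFIDENCE_FLOOR:
        return Routing("human_review", REVIEW_SLA_HOURS["standard"], "model confidence below floor")
    return Routing("auto", None, "within automated limits")


print(route("loan_denial", 0.99, True))
print(route("address_change", 0.95, True))
```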

5. Conduct Regular Explainability Audits

Test your XAI system quarterly:

- Can you explain every decision type your AI makes?

- Are explanations consistent across similar cases?

- Do explanations align with your business rules?

- Can non-technical staff understand the reasoning?

Fix any gaps before regulators discover them.
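The consistency question ("are explanations consistent across similar cases?") can be checked automatically. This sketch compares the top reasons given for pairs of cases that business rules consider similar, using Jaccard overlap; the case data, the similarity pairing, and the 0.5 cut-off are assumptions for illustration.

```python
def jaccard(a, b):
    """Share of reasons two explanations have in common (1.0 if both empty)."""
    a, b = set(a), set(b)
    return len(a & b) / len(a | b) if a | b else 1.0


def inconsistent_pairs(cases, similar_pairs, min_overlap=0.5):
    """cases: case_id -> top reasons produced by the explainer.
    similar_pairs: (case_a, case_b) pairs judged similar by business rules.
    Flags pairs whose explanations share too few reasons."""
    flagged = []
    for a, b in similar_pairs:
        overlap = jaccard(cases[a], cases[b])
        if overlap < min_overlap:
            flagged.append((a, b, round(overlap, 2)))
    return flagged


cases = {
    "A": ["debt_to_income", "recent_credit_inquiries"],
    "B": ["debt_to_income", "recent_credit_inquiries"],
    "C": ["debt_to_income", "zip_code"],
    "D": ["employment_length"],
}
print(inconsistent_pairs(cases, [("A", "B"), ("A", "C"), ("A", "D")]))
```

Each flagged pair is a concrete case for a reviewer to examine, for example to ask why a location feature appears in one explanation but not in a near-identical one.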

6. Create Feedback Loops

When humans override AI decisions, capture the reason. This data helps you:

- Identify systematic biases in your model

- Update training data with edge cases

- Refine decision thresholds

- Demonstrate continuous improvement to regulators
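Captured override reasons only help if they are aggregated. The sketch below summarizes overrides by reason and by customer segment and flags reasons that dominate; the record fields, the example reasons and segments, and the 50% share threshold are hypothetical.

```python
from collections import Counter


def override_summary(overrides, total_decisions, min_share=0.5):
    """overrides: list of dicts with 'reason' and 'segment' keys captured when
    a human overrode the model. Returns the override rate, counts by reason and
    segment, and reasons accounting for at least min_share of all overrides."""
    reasons = Counter(o["reason"] for o in overrides)
    segments = Counter(o["segment"] for o in overrides)
    rate = len(overrides) / total_decisions if total_decisions else 0.0
    systematic = [r for r, n in reasons.most_common()
                  if overrides and n / len(overrides) >= min_share]
    return {"override_rate": rate, "by_reason": reasons,
            "by_segment": segments, "systematic": systematic}


overrides = [
    {"reason": "income_not_verified", "segment": "self_employed"},
    {"reason": "income_not_verified", "segment": "self_employed"},
    {"reason": "income_not_verified", "segment": "self_employed"},
    {"reason": "data_entry_error", "segment": "salaried"},
]
summary = override_summary(overrides, total_decisions=200)
print(summary["override_rate"], summary["systematic"])
```

A reason that is both dominant and concentrated in one segment, as here, is a candidate for new training data or a threshold review.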

7. Build Regulatory Relationships

Don't wait for an audit to engage with regulators. Share your XAI approach proactively. Many regulatory bodies offer guidance on AI governance. Demonstrating transparency early builds trust and may give you a voice in evolving standards.

8. Invest in Team Training

Your compliance team needs to understand how AI works at a basic level. Your data science team needs to understand regulatory requirements. Cross-training ensures both groups speak the same language when building explainable systems.

XAI empowers safer automation in regulated industries by enhancing transparency, trust, and decision-making.

Conclusion

The world has now moved into an era of autonomous efficiency, where manual processes can no longer keep pace with data growth. However, automation can either reinforce or undermine the regulatory frameworks that govern our most critical institutions.

In sectors like banking and healthcare, automation decisions must be both fast and reliable. Traditional AI models often act as “black boxes,” making it difficult to understand the logic behind a specific outcome. Explainable AI in regulated industries solves this problem by providing clear, auditable reasoning for each automated action.
