
Payment fraud prevention strategies are the dividing line between institutions that absorb 0.1% fraud losses and those writing off 0.6%. If you are still running the same rule-based thresholds you deployed three years ago, those numbers are not abstract. They are showing up in your quarterly reviews right now.

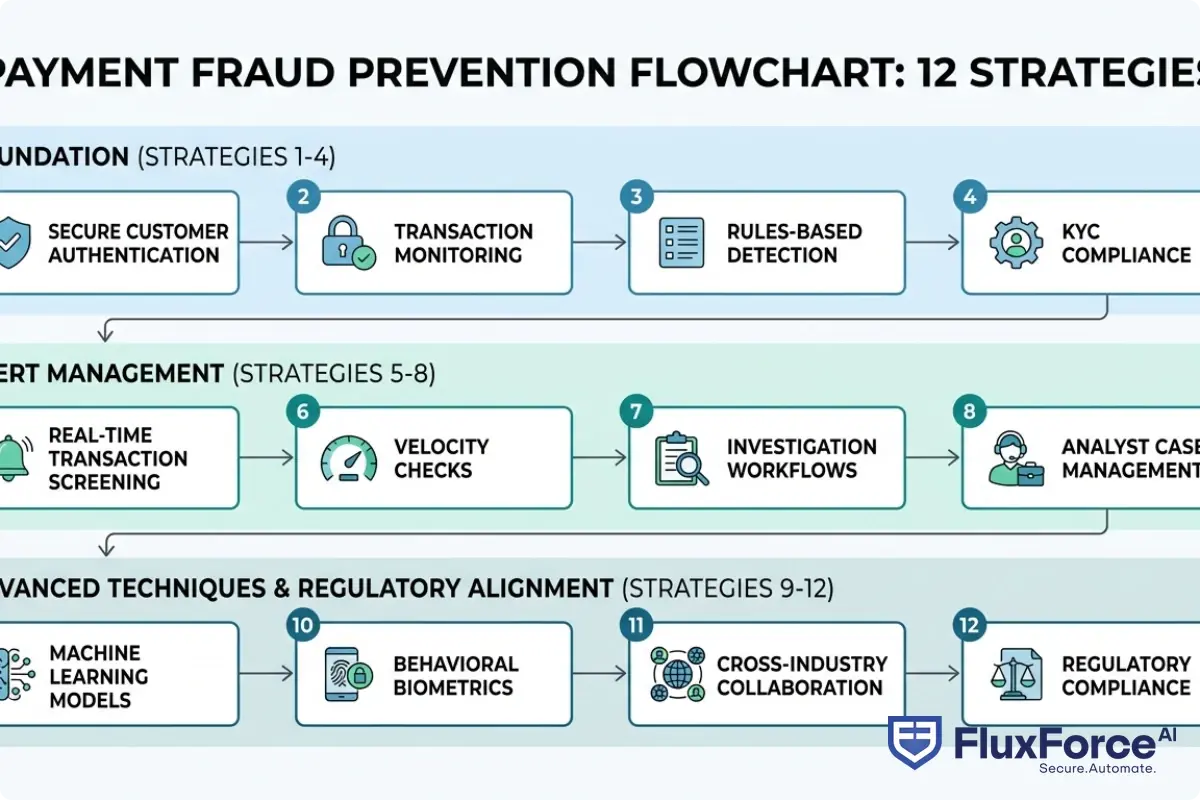

This post breaks down 12 specific approaches that financial teams are actually deploying in 2026. We cover AI fraud detection, the false positive problem that is burning out compliance teams, synthetic identity fraud, and how automated transaction monitoring changes the economics of the entire operation. Every strategy here has been field-tested, not just modeled.

Why Current Fraud Controls Are Breaking Down

The honest starting point is this: Nasdaq Verafin's 2024 Global Financial Crime Report estimated that fraud scams and bank fraud schemes caused $485.6 billion in losses globally in 2023, and the trajectory has not reversed. The detection tools most institutions rely on were designed for a fraud environment that no longer exists.

Fraudsters now operate at industrial scale and speed. Account takeover attempts execute in milliseconds, and card-testing bots run thousands of micro-transactions before a human analyst opens the review queue. Synthetic identity schemes show the opposite kind of discipline: the identities are cultivated for 12 to 24 months before a single fraudulent transaction occurs.

The False Positive Problem Is Worse Than You Think

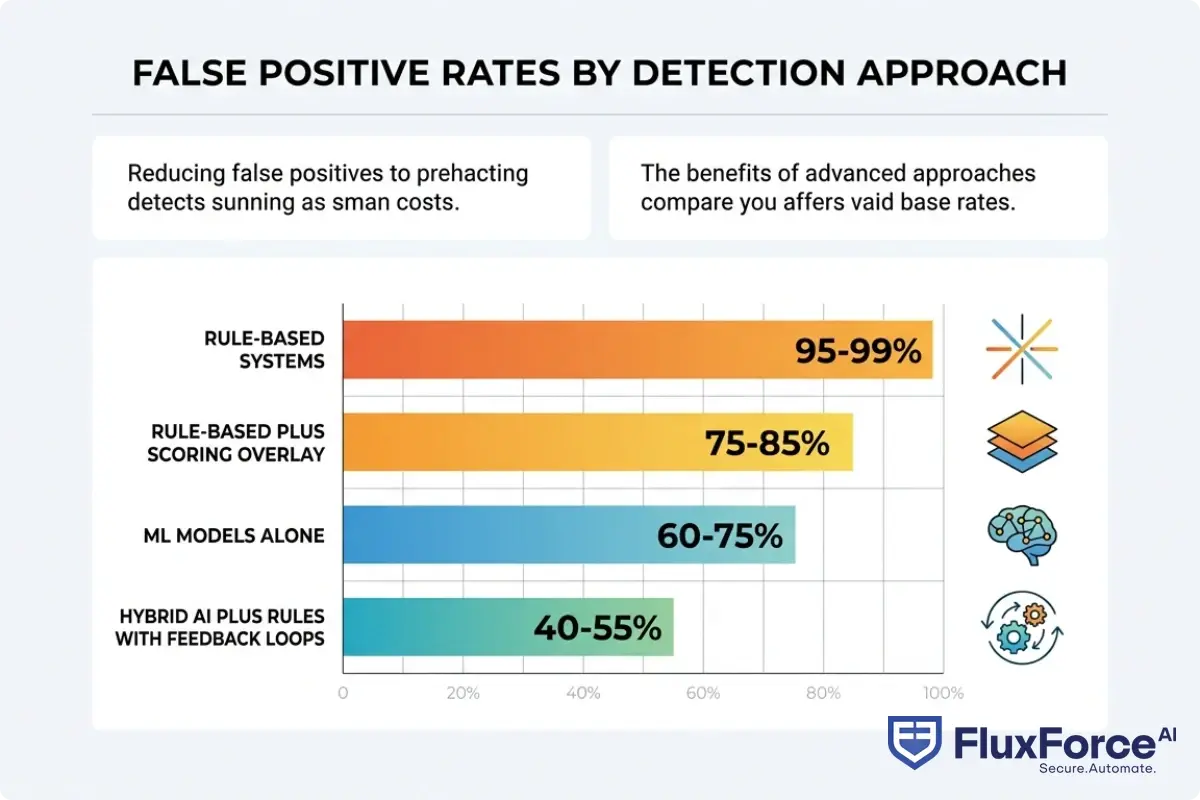

Here is the number that usually surprises risk officers: most transaction monitoring software produces a false positive rate between 95% and 99%. For every 100 alerts your team reviews, only 1 to 5 involve actual fraud.

This is not a minor inefficiency. A single analyst reviewing alerts can process roughly 15 to 20 cases per day. At 20 cases a day and a 98% false positive rate, 19.6 of those reviews, on average, involve legitimate customers. The cost compounds when you add customer friction, operational overhead, and the regulatory expectation that every alert requires documentation.

Fraud alert fatigue is the downstream consequence. Analysts stop looking carefully. Thresholds get loosened informally. Real fraud slips through not because the system missed it, but because the team had no bandwidth to act on the signal.

When Rule-Based Systems Hit Their Ceiling

Static rules work well when fraud follows predictable patterns. Velocity checks, geographic anomalies, and known BIN ranges catch a meaningful share of commodity fraud. The problem is that fraud patterns shift faster than rule update cycles.

AI vs. Traditional Fraud Detection: Key Differences Every Risk Officer Should Know covers this in depth, but the core issue is structural: rules require someone to have already seen the attack pattern to write the rule. Machine learning fraud detection finds the anomaly before the pattern has a name.

How AI Fraud Detection Actually Works

AI fraud detection is the application of machine learning and behavioral analytics to identify fraudulent transactions by learning statistical patterns rather than matching fixed rules. That definition is accurate, but it undersells how different the operational reality is from rules-based monitoring.

Machine Learning Fraud Detection: The Mechanics

Machine learning fraud detection models are trained on labeled transaction data. The model learns which combinations of features correlate with fraud versus legitimate activity: transaction amount, merchant category, time of day, device fingerprint, account age, velocity, location delta, and dozens more.

The critical difference from rules is generalization. A rule says "flag transactions over $5,000 from a new device." A model says "this combination of 47 features produces a 0.94 fraud probability, even though each individual feature looks normal."

Gradient boosted trees (XGBoost, LightGBM) dominate production fraud detection because they handle tabular financial data well and produce interpretable feature importance scores. Neural networks are gaining ground in sequence modeling for detecting account takeover through behavioral patterns across a session.

Real-Time Fraud Detection in Banking: What It Actually Requires

Real-time fraud detection in banking means a scoring decision must happen in under 100 milliseconds to avoid adding latency to the payment authorization flow. This creates real engineering constraints that shape every architectural choice.

Most production real-time fraud detection architectures separate the scoring layer from the case management layer. A lightweight model runs inline on the payment path. A heavier ensemble model runs asynchronously and can flag the transaction for analyst review after authorization, but cannot block it after the fact.

The tradeoff is deliberate. Banks running real-time fraud detection use a tiered scoring approach: hard blocks for very high-confidence fraud signals, step-up authentication for medium-risk transactions, and silent flags that feed analyst queues for low-risk transactions.
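The tiered approach reduces to a small routing function. A minimal sketch, assuming the inline model emits a fraud probability in [0, 1]; the cutoffs (0.90, 0.60, 0.30) are hypothetical, and the fourth `PASS` tier for scores below the flag cutoff is an added assumption rather than something the tiers above prescribe.

```python
from enum import Enum


class Action(Enum):
    BLOCK = "block"
    STEP_UP = "step_up_auth"
    SILENT_FLAG = "silent_flag"
    PASS = "pass"


# Hypothetical cutoffs for illustration only; calibrate them on your own data.
BLOCK_THRESHOLD = 0.90
STEP_UP_THRESHOLD = 0.60
FLAG_THRESHOLD = 0.30


def route(score: float) -> Action:
    """Map an inline model's fraud probability to a tiered action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score must be in [0, 1], got {score}")
    if score >= BLOCK_THRESHOLD:
        return Action.BLOCK        # very high confidence: hard block
    if score >= STEP_UP_THRESHOLD:
        return Action.STEP_UP      # medium risk: challenge the customer
    if score >= FLAG_THRESHOLD:
        return Action.SILENT_FLAG  # low risk: approve, but queue for review
    return Action.PASS             # assumed tier: approve with no follow-up
```

Because the function does no I/O and only compares a float against constants, it adds negligible latency to the authorization path; the expensive part is computing the score, not routing it.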

How Does AI Detect Fraud vs. Traditional Rules

The question "how does AI detect fraud" usually gets answered at a conceptual level. Here is what it looks like operationally:

- Feature engineering: Raw transaction data is transformed into 100-500 features per transaction (rolling averages, velocity counts, graph centrality scores, embedding distances)

- Model scoring: The feature vector passes through a trained model, producing a probability score

- Threshold routing: Scores route transactions to block, review queue, or pass

- Feedback loop: Analyst outcomes and confirmed fraud labels retrain the model on a weekly or monthly cycle
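To make the feature-engineering step concrete, here is a minimal sketch of a few velocity-style features computed from an account's own history. The `Txn` record, the one-hour window, and the feature names are hypothetical; production pipelines compute hundreds of such features, usually in a streaming feature store rather than by scanning a list.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Txn:
    account_id: str
    amount: float
    ts: datetime


def velocity_features(history: list[Txn], current: Txn,
                      window: timedelta = timedelta(hours=1)) -> dict[str, float]:
    """Compute illustrative features for `current` from the same account's prior transactions."""
    prior = [t for t in history
             if t.account_id == current.account_id and t.ts < current.ts]
    recent = [t for t in prior if current.ts - t.ts <= window]
    avg_amount = sum(t.amount for t in prior) / len(prior) if prior else 0.0
    return {
        # Number of this account's transactions inside the lookback window.
        "txn_count_window": float(len(recent)),
        # Total amount spent inside the lookback window.
        "amount_sum_window": sum(t.amount for t in recent),
        # Current amount relative to the account's historical mean (0 if no history).
        "amount_to_avg_ratio": current.amount / avg_amount if avg_amount else 0.0,
    }
```

Each feature is then one entry in the vector passed to the scoring model in the next step.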

The feedback loop is where most implementations fail. Without systematic label collection, model performance degrades 5-15% in precision over 12-18 months as fraud patterns evolve and training data goes stale.

Strategies 1-4: Building Your Detection Foundation

These four strategies address the infrastructure layer. Without them, the more advanced techniques do not hold.

Strategy 1: Deploy Automated Transaction Monitoring With Model-Based Scoring

Automated transaction monitoring is a baseline expectation in 2026. The differentiator is whether your system scores activity with static rules, configurable risk scores, or actual ML models. Transaction monitoring software that relies solely on rules will keep producing 95%+ false positive rates that drive fraud alert fatigue.

The first upgrade is adding a risk score to each alert based on historical patterns, even if the underlying detection still uses rules. This alone cuts analyst review time by 30-40% by surfacing the highest-probability alerts first.
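Surfacing the highest-probability alerts first is essentially a priority queue. A minimal sketch, assuming each rule-generated alert already carries a numeric risk score (how that score is produced is out of scope here); the `AlertQueue` class and alert IDs are hypothetical.

```python
import heapq
import itertools


class AlertQueue:
    """Analyst work queue that surfaces the highest-risk alert first.

    heapq is a min-heap, so scores are negated; a monotonically increasing
    counter breaks ties so equal-score alerts come out in arrival order.
    """

    def __init__(self) -> None:
        self._heap: list[tuple[float, int, str]] = []
        self._counter = itertools.count()

    def push(self, alert_id: str, risk_score: float) -> None:
        heapq.heappush(self._heap, (-risk_score, next(self._counter), alert_id))

    def pop(self) -> tuple[str, float]:
        neg_score, _, alert_id = heapq.heappop(self._heap)
        return alert_id, -neg_score

    def __len__(self) -> int:
        return len(self._heap)
```

The detection logic is untouched; only the order in which analysts see alerts changes, which is why this upgrade can ship before any model replaces the rules.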

Strategy 2: Layer AI Fraud Detection Software on Top of Existing Rules

The most practical path for most institutions is not to replace rule-based systems but to add a scoring layer above them. AI fraud detection software works well as an overlay because it can be validated in shadow mode before any production changes go live.

Run the ML model in parallel with your current rules for 60-90 days. Compare outputs against confirmed fraud cases and false positives. This gives you calibration data and a business case before you touch anything in production. See how agentic AI fraud agents cut false positives by 80% for a concrete example of shadow-mode validation in practice.
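Shadow-mode validation boils down to logging the model's score next to the live rule decision and comparing both against confirmed outcomes. A minimal sketch, assuming each transaction can be joined to a rule decision, a shadow score, and a confirmed-fraud label; the `ShadowResult` layout and the threshold you pass in are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ShadowResult:
    txn_id: str
    rule_flagged: bool     # decision made by the production rules
    model_score: float     # shadow model score, logged but not acted on
    confirmed_fraud: bool  # label from investigations or chargebacks


def precision_recall(flags: list[bool], labels: list[bool]) -> tuple[float, float]:
    tp = sum(f and y for f, y in zip(flags, labels))
    fp = sum(f and not y for f, y in zip(flags, labels))
    fn = sum(not f and y for f, y in zip(flags, labels))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def compare(results: list[ShadowResult], threshold: float) -> dict[str, tuple[float, float]]:
    """Precision and recall of the live rules vs. the shadow model on the same traffic."""
    labels = [r.confirmed_fraud for r in results]
    rule_flags = [r.rule_flagged for r in results]
    model_flags = [r.model_score >= threshold for r in results]
    return {
        "rules": precision_recall(rule_flags, labels),
        "model": precision_recall(model_flags, labels),
    }
```

Sweeping `threshold` over the 60-90 day shadow window gives you the calibration curve for the business case before anything changes in production.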

Strategy 3: Target Synthetic Identity Fraud at Onboarding

Synthetic identity fraud is the fastest-growing fraud category in financial services. Unlike account takeover, it is slow-burn by design. Fraudsters cultivate synthetic identities using combinations of real and fabricated personal data over 12-24 months before liquidating the account.

Detection at the transaction level is extremely difficult because synthetic identities behave like good customers right up until they do not. The only reliable intervention point is onboarding, through identity graph analysis that checks whether an identity element (SSN, phone, address, email) has unusual associations across multiple applicant records.

Detecting Synthetic Identity Fraud in Real-Time covers the identity graph approach in depth. The short version: single-applicant KYC is insufficient. You need to check identity element reuse across your entire customer base and against consortium data where available.
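A first cut at the cross-applicant check is an inverted index from identity element to applicant. A minimal sketch, assuming applications arrive as dictionaries with an `applicant_id` plus the four element types named above; the per-element limits are hypothetical, and a real identity graph would also normalize formats more carefully and incorporate consortium data.

```python
from collections import defaultdict

# Hypothetical limits: how many distinct applicants may share an element
# before it is flagged. A shared household address is far more normal than a
# shared SSN, so limits differ per element type.
DEFAULT_LIMITS = {"ssn": 1, "email": 1, "phone": 2, "address": 4}


def reused_identity_elements(applications: list[dict[str, str]],
                             limits: dict[str, int] = DEFAULT_LIMITS
                             ) -> dict[tuple[str, str], set[str]]:
    """Return identity elements linked to more distinct applicants than allowed."""
    # Inverted index: (element type, normalized value) -> applicant IDs.
    owners: dict[tuple[str, str], set[str]] = defaultdict(set)
    for app in applications:
        for element in limits:
            value = app.get(element)
            if value:
                key = (element, value.strip().lower())
                owners[key].add(app["applicant_id"])
    return {key: ids for key, ids in owners.items() if len(ids) > limits[key[0]]}
```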

Strategy 4: Implement Device Intelligence and Behavioral Biometrics at Login

Device fingerprinting and behavioral biometrics (typing cadence, mouse movement, touch pressure patterns) add a passive authentication layer that requires no friction for legitimate users. This catches account takeover attempts that have already passed credential checks.

The combination of device ID, IP reputation, behavioral biometrics, and session anomaly detection flags account takeover attempts with 85-90% accuracy before any fraudulent transaction hits your monitoring queue.

Strategies 5-8: Eliminating Alert Fatigue Without Creating Blind Spots

Alert fatigue is the biggest operational risk in fraud programs right now. These four strategies address it directly without trading false positive reduction for missed fraud.

Strategy 5: Reduce False Positives Through Contextual Customer Scoring

The false positive cost in fraud detection is not just analyst time. Research from FICO indicates customers who experience a false decline are 2-3 times more likely to reduce card usage or close their account within 90 days.

The fix for a high false positive rate is not to lower thresholds, which trades false positives for missed fraud. The fix is better features: specifically, adding customer-level context so each transaction is scored against that customer's own behavioral baseline, not just population averages. This is the core insight behind how to reduce false positives in AML, and it applies equally to payment fraud.
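The per-customer baseline idea can be illustrated with a single feature. A minimal sketch using a z-score of the transaction amount, assuming a hypothetical minimum of 10 prior transactions before the customer's own baseline is trusted (below that, it falls back to the population baseline); real systems apply the same idea across many features, not amount alone.

```python
from statistics import mean, pstdev


def amount_zscore(amount: float, customer_history: list[float],
                  population_history: list[float], min_history: int = 10) -> float:
    """Score a transaction amount against the customer's own baseline.

    Falls back to the population baseline when the customer has too little history.
    """
    baseline = customer_history if len(customer_history) >= min_history else population_history
    mu, sigma = mean(baseline), pstdev(baseline)
    if sigma == 0:
        # Degenerate baseline: any deviation at all is maximally unusual.
        return 0.0 if amount == mu else float("inf")
    return (amount - mu) / sigma
```

With illustrative inputs where a customer alternates $1,000 and $1,200 purchases and the population alternates $20 and $40, a $1,300 purchase scores 2 standard deviations above the customer's own norm but 127 above the population norm. That gap is exactly what population-level thresholds turn into false positives.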

Strategy 6: Tune Models on Your Own Data, Not Vendor Defaults

AI fraud detection software ships with default models trained on aggregate industry data. Those defaults perform reasonably well out of the box, but they are not calibrated to your customer base, your merchant mix, or your specific fraud patterns.

The first priority after deploying any fraud ML system is retraining on your own labeled data. Even six months of historical transactions with confirmed fraud labels significantly improves precision. False positive costs drop measurably once the model understands what "normal" looks like for your specific population.

Strategy 7: Automate Case Triage, Not Just Detection

Most institutions automate detection but leave case management entirely manual. This is where the throughput bottleneck actually lives. An alert that takes 45 minutes of analyst time to resolve is expensive regardless of whether the detection was AI-powered.

Automated case management pre-populates relevant context (account history, prior alerts, related transactions), routes cases based on analyst specialization, and auto-closes low-complexity cases that meet defined criteria. If 35% of your alert queue meets clear auto-close criteria for false positives, automating that disposition frees analysts for the cases that genuinely require investigation.
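Auto-close logic works best as explicit, reviewable criteria rather than a black box, because every disposition still has to be documented. A minimal sketch, assuming hypothetical case fields and cutoffs (score at most 0.10, amount at most $500, no prior confirmed fraud on the account, and a match to an established legitimate pattern); your own criteria should come from analyzing which alert types historically always clear.

```python
from dataclasses import dataclass


@dataclass
class Case:
    case_id: str
    risk_score: float
    amount: float
    prior_confirmed_fraud: int        # confirmed fraud cases on this account
    matches_known_good_pattern: bool  # e.g. a long-standing recurring payee (hypothetical)


# Hypothetical auto-close cutoffs; document and approve them so each
# automated disposition remains auditable.
MAX_AUTO_CLOSE_SCORE = 0.10
MAX_AUTO_CLOSE_AMOUNT = 500.0


def triage(case: Case) -> str:
    """Return 'auto_close' only when every criterion holds; otherwise send to an analyst."""
    if (case.risk_score <= MAX_AUTO_CLOSE_SCORE
            and case.amount <= MAX_AUTO_CLOSE_AMOUNT
            and case.prior_confirmed_fraud == 0
            and case.matches_known_good_pattern):
        return "auto_close"
    return "analyst_review"
```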

Reducing False Positives: Rule-Based Systems vs. AI-Driven Solutions has a practical cost comparison that applies directly to case management automation decisions.

Strategy 8: Build a Feedback Loop That Analysts Will Actually Use

Model performance without feedback loops degrades 5-15% in precision over 12-18 months. Most feedback loops fail for procedural rather than technical reasons: label capture adds 30-60 seconds to every analyst disposition, and that friction compounds across thousands of cases per month.

The practical solution is recording analyst disposition (fraud confirmed / not fraud / needs escalation) directly in the case management interface with a single click, then exporting those labels to your ML pipeline on a weekly schedule. Weekly retraining is practical for most transaction volumes. Daily retraining is feasible at scale but adds operational overhead that few teams can sustain.
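The one-click disposition plus weekly export can be sketched in a few lines. A minimal sketch, assuming dispositions are stored as `(txn_id, disposition, decided_at)` tuples and that the ML pipeline consumes a CSV with a binary label; the three disposition values mirror the ones named above, while the function name, column names, and file format are hypothetical.

```python
import csv
import io
from collections.abc import Iterable
from datetime import datetime, timedelta
from enum import Enum


class Disposition(Enum):
    FRAUD_CONFIRMED = "fraud_confirmed"
    NOT_FRAUD = "not_fraud"
    NEEDS_ESCALATION = "needs_escalation"


def export_weekly_labels(dispositions: Iterable[tuple[str, Disposition, datetime]],
                         week_start: datetime) -> str:
    """Serialize one week's final dispositions as CSV training labels.

    Escalated cases are skipped because they do not have a final label yet.
    """
    week_end = week_start + timedelta(days=7)
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["txn_id", "label"])
    for txn_id, disp, decided_at in dispositions:
        if not week_start <= decided_at < week_end:
            continue
        if disp is Disposition.NEEDS_ESCALATION:
            continue
        writer.writerow([txn_id, int(disp is Disposition.FRAUD_CONFIRMED)])
    return buf.getvalue()
```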

Strategies 9-12: Advanced Techniques for 2026

These strategies require more investment but address threats that the foundational layer systematically misses.

Strategy 9: Network Graph Analysis for Fraud Ring Detection

Individual transaction scoring misses coordinated fraud rings where each transaction looks clean in isolation. Network graph analysis maps relationships between accounts, devices, IP addresses, merchants, and beneficiaries to identify clusters of connected activity.

This is the primary technique for synthetic identity fraud rings and organized account takeover operations. When 12 accounts share a device ID or originate from the same IP subnet, individual scoring may clear all 12. Graph analysis flags the cluster as a structural anomaly regardless of individual scores.
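Connected-component analysis is the simplest form of this idea. A minimal sketch using union-find, assuming you can extract `(account, shared attribute)` pairs such as device IDs or IP subnets; the attribute naming scheme and the minimum cluster size of 3 are hypothetical. Production graph analytics typically add edge weights, time windows, and centrality measures on top of plain components.

```python
from collections import defaultdict


class UnionFind:
    def __init__(self) -> None:
        self.parent: dict[str, str] = {}

    def find(self, x: str) -> str:
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a: str, b: str) -> None:
        ra, rb = self.find(a), self.find(b)
        if ra != rb:
            self.parent[ra] = rb


def account_clusters(links: list[tuple[str, str]], min_size: int = 3) -> list[set[str]]:
    """Group accounts connected through shared attributes.

    Accounts and attributes become nodes of one graph (prefixed so IDs cannot
    collide); components with at least `min_size` accounts are returned.
    """
    uf = UnionFind()
    accounts: set[str] = set()
    for account, attribute in links:
        accounts.add(account)
        uf.union("acct:" + account, "attr:" + attribute)
    groups: dict[str, set[str]] = defaultdict(set)
    for account in accounts:
        groups[uf.find("acct:" + account)].add(account)
    return [g for g in groups.values() if len(g) >= min_size]
```

Note that `a1` and `a3` in a chain like `a1 -> device:D1 <- a2 -> subnet <- a3` never share an attribute directly, yet end up in the same cluster; that transitive linkage is what individual scoring cannot see.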

Strategy 10: Real-Time Consortium Intelligence Sharing

Banks running real-time fraud detection increasingly share signals across consortium networks. When a card-testing attack hits one institution, consortium members receive signals within minutes. This is qualitatively different from blacklist sharing, which operates with hours or days of lag.

The constraint is data governance. Contributing to and consuming from consortium intelligence requires legal review of what transaction data can be shared and under what framework. The FATF guidance on information sharing provides the international framework, though domestic regulatory requirements vary by jurisdiction.

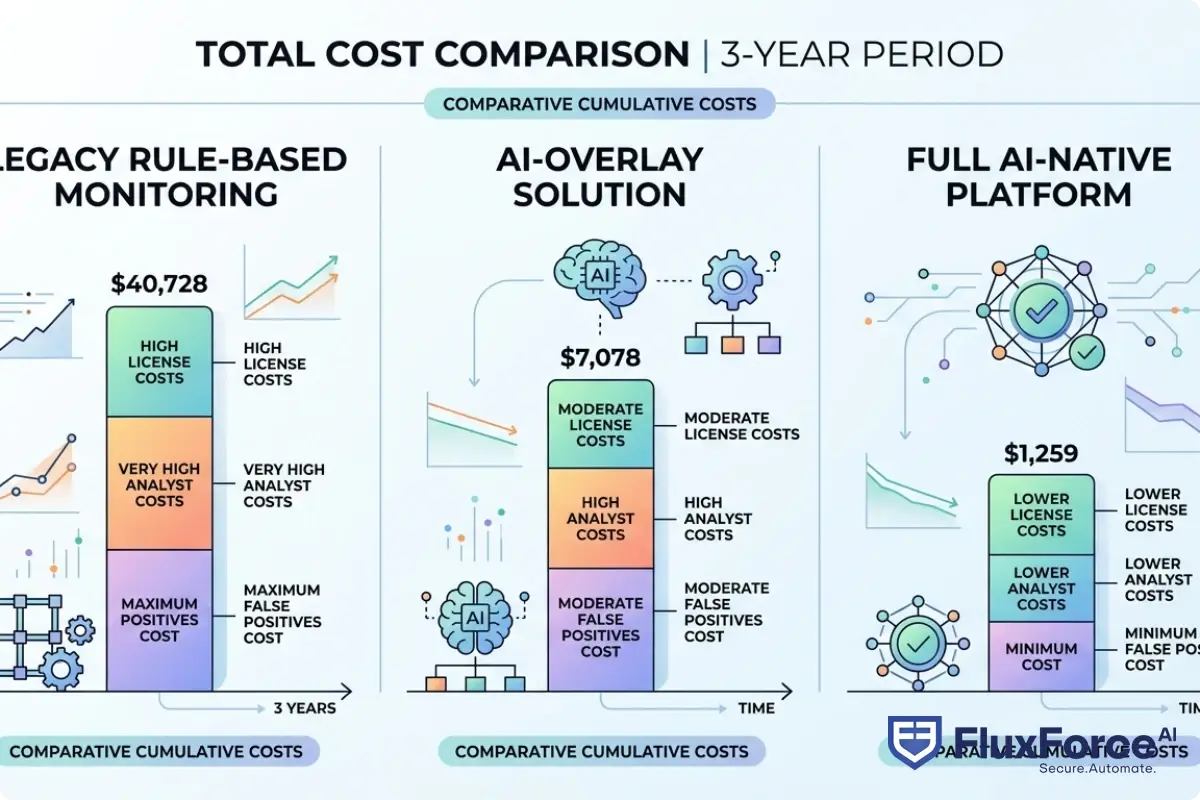

Strategy 11: Evaluate Transaction Monitoring Software on Total Cost

The Sardine vs. Unit21 evaluation question comes up constantly in risk teams shopping for transaction monitoring software. The right comparison is not about feature lists alone but about total transaction monitoring cost: implementation, customization effort, analyst training time, integration overhead, and ongoing model maintenance.

Transaction monitoring cost at enterprise scale includes the hidden cost of custom rule development (typically $150-300/hour for specialized fraud engineering time) and the opportunity cost of analyst time spent on false positives. A system that costs 30% more in license fees but cuts false positive rate by 50% typically has better total economics over a 3-year horizon.
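Whether a pricier system wins depends on the ratio between license fees and false positive review cost, which a small model makes explicit. A minimal sketch; every dollar figure in the tests and usage is hypothetical, and the helper names are illustrative.

```python
def total_cost(one_time: float, annual_license: float, annual_fp_review: float,
               annual_other: float = 0.0, years: int = 3) -> float:
    """Multi-year cost of a monitoring system.

    one_time: implementation, integration, and analyst training.
    annual_other: model maintenance and custom rule development.
    """
    return one_time + years * (annual_license + annual_fp_review + annual_other)


def license_premium_pays_off(annual_license: float, annual_fp_review: float,
                             license_uplift: float, fp_reduction: float) -> bool:
    """True if yearly false positive savings exceed the extra yearly license fee."""
    return fp_reduction * annual_fp_review > license_uplift * annual_license
```

With a hypothetical $200,000 implementation, a $400,000 annual license, and $765,000 a year in false positive review, the baseline costs $3,695,000 over three years; a system at $520,000 a year (30% more) that halves review cost to $382,500 comes to $2,907,500. The second helper shows the limit of the rule of thumb: the premium only pays off while half of the review cost exceeds 30% of the license fee.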

For insurance and regulated industries, the selection criteria also include how the system handles claims fraud patterns, which are structurally different from payment fraud. Policy Payment Security: Secure Payment Gateway Strategy for CISOs in Insurance covers the insurance-specific context.

Strategy 12: Align Fraud Controls With Regulatory Expectations Proactively

Regulatory scrutiny of fraud programs has increased significantly since 2023. The Anti-Money Laundering Act of 2020, which FinCEN is implementing, explicitly addresses technology standards for transaction monitoring, and examiners are asking increasingly specific questions about model governance, false positive rate documentation, and feedback loop procedures.

Proactive alignment means documenting your model's assumptions, training data, validation methodology, and performance metrics before an examination occurs. Institutions that produce a model risk management framework for their fraud controls consistently fare better in regulatory reviews than those reconstructing documentation under examination pressure.

The Real Economics of Transaction Monitoring in 2026

Transaction monitoring cost analysis rarely accounts for the full picture. License fees and implementation costs are visible. The cost of analyst time, customer friction from false positives, and regulatory remediation from inadequate documentation are not in most budget models.

Direct vs. Indirect Transaction Monitoring Costs

A mid-sized bank processing 2 million transactions per month with a 96% false positive rate and 15,000 monthly alerts clears 14,400 of those alerts as legitimate. At roughly 3 minutes of analyst time per cleared alert, that is about 750 analyst-hours per month. At a fully-loaded analyst cost of $85/hour, that is $63,750 per month in pure waste, before counting the compliance overhead of documenting each disposition.
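The arithmetic is worth parameterizing so you can substitute your own alert volume, false positive rate, handling time, and loaded cost. A minimal sketch; the function name is hypothetical, and the inputs in the tests are the ones from the example above.

```python
def monthly_false_positive_cost(alerts_per_month: int, false_positive_rate: float,
                                minutes_per_alert: float, hourly_cost: float) -> dict[str, float]:
    """Analyst time and money spent each month on alerts that clear as legitimate."""
    false_alerts = alerts_per_month * false_positive_rate
    analyst_hours = false_alerts * minutes_per_alert / 60
    return {
        "false_alerts": false_alerts,
        "analyst_hours": analyst_hours,
        "cost": analyst_hours * hourly_cost,
    }
```

Handling time dominates the result. If each review instead took the 24 to 32 minutes implied by 15 to 20 cases per analyst-day (assuming an 8-hour day), the same volume would cost roughly eight to ten times more, so treat the 3-minute figure as a lower bound.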

The False Positive Cost in Fraud: What the Numbers Show

The false positive cost in fraud extends beyond operations. A widely cited Javelin Strategy & Research estimate put annual US false-decline losses at roughly $118 billion in lost transaction volume. That figure gets cited frequently, but the more actionable number is the customer-level abandonment rate: roughly 30% of customers who experience a false decline in a given quarter will reduce engagement with that payment product.

This is where the business case for investing in AI fraud detection software becomes straightforward. A 40% reduction in false positives on a portfolio with $20M in annual false-decline losses saves $8M annually. That number is larger than the license cost for most enterprise fraud platforms.


Conclusion

Payment fraud prevention strategies in 2026 require layering AI fraud detection on top of procedural controls, not replacing one with the other. The institutions with the best outcomes have addressed false positive rates systematically, built real feedback loops for their detection models, and treated synthetic identity fraud as an onboarding problem rather than a transaction monitoring problem.

Start with the foundational layer: automated transaction monitoring with model scoring, and synthetic identity detection at onboarding. Then address alert fatigue through contextual scoring and automated case management. The advanced techniques in strategies 9-12 produce significantly better results once that foundation is in place.

If you want to see how AI-powered fraud detection performs against your institution's specific transaction mix, the right next step is a proof-of-concept on your own data, not a vendor demo on sanitized datasets.

Frequently Asked Questions

What is AI fraud detection?

AI fraud detection is the use of machine learning models and behavioral analytics to identify fraudulent transactions by learning statistical patterns in historical data rather than matching fixed rules. Unlike rule-based systems, AI models can generalize to new fraud patterns they have not explicitly been programmed to recognize, and they produce a continuous probability score rather than a binary flag.

How does AI detect fraud in banking?

AI detects fraud in banking by scoring each transaction against a model trained on hundreds of behavioral features: transaction amount, merchant category, device fingerprint, location delta, account age, velocity across multiple timeframes, and behavioral biometrics. The model produces a fraud probability score in under 100 milliseconds, which routes the transaction to block, step-up authentication, or the analyst review queue based on configurable thresholds.

What is machine learning fraud detection?

Machine learning fraud detection uses algorithms, most commonly gradient boosted trees or neural networks, trained on labeled transaction data to distinguish fraudulent from legitimate activity. The key advantage over rules is that ML models identify novel combinations of features that collectively signal fraud even when no individual feature triggers a rule. Models require periodic retraining as fraud patterns evolve.

What is real-time fraud detection, and how do banks use it?

Real-time fraud detection is the ability to score a transaction for fraud risk and make a block or pass decision within the payment authorization window, typically under 100 milliseconds. Banks use it by running a lightweight scoring model inline on the payment path, with a secondary asynchronous model that feeds analyst review queues for medium-risk transactions. Hard blocks are reserved for very high-confidence fraud signals to avoid false declines on legitimate transactions.

How is AI fraud detection software different from rule-based systems?

AI fraud detection software uses trained machine learning models to score transactions, whereas rule-based systems apply manually written if-then logic. The practical difference is that AI software can detect fraud patterns that were never explicitly programmed, produces fewer false positives when calibrated on institution-specific data, and improves over time through feedback loops. Rule-based systems require manual updates every time a new fraud pattern emerges.

How do you reduce false positives in transaction monitoring?

Reducing false positives in transaction monitoring requires moving from population-level rules to customer-level behavioral baselines. Scoring each transaction against that specific customer's historical patterns rather than global thresholds is the most effective single intervention. Adding automated case triage that auto-closes low-complexity false positives and retraining ML models on your own labeled data also materially reduce the false positive rate without increasing missed fraud.

What is synthetic identity fraud, and how is it detected?

Synthetic identity fraud involves building a fictitious identity using a mix of real and fabricated data elements, typically a real Social Security number combined with a fabricated name and address. Fraudsters cultivate the identity as a normal customer for 12-24 months before liquidating the account. Detection requires identity graph analysis at onboarding that checks whether identity elements (SSN, phone, email, address) appear in unusual combinations across multiple applicant records.
