
Fraud prevention ROI measurement is one of the hardest finance conversations in regulated industries, and most CFOs are having it with the wrong numbers. The typical fraud budget justification leans on loss prevention totals and incident counts, both of which undercount the real value of a modern prevention stack. This guide gives CFOs and finance leaders a structured framework for quantifying fraud technology returns across direct savings, compliance cost avoidance, and operational efficiency gains. Whether you're evaluating a first-generation deployment or justifying a platform migration, the framework applies.

Why Traditional Fraud Budgets Fail the ROI Test

The problem starts with how fraud losses get recorded. Most financial institutions track confirmed fraud write-offs but miss the adjacent costs: analyst hours spent reviewing false positives, regulatory fines when prevention controls prove inadequate, and customer churn after an account is compromised. When these costs stay invisible, the benefit side of the ROI calculation looks artificially small, so prevention investments appear expensive relative to their actual return.

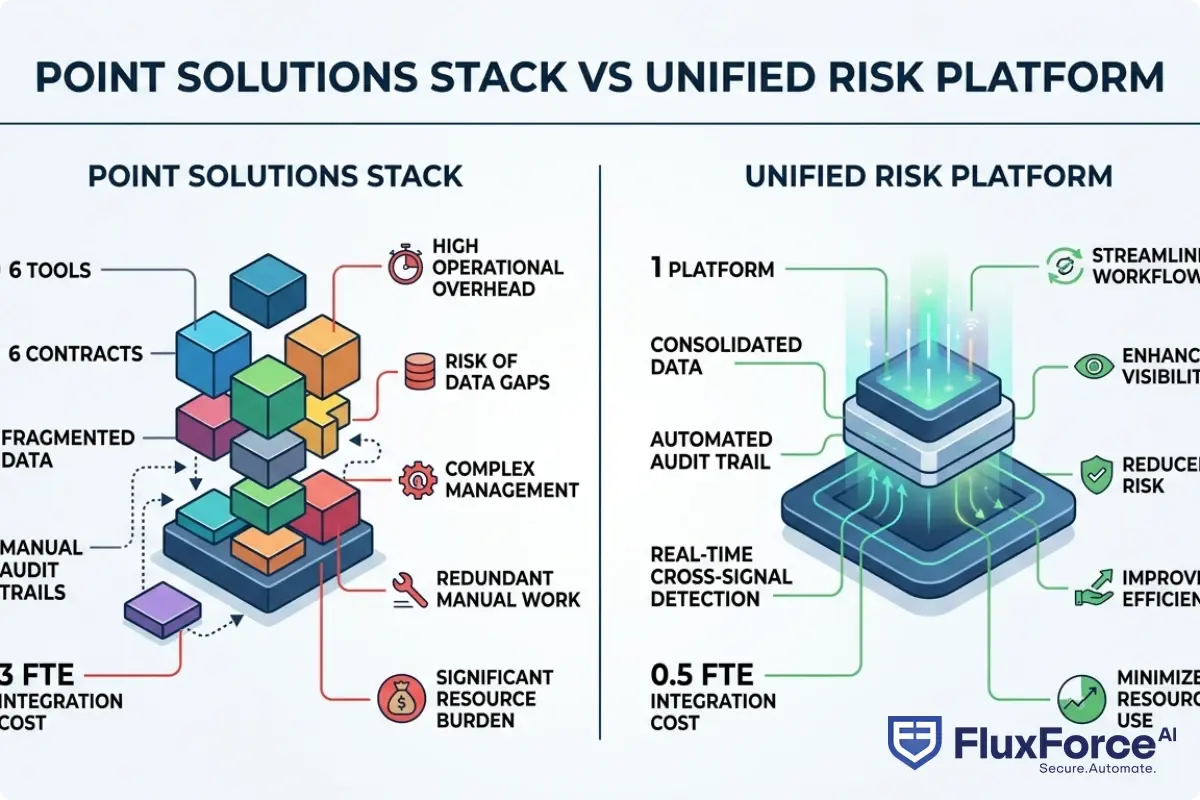

The point-solutions-versus-platform debate in financial services makes this worse. A bank running six separate vendor tools for transaction monitoring, identity verification, sanctions screening, device intelligence, behavioral analytics, and case management is paying six licensing fees, six integration maintenance costs, and six sets of compliance documentation overhead. Each tool has its own alert queue, its own data model, and its own support contract. CFOs rarely see the full cost until a vendor consolidation project forces an audit.

The Hidden Costs Most CFOs Miss

- Integration tax: Every point solution requires API maintenance, schema versioning, and break-fix work when vendors push updates. A conservative estimate puts this at 0.5 FTE per integrated tool annually for mid-sized institutions.

- Analyst context-switching: Fraud analysts toggling between five dashboards are slower and miss cross-signal patterns that a unified view surfaces immediately.

- Regulatory documentation burden: Each tool needs its own model governance documentation under SR 11-7 and its own third-party risk documentation under DORA and equivalent frameworks. Multiplying this across six vendors adds weeks of compliance team time per audit cycle.

What the Full ROI Calculation Must Include

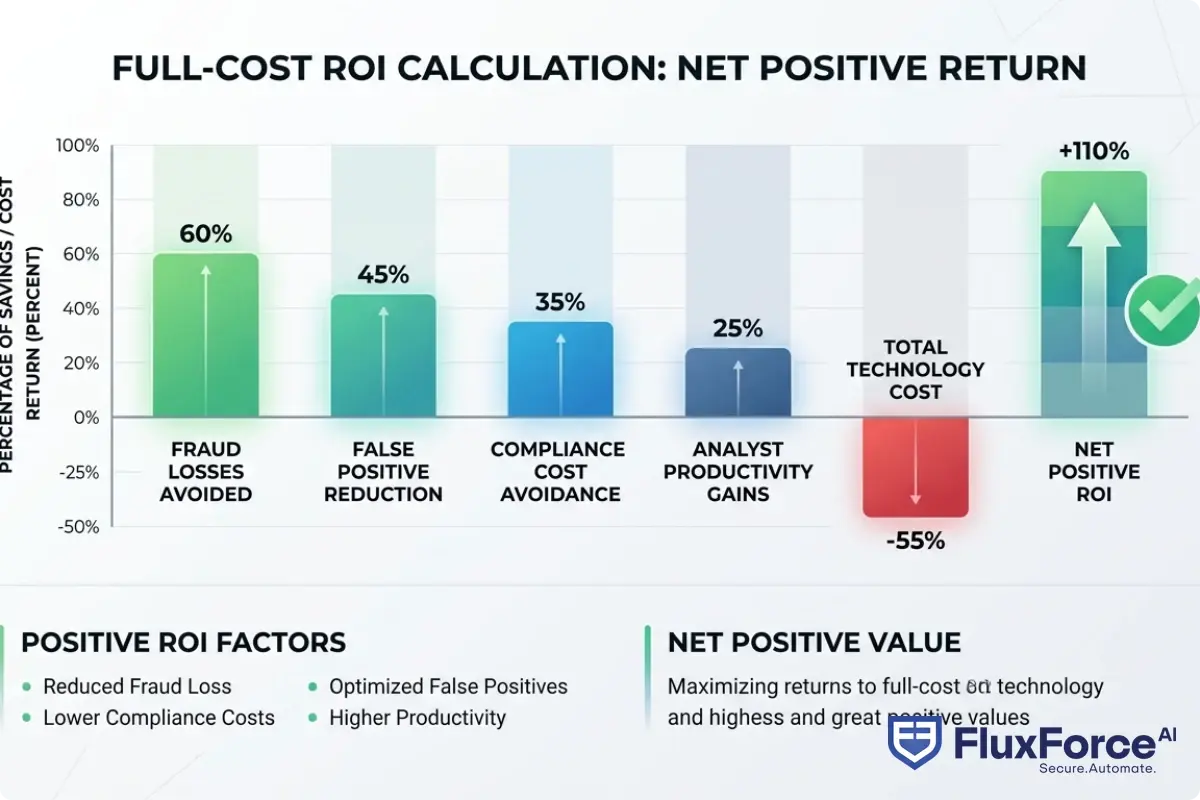

A complete fraud prevention ROI measurement must include: fraud losses avoided, false positive operational cost reduction, compliance fine avoidance, analyst productivity gains, and the technology cost itself (licensing plus integration plus maintenance). Miss any one of these categories and the number is wrong.
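As a minimal sketch, the five categories can be combined into a single ROI figure. The class and field names are hypothetical, and the formula assumes the standard definition of ROI as net benefit divided by the technology cost. Fine avoidance is assumed to be entered as an expected value rather than a worst case.

```python
from dataclasses import dataclass


@dataclass
class AnnualFraudValue:
    """Hypothetical annual inputs, in dollars, one per ROI category."""
    fraud_losses_avoided: float
    false_positive_cost_reduction: float
    compliance_fine_avoidance: float   # expected value, not worst case
    analyst_productivity_gain: float
    technology_cost: float             # licensing + integration + maintenance


def fraud_prevention_roi(v: AnnualFraudValue) -> float:
    """Return ROI as a fraction: (total benefit - technology cost) / technology cost."""
    if v.technology_cost <= 0:
        raise ValueError("technology_cost must be positive")
    total_benefit = (
        v.fraud_losses_avoided
        + v.false_positive_cost_reduction
        + v.compliance_fine_avoidance
        + v.analyst_productivity_gain
    )
    return (total_benefit - v.technology_cost) / v.technology_cost
```

With hypothetical annual inputs of $2.0M losses avoided, $400K false-positive savings, $250K expected fine avoidance, $350K productivity gains, and a $1.0M technology cost, the function returns 2.0 (a 200% ROI). Counting losses avoided alone would give 1.0, which is how an incomplete calculation understates the return.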

The CFO's Framework for Fraud Prevention ROI Measurement

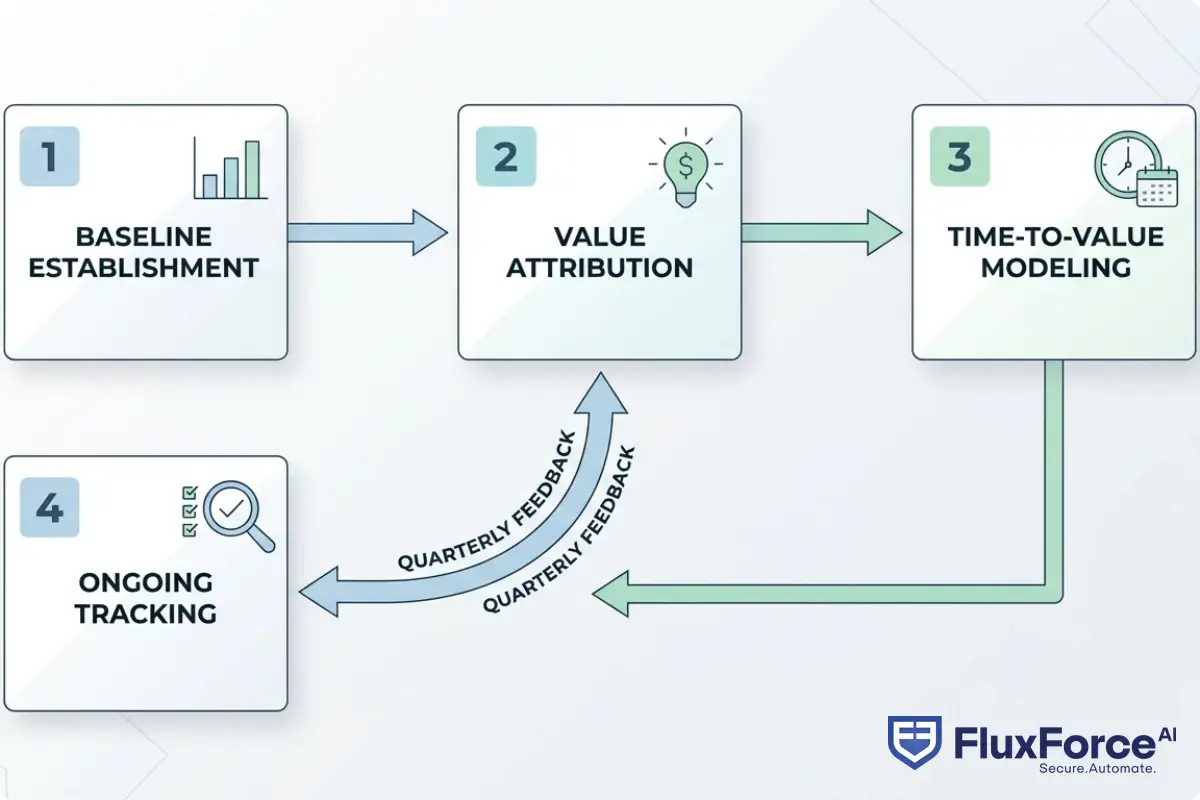

A rigorous fraud prevention ROI measurement framework has four components: baseline establishment, value attribution, time-to-value modeling, and ongoing tracking. The framework applies whether you're evaluating a pure-play fraud tool or a full AI security operations platform.

Step 1: Establish a Fraud Loss Baseline

Before you can measure improvement, you need a defensible baseline. This means total confirmed fraud losses (chargebacks, write-offs, insurance claims), plus an estimate of unreported fraud. Industry benchmarks from FATF's guidance on financial crime detection suggest 20-30% of fraud goes undetected or unreported in traditional monitoring setups. Use 12 months of data, adjusted for any major portfolio changes.
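The adjustment for unreported fraud is easy to get wrong. If a share u of all fraud goes undetected, confirmed losses represent only (1 - u) of the total, so the baseline is confirmed losses divided by (1 - u), not multiplied by (1 + u). A small sketch, with hypothetical function names and the 20-30% range above as default bounds:

```python
def estimated_total_fraud(confirmed_losses: float, undetected_share: float) -> float:
    """Gross up confirmed losses for fraud that was never detected or reported.

    undetected_share is the assumed fraction of *total* fraud that goes unseen.
    """
    if not 0.0 <= undetected_share < 1.0:
        raise ValueError("undetected_share must be in [0, 1)")
    return confirmed_losses / (1.0 - undetected_share)


def baseline_range(confirmed_losses: float,
                   low: float = 0.20,
                   high: float = 0.30) -> tuple[float, float]:
    """Return (low, high) baseline estimates for a range of undetected shares."""
    return (estimated_total_fraud(confirmed_losses, low),
            estimated_total_fraud(confirmed_losses, high))
```

For $8M in confirmed losses, the 20-30% range gives a baseline of $10M to roughly $11.4M. Multiplying by 1.2-1.3 instead would give $9.6M to $10.4M and understate the baseline.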

Step 2: Attribute Value Across the Full Stack

An AI security operations platform produces returns across multiple cost centers simultaneously. The attribution model should separate:

- Direct loss prevention: Fraud blocked at transaction time, valued at the face value of blocked transactions later confirmed as fraud, minus the cost of reviewing false positives

- Compliance cost avoidance: Fines avoided, examination prep cost reduction, and audit response time savings

- Operational efficiency: Alert volume reduction, straight-through processing rate improvement, and mean time to investigate reduction
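One way to keep the three cost centers separate is to compute each from its own inputs. The sketch below assumes hypothetical field names and a single per-alert review cost. It also assumes that investigation_hours_saved excludes time already counted through eliminated alerts; otherwise the operational figure double-counts.

```python
from dataclasses import dataclass


@dataclass
class AttributionInputs:
    # Direct loss prevention (hypothetical annual inputs)
    blocked_confirmed_fraud_value: float  # face value of blocked txns confirmed as fraud
    false_positive_alerts: int
    review_cost_per_alert: float
    # Compliance cost avoidance
    expected_fines_avoided: float
    exam_prep_savings: float
    audit_response_savings: float
    # Operational efficiency
    alerts_eliminated: int
    investigation_hours_saved: float      # excluding hours on eliminated alerts
    loaded_hourly_cost: float


def attribute_value(x: AttributionInputs) -> dict[str, float]:
    """Split annual value into the three cost centers and a total."""
    direct = (x.blocked_confirmed_fraud_value
              - x.false_positive_alerts * x.review_cost_per_alert)
    compliance = x.expected_fines_avoided + x.exam_prep_savings + x.audit_response_savings
    operational = (x.alerts_eliminated * x.review_cost_per_alert
                   + x.investigation_hours_saved * x.loaded_hourly_cost)
    return {
        "direct_loss_prevention": direct,
        "compliance_cost_avoidance": compliance,
        "operational_efficiency": operational,
        "total": direct + compliance + operational,
    }
```

Reporting the three lines separately, rather than only the total, lets finance teams challenge each assumption on its own.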

Step 3: Model Time-to-Value Realistically

The honest answer is that ROI timelines vary by deployment model. A cloud-native AI security operations platform with pre-built connectors can show measurable alert reduction within 60-90 days. A custom on-premise build might take 18 months before the baseline is even stable. CFOs should require vendors to provide time-to-value data from reference customers, not just projected estimates from a sales deck.
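A simple way to compare deployment models is to find the payback month under each. This sketch assumes benefits start at go-live and ramp linearly to full value over a fixed number of months; the ramp shape and every input are hypothetical, and reference-customer data should replace them.

```python
from typing import Optional


def payback_month(upfront_cost: float,
                  monthly_run_cost: float,
                  full_monthly_benefit: float,
                  go_live_month: int,
                  ramp_months: int,
                  horizon_months: int = 36) -> Optional[int]:
    """Return the first month (1-indexed) where cumulative net benefit is >= 0.

    Benefit is zero before go_live_month, then ramps linearly to
    full_monthly_benefit over ramp_months. Returns None if payback
    does not occur within the horizon.
    """
    if ramp_months < 1:
        raise ValueError("ramp_months must be at least 1")
    cumulative = -upfront_cost
    for month in range(1, horizon_months + 1):
        if month < go_live_month:
            benefit = 0.0
        else:
            months_live = month - go_live_month + 1
            benefit = full_monthly_benefit * min(1.0, months_live / ramp_months)
        cumulative += benefit - monthly_run_cost
        if cumulative >= 0:
            return month
    return None
```

With hypothetical inputs, a cloud deployment that goes live in month 3, `payback_month(250_000, 50_000, 200_000, go_live_month=3, ramp_months=3)`, pays back in month 6. An on-premise build that goes live in month 18, `payback_month(2_000_000, 80_000, 200_000, go_live_month=18, ramp_months=6)`, returns None: no payback within 36 months.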

Step 4: Build In Ongoing Measurement Infrastructure

ROI isn't a one-time calculation. Build quarterly review checkpoints into the vendor contract, tied to agreed metrics: false positive rate, investigation time per alert, confirmed fraud rate by channel, and regulatory finding count. How Agentic AI Fraud Agents Cut False Positives by 80% covers the operational impact of AI-driven false positive reduction in detail, including the measurement approach institutions use to track progress.
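The quarterly checkpoints can be encoded so that a missed target is flagged mechanically rather than debated. In this sketch the class, field names, and targets are hypothetical, and "false positive rate" uses the alert-level definition common in fraud operations (share of alerts closed as not fraud), which differs from the statistical false positive rate.

```python
from dataclasses import dataclass, field


@dataclass
class QuarterlyMetrics:
    alerts: int
    alerts_closed_not_fraud: int
    total_investigation_minutes: float
    regulatory_findings: int
    # channel -> (confirmed fraud transactions, total transactions)
    fraud_by_channel: dict[str, tuple[int, int]] = field(default_factory=dict)

    @property
    def false_positive_rate(self) -> float:
        """Share of alerts closed as not fraud (alert-level definition)."""
        return self.alerts_closed_not_fraud / self.alerts if self.alerts else 0.0

    @property
    def minutes_per_alert(self) -> float:
        return self.total_investigation_minutes / self.alerts if self.alerts else 0.0

    def confirmed_fraud_rate(self, channel: str) -> float:
        fraud, total = self.fraud_by_channel.get(channel, (0, 0))
        return fraud / total if total else 0.0


def missed_targets(m: QuarterlyMetrics, max_fp_rate: float,
                   max_minutes_per_alert: float, max_findings: int) -> list[str]:
    """Return the names of contract metrics this quarter failed to meet."""
    missed = []
    if m.false_positive_rate > max_fp_rate:
        missed.append("false_positive_rate")
    if m.minutes_per_alert > max_minutes_per_alert:
        missed.append("minutes_per_alert")
    if m.regulatory_findings > max_findings:
        missed.append("regulatory_findings")
    return missed
```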

How a Unified Risk Platform Changes the ROI Equation

A unified risk platform is a single technology layer that consolidates fraud detection, identity verification, compliance screening, and case management into one data model and one workflow engine. The ROI difference versus point solutions is substantial, and it shows up in three places.

First, data latency disappears. In a point solutions stack, transaction signals from one tool take time to propagate to another tool's risk model. In a unified risk platform, every signal is available to every model in real time. This alone can improve detection rates by 15-25% on cross-channel fraud patterns that fragmented tools simply cannot see.

Second, analyst productivity jumps. A single case management interface with full context (transaction history, identity signals, device intelligence, prior alerts) cuts investigation time by 40-60% compared to analysts building context manually across multiple screens. A combined fraud, compliance, and identity platform means one login, one workflow, and one audit trail.

Third, compliance documentation becomes a byproduct, not a project. When all controls run on one platform with a shared data model, producing the documentation that the EU AI Act requires, or that frameworks like NIST's AI Risk Management Framework call for, becomes an export function, not a manual exercise.

Vendor Consolidation in Fintech: What the Numbers Show

The financial case for vendor consolidation in fintech is strongest when you include total cost of ownership over a 3-year horizon. Licensing costs alone typically fall 20-35% when consolidating from five or more point solutions to a single platform. Add integration maintenance savings and the reduction is larger. The less visible gain is risk reduction: fewer integration points mean fewer attack surfaces and fewer integration failures that can take down a critical control at the worst possible time.

Point Solutions vs Platform: What Financial Institutions Miss in the Comparison

In financial services, the point-solutions-versus-platform comparison is too often made at the feature level. Security teams compare individual detection capabilities and conclude that a specialized vendor usually beats a platform on any single dimension. This is generally true. The real question is whether that marginal detection improvement is worth the operational cost of managing the integrations, and whether the fragmented data model actually reduces total fraud losses once integration overhead is factored in.

The data suggests it usually doesn't. AI vs. Traditional Fraud Detection: Key Differences Every Risk Officer Should Know explores how consolidated AI approaches outperform fragmented rule-based systems across institutions that have made the switch, with detailed before-and-after metrics.

When Point Solutions Still Make Sense

Point solutions remain the right choice in specific scenarios:

- Highly specialized use cases with no platform equivalent (deep document forensics for trade finance, for example)

- Regulatory requirements that mandate specific vendor certifications the platform doesn't yet hold

- Staged migration where a platform is being phased in alongside legacy tools over 12-18 months

The Total Cost Calculation Model

| Cost Category | Point Solutions (6 tools) | Unified Platform |
|---|---|---|
| Annual licensing | $1.2M | $750K |
| Integration maintenance (FTE) | 3.0 FTE | 0.5 FTE |
| Compliance documentation | 8 weeks/year | 2 weeks/year |
| Analyst efficiency loss | 35% overhead | Baseline |
| 3-Year TCO | ~$5.4M | ~$2.8M |

These figures are illustrative, based on published industry benchmarks. Your actual numbers will vary by institution size and existing stack.
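The table's totals depend on rates it does not state: loaded cost per FTE, cost per week of compliance effort, and the cost of the analyst team that bears the efficiency loss. The sketch that follows makes those assumptions explicit; the function name and every rate in the usage note are hypothetical, so its totals will not reproduce the table's illustrative figures.

```python
def three_year_tco(annual_licensing: float,
                   integration_fte: float,
                   compliance_weeks: float,
                   analyst_overhead: float,
                   loaded_fte_cost: float,
                   compliance_cost_per_week: float,
                   analyst_team_cost: float,
                   years: int = 3) -> float:
    """Sum the table's four cost categories over the horizon.

    analyst_overhead is the efficiency loss as a fraction of the analyst
    team's annual cost (0.35 for "35% overhead", 0.0 for "Baseline").
    """
    annual = (annual_licensing
              + integration_fte * loaded_fte_cost
              + compliance_weeks * compliance_cost_per_week
              + analyst_overhead * analyst_team_cost)
    return annual * years
```

With hypothetical rates of $150K per FTE, $10K per week of compliance effort, and a $1M analyst team, the six-tool column comes to about $6.24M over three years and the platform column to about $2.5M. The useful part is that every driver of the gap is a rate the institution can source internally.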

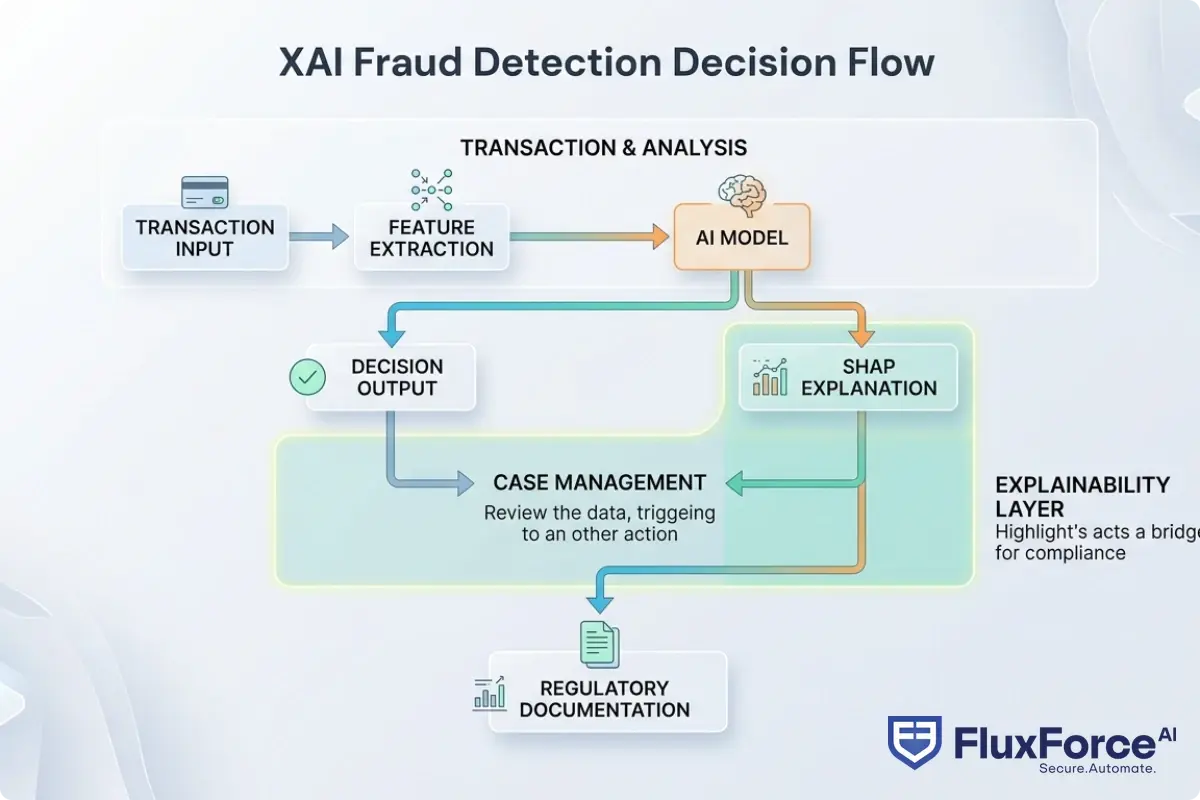

Why Explainable AI Finance Matters for ROI Justification

This gets tricky when you're presenting fraud technology ROI to a board or regulator: you need to explain not just what the system decided, but why. Explainable AI in finance isn't only a regulatory requirement under frameworks like the EU AI Act or the OCC's model risk guidance. It's also a practical ROI driver, because explainability reduces the cost of every model governance activity across the institution.

When a fraud model produces a black-box decision, each disputed transaction requires a senior analyst to reconstruct the reasoning manually. When the same model produces a SHAP-based explanation alongside the decision, that review takes minutes instead of hours. Black-box AI is not just a compliance risk; it is an operational cost multiplier that compounds with every model update cycle.

SHAP Values Explained for Regulators

SHAP values (SHapley Additive exPlanations) assign each input feature a contribution to the difference between the model's output for one case and its baseline (average) output, and those contributions add up exactly to that difference. For a fraud decision, SHAP values might show that 40% of the score's rise above baseline came from device risk, 35% from transaction velocity, and 25% from behavioral anomaly. This output is directly usable in regulatory examinations and customer dispute resolution. Model explainability is increasingly a baseline regulatory expectation, and SHAP-based explanations are often how compliance teams answer exam requests about the rationale behind specific fraud decisions.
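To make the additivity concrete, the sketch below computes exact Shapley values by brute force for a toy three-feature score, replacing "absent" features with a single baseline value. This is the quantity SHAP methods estimate; the open-source shap library uses faster model-specific or sampling-based algorithms rather than full enumeration. The scoring function, its weights, and the feature order (device risk, velocity, behavioral anomaly) are hypothetical.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Sequence


def shapley_values(model: Callable[[Sequence[float]], float],
                   x: Sequence[float],
                   baseline: Sequence[float]) -> list[float]:
    """Exact Shapley values by enumerating every feature coalition.

    Features outside a coalition take their baseline value. This makes
    n * 2**n model calls, so it only suits a handful of features.
    """
    n = len(x)
    phi = [0.0] * n

    def value(coalition: set[int]) -> float:
        z = [x[j] if j in coalition else baseline[j] for j in range(n)]
        return model(z)

    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions of this size
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_fraud_score(z: Sequence[float]) -> float:
    """Hypothetical linear score over (device_risk, velocity, behavioral_anomaly)."""
    device, velocity, behavior = z
    return 0.4 * device + 0.35 * velocity + 0.25 * behavior
```

For a transaction with all three features at 1.0 against a baseline of 0.0, `shapley_values(toy_fraud_score, [1.0, 1.0, 1.0], [0.0, 0.0, 0.0])` returns contributions of approximately 0.40, 0.35, and 0.25, which sum to the score's rise above baseline. With interaction terms the split is no longer just the weights, but the contributions still sum to that difference.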

The practical ROI implication: institutions running XAI fraud detection report 30-50% reductions in model governance audit time compared to those running opaque ensemble models. Multiply that by the number of model governance cycles per year and the savings are material enough to appear on their own line in a vendor business case.

Explainable AI Compliance: The Regulatory Cost Angle

Under SR 11-7 model risk guidance from the Federal Reserve, DORA, and emerging EU AI Act requirements, explainable AI compliance means maintaining documentation of how each model was trained, validated, monitored, and updated. A platform with built-in explainability produces this documentation as a system feature. A custom-built stack requires a separate governance layer bolted on afterward, adding cost and fragility at exactly the points regulators scrutinize most closely. For a deeper look at compliance automation approaches in practice, see DORA for Digital Banks: Regulatory Compliance Automation Strategy for Compliance Officers.
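Built-in documentation depends on a lifecycle log that examiners can trust. One common pattern is a hash chain, sketched here with Python's standard hashlib: each record stores the hash of the one before it, so a later edit to an earlier record is detectable. The class, event names, and fields are hypothetical; a real log would also carry timestamps and actor identities.

```python
import copy
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64


def _digest(record: dict) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same way
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class ModelAuditLog:
    """Append-only, hash-chained log of model lifecycle events (a sketch)."""
    entries: list[dict] = field(default_factory=list)

    def append(self, model_version: str, event: str, details: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        record = {"model_version": model_version, "event": event,
                  "details": copy.deepcopy(details), "prev_hash": prev_hash}
        record["hash"] = _digest(record)
        self.entries.append(record)
        return record["hash"]

    def verify(self) -> bool:
        """Recompute every hash and check each link to the previous entry."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

A hash chain is tamper-evident, not tamper-proof: anyone with write access could rebuild the whole chain, so the latest hash needs to be stored somewhere the log's operator cannot rewrite.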

How AI Agents in Financial Services Accelerate Returns

AI agents in financial services represent the next evolution in fraud prevention ROI. Rather than passive models that score transactions and wait for analyst review, AI agent fraud detection systems take autonomous actions within policy-defined boundaries: blocking transactions, requesting additional verification, updating risk scores, and escalating cases, all without a human in the queue.

A multi-agent AI system for fraud typically separates concerns across specialized agents: one agent handles transaction scoring, a second handles identity verification cross-checks, a third manages case prioritization, and a fourth handles regulatory reporting. Each agent is optimized for its specific task, and the system coordinates their outputs into a coherent decision.
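The separation of concerns can be sketched as four small functions and a coordinator. Everything here is hypothetical: the weights, thresholds, and field names are placeholders, and each "agent" is a plain function rather than any particular agent framework, which keeps the division of responsibilities visible.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    txn_id: str
    amount: float
    device_risk: float       # 0..1, from device intelligence
    velocity_score: float    # 0..1, recent transaction velocity
    identity_verified: bool


def scoring_agent(t: Transaction) -> float:
    """Transaction scoring: a stand-in weighted score in [0, 1]."""
    return 0.6 * t.device_risk + 0.4 * t.velocity_score


def identity_agent(t: Transaction) -> float:
    """Identity cross-check: adds risk when identity is unverified."""
    return 0.0 if t.identity_verified else 0.2


def prioritization_agent(risk: float, amount: float) -> int:
    """Case prioritization: 1 (highest) to 3 (lowest)."""
    if risk >= 0.8 or amount >= 10_000:
        return 1
    return 2 if risk >= 0.5 else 3


def reporting_agent(t: Transaction, risk: float, priority: int) -> dict:
    """Regulatory reporting: a structured record for the audit trail."""
    return {"txn_id": t.txn_id, "risk": round(risk, 3), "priority": priority}


def coordinate(t: Transaction) -> dict:
    """Coordinator: combine agent outputs into one decision record."""
    risk = min(1.0, scoring_agent(t) + identity_agent(t))
    priority = prioritization_agent(risk, t.amount)
    return reporting_agent(t, risk, priority)
```

Keeping each agent behind a narrow interface means each can be validated, versioned, and documented on its own, which is where the model governance savings discussed earlier come from.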

The ROI Impact of AI Agent Automation

The measurable ROI impact shows up in three areas:

- Straight-through processing rate: AI agents can resolve a large share of low-risk alerts without analyst involvement. Roll Out Regulatory Compliance Agents in 90 Days Using Agentic AI outlines realistic deployment timelines and automation rates from reference implementations at financial institutions of varying sizes.

- Mean time to respond: Automated fraud agents operate 24/7 with sub-second response times. Human teams cannot match this for volume events like coordinated card fraud attacks that hit thousands of accounts simultaneously.

- Consistency: Human analysts can apply rules inconsistently under high alert volume; AI agents apply the same logic every time, which helps reduce both false positives and missed detections across the full alert queue.

Configurable AI Autonomy and Human-in-the-Loop AI in Banking

The honest limitation here is that full autonomy isn't appropriate for all fraud decisions. Configurable AI autonomy means defining clear thresholds: below a certain risk score, the system acts autonomously; above it, the decision routes to a human analyst. Human-in-the-loop review in banking isn't a weakness in the AI system; it's a risk management feature that auditors and regulators expect to see documented in the governance framework.

For high-value transactions, complex identity disputes, and politically exposed person (PEP) screening, human review adds judgment that models can't fully replicate. The ROI calculation should account for this honestly: don't promise full automation in the business case when the compliance program requires human sign-off on certain decision types.
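Configurable autonomy reduces to a routing policy. In this sketch, which uses hypothetical thresholds and names, decision types the compliance program reserves for people (PEP matches, identity disputes, high-value transactions) go to a human regardless of score. Everything else is routed by risk score, with an intermediate tier that requests additional verification automatically.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    AUTO_STEP_UP = "auto_step_up_verification"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class AutonomyPolicy:
    """Hypothetical thresholds; real values come from the governance framework."""
    approve_below: float = 0.3
    step_up_below: float = 0.7
    high_value_amount: float = 50_000.0


def route_decision(risk_score: float, amount: float, is_pep: bool,
                   identity_disputed: bool, policy: AutonomyPolicy) -> Route:
    # Decision types reserved for human sign-off, regardless of model score
    if is_pep or identity_disputed or amount >= policy.high_value_amount:
        return Route.HUMAN_REVIEW
    # Otherwise the model's risk score selects the autonomy tier
    if risk_score < policy.approve_below:
        return Route.AUTO_APPROVE
    if risk_score < policy.step_up_below:
        return Route.AUTO_STEP_UP
    return Route.HUMAN_REVIEW
```

Counting how often `route_decision` returns each route over a historical sample gives a defensible straight-through processing rate for the business case, instead of an assumed full-automation figure.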

Building the Business Case for Vendor Consolidation in Fintech

The business case for vendor consolidation in fintech is most compelling when it connects technology decisions to balance sheet outcomes that a CFO can defend in a board meeting. Here's a three-frame structure that works.

Frame 1: Cost Structure Improvement

Total contract value reduction, FTE redeployment value, and infrastructure simplification savings. These are the easiest numbers to source and the most credible with finance teams who are skeptical of the ROI story before they've seen the full analysis. Start here to establish credibility before moving to the harder-to-quantify frames.

Frame 2: Revenue Protection

Fraud losses directly reduce net revenue. A 25% reduction in fraud losses on a $50M annual fraud exposure produces a $12.5M revenue protection number. CFOs understand this framing better than abstract detection rate claims, and it translates directly to the P&L in a way that a board can evaluate against other capital allocation options.

Frame 3: Regulatory Risk Reduction

Quantify the expected value of avoided regulatory fines. If your current AML program carries a 5% annual probability of a $10M enforcement action, the expected annual risk is $500K. A platform that demonstrably cuts that probability in half produces $250K in expected value annually, before any other benefit is counted. The AI audit trail automation capability of modern platforms is a direct input to this calculation, since regulators specifically scrutinize audit trail completeness when assessing penalty severity and deciding whether to escalate an examination.
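Frames 2 and 3 reduce to a few lines of arithmetic. The function names are hypothetical; the inputs in the usage note are the example figures from the text.

```python
def revenue_protection(annual_fraud_exposure: float, loss_reduction: float) -> float:
    """Frame 2: fraud losses avoided, treated as protected revenue."""
    return annual_fraud_exposure * loss_reduction


def expected_fine_reduction(p_enforcement: float, fine_amount: float,
                            probability_reduction: float) -> float:
    """Frame 3: drop in expected annual enforcement cost.

    probability_reduction is relative: 0.5 means the platform halves
    the annual probability of an enforcement action.
    """
    before = p_enforcement * fine_amount
    after = p_enforcement * (1.0 - probability_reduction) * fine_amount
    return before - after
```

`revenue_protection(50_000_000, 0.25)` returns 12,500,000, and `expected_fine_reduction(0.05, 10_000_000, 0.5)` returns approximately 250,000, matching the figures above. Expected value smooths a lumpy risk, so boards may also want to see the full $10M exposure alongside it.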

What to Include in the Vendor Scorecard

When evaluating a consolidated fraud, compliance, and identity platform, the scorecard should include:

- Model explainability output format (SHAP, LIME, or equivalent) and regulatory exam readiness

- Configurable AI autonomy thresholds and human override workflows

- API integration depth with existing core banking and identity systems

- AI audit trail automation completeness (every decision, every model version, every configuration change logged immutably)

- Time-to-value evidence from reference customers at comparable institution sizes
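A weighted scorecard is one way to turn these criteria into a number that can be compared across vendors. The criterion keys and weights are hypothetical placeholders that should reflect the institution's own priorities, and scores are assumed to be on a 0-5 scale.

```python
CRITERIA_WEIGHTS = {
    # Hypothetical weights, one per scorecard criterion; must sum to 1
    "explainability": 0.25,
    "autonomy_controls": 0.20,
    "integration_depth": 0.20,
    "audit_trail": 0.20,
    "time_to_value_evidence": 0.15,
}


def weighted_score(scores: dict[str, float],
                   weights: dict[str, float] = CRITERIA_WEIGHTS) -> float:
    """Combine 0-5 criterion scores into one weighted score on the same scale."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights)
```

Some criteria, such as audit trail completeness, may work better as pass/fail gates than as weights, since a high weighted total can hide a disqualifying gap.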

For institutions in insurance, the same framework applies with different baseline metrics. Suspicious Claim Detection: AI-Powered Fraud Detection Strategy for Policy Underwriting Managers in Insurance covers the insurance-specific ROI dynamics, including how claims fraud rates translate into the same four-category value framework.


Conclusion

Fraud prevention ROI measurement doesn't have to be a black-box exercise. The CFO framework outlined here treats fraud technology as a business investment with quantifiable returns across four categories: loss prevention, compliance cost avoidance, operational efficiency, and risk-adjusted regulatory exposure. The shift from point solutions to a unified risk platform changes the ROI math significantly, both by reducing total cost of ownership and by enabling the explainable AI compliance that modern regulators expect from every institution they supervise.

The firms getting the best returns have stopped measuring fraud prevention by incident count alone and started measuring by total cost of risk across the full prevention stack. If your current vendor contracts don't include the metrics to run this analysis, that's the first thing to fix. Build the measurement infrastructure now, and the ROI case for any future technology investment becomes straightforward to make and straightforward to defend.

Frequently Asked Questions

What is a unified risk platform?

A unified risk platform is a single technology layer that consolidates fraud detection, identity verification, compliance screening, and case management into one shared data model and workflow engine. Rather than running multiple separate tools, a unified risk platform gives fraud and compliance teams one interface, one alert queue, and one audit trail. This structure reduces operational overhead, eliminates data latency between tools, and improves cross-signal fraud detection accuracy compared to fragmented point solutions.

What is an AI security operations platform?

An AI security operations platform applies machine learning and AI agents to fraud and threat detection workflows, automating alert triage, investigation prioritization, and in some cases autonomous response actions. In financial services, these platforms combine transaction monitoring, identity verification, and case management with AI models that learn from historical fraud patterns. The key ROI driver is the platform's ability to increase straight-through processing rates while maintaining or improving detection quality.

What is the difference between point solutions and a platform in financial services?

Point solutions are standalone tools that address a single function such as transaction monitoring or identity verification, while a platform consolidates multiple functions into one integrated system. The critical difference in financial services is total cost of ownership: point solutions require separate licensing, integration maintenance, and compliance documentation for each tool, whereas a platform centralizes these costs. Platforms also enable cross-signal fraud detection that fragmented point solutions cannot achieve because they share a single data model.

What is vendor consolidation in fintech?

Vendor consolidation in fintech is the process of reducing the number of third-party technology providers by migrating from multiple point solutions to a smaller number of comprehensive platforms. The primary drivers are cost reduction through licensing and integration savings, operational simplicity from fewer vendor relationships and support contracts, and risk reduction from fewer integration points that represent potential failure modes or attack surfaces.

What is a fraud compliance identity platform?

A fraud compliance identity platform is an integrated solution that combines fraud detection, regulatory compliance controls (AML, sanctions screening), and identity verification into a single system. This consolidation eliminates data silos between fraud and compliance functions, reduces duplicate alert review across teams, and produces unified audit trails that satisfy regulatory examination requirements under frameworks like DORA, SR 11-7, and the EU AI Act.

What is explainable AI in finance?

Explainable AI in finance refers to AI models that produce human-readable explanations alongside their decisions, rather than outputting only an opaque risk score. In fraud detection, explainable AI tools use techniques like SHAP values to show which input factors drove a particular fraud decision, such as device risk, transaction velocity, or behavioral anomaly. Regulators applying model risk governance frameworks like SR 11-7 and the EU AI Act increasingly require this level of transparency for any AI model used in regulated financial processes.

What is XAI fraud detection?

XAI fraud detection is fraud detection powered by explainable AI models that provide interpretable reasoning for each transaction decision. Unlike black-box neural networks, XAI fraud detection systems can articulate why a transaction was flagged, making decisions auditable, defensible in customer dispute resolution, and compliant with model risk governance requirements. Institutions using XAI fraud detection typically report 30-50% reductions in model governance audit time compared to those running opaque ensemble models.
