
AI fraud detection is not just a buzzword making the rounds at fintech conferences. It's a measurable operational shift that separates institutions catching fraud in milliseconds from those still sorting through alert backlogs three days later. Financial institutions face a hard reality: fraud and financial crime cost the global economy an estimated $1.8 trillion annually, according to LexisNexis's True Cost of Financial Crime research, and the attacks keep getting more sophisticated. Synthetic identities, deepfake-enabled account openings, and real-time payment fraud require detection systems that adapt continuously, not just enforce static rules. This guide covers how AI fraud detection actually works under the hood, which technologies are driving adoption, where the genuine benefits sit, and which limitations compliance teams and CISOs should plan around before going live.

How AI Fraud Detection Works

AI fraud detection uses machine learning models trained on historical transaction and behavioral data to assign a risk score to each event in real time. The core processing loop is: ingest event data, extract features, score the event through one or more models, then route the decision to an outcome.

Feature extraction is where most of the differentiation from rule-based systems sits. Unlike rule engines that check a fixed set of conditions (amount above a threshold, new device, foreign IP), ML models weigh hundreds of signals simultaneously. These include behavioral signals like typing cadence, mouse movement, and session duration; device fingerprints; transaction graph relationships that reveal money mule networks; and anomaly scores measuring how far an event deviates from the user's established behavioral baseline.
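The "deviation from baseline" signal can be pictured as a z-score against the user's own history. The sketch below is illustrative only: the helper name `baseline_anomaly_score` and the sample amounts are assumptions, and production systems typically build baselines over many features rather than one.

```python
from statistics import mean, stdev

def baseline_anomaly_score(history: list[float], value: float) -> float:
    """Return how many standard deviations `value` sits from the user's history.

    Hypothetical helper: a single-feature z-score standing in for the
    multi-signal anomaly scores a real system would compute.
    """
    if len(history) < 2:
        return 0.0  # not enough history to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return 0.0 if value == mu else float("inf")
    return abs(value - mu) / sigma

# Illustrative data: a user who usually spends between 40 and 60 per purchase.
past_amounts = [42.0, 55.0, 48.0, 60.0, 45.0]
typical = baseline_anomaly_score(past_amounts, 50.0)    # sits on the mean
unusual = baseline_anomaly_score(past_amounts, 900.0)   # far outside the baseline
```

A score like this would be one input feature among hundreds, not a decision on its own.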

Most production AI fraud detection systems today use an ensemble approach. A gradient boosted model handles structured transaction features. A graph neural network maps relationships between accounts. A sequence model watches session behavior over time. Each model contributes a sub-score; a meta-model or rule overlay combines them into a final decision. The output is typically one of three buckets: approve automatically, flag for human review, or block. Thresholds for each bucket are calibrated operationally to balance false positive rates against catch rates, which is where the real operational tuning happens after deployment.
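A minimal sketch of the combine-and-route step might look like the following. The weights, thresholds, and names (`SubScores`, `combine`, `route`) are assumptions made for illustration; a weighted average stands in for the meta-model, and real thresholds come out of operational calibration.

```python
from dataclasses import dataclass

@dataclass
class SubScores:
    transaction: float  # e.g. from a gradient boosted model, in [0, 1]
    graph: float        # e.g. from a graph neural network, in [0, 1]
    session: float      # e.g. from a sequence model, in [0, 1]

# Hypothetical weights and thresholds; real values come from calibration.
WEIGHTS = {"transaction": 0.5, "graph": 0.3, "session": 0.2}
REVIEW_THRESHOLD = 0.40
BLOCK_THRESHOLD = 0.80

def combine(scores: SubScores) -> float:
    """Weighted average standing in for the meta-model."""
    return (WEIGHTS["transaction"] * scores.transaction
            + WEIGHTS["graph"] * scores.graph
            + WEIGHTS["session"] * scores.session)

def route(scores: SubScores) -> str:
    """Map the combined risk score to one of the three outcome buckets."""
    risk = combine(scores)
    if risk >= BLOCK_THRESHOLD:
        return "block"
    if risk >= REVIEW_THRESHOLD:
        return "review"
    return "approve"

low_risk = route(SubScores(transaction=0.1, graph=0.1, session=0.1))
borderline = route(SubScores(transaction=0.5, graph=0.6, session=0.4))
high_risk = route(SubScores(transaction=0.9, graph=0.95, session=0.9))
```

Lowering `REVIEW_THRESHOLD` catches more fraud at the cost of a larger review queue, which is the tradeoff the tuning work revolves around.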

Key Technologies Powering AI Fraud Detection

Biometric Identity Verification and Liveness Detection

Biometric identity verification compares a live capture, typically a selfie, against a reference image on a government-issued document to confirm a person is who they claim to be. It is now the standard approach for digital onboarding in banking, insurance, and fintech platforms that operate without in-person interactions.

The harder problem is liveness detection: confirming the biometric sample comes from a physically present person rather than a photo, a pre-recorded video replay, or an AI-generated deepfake face. Passive liveness detection runs in the background, analyzing micro-textures, light reflection patterns, and depth cues without requiring any user action. Active liveness detection challenges the user to perform a gesture. The strongest implementations combine both.

Accuracy matters operationally in ways that are easy to underestimate at procurement time. Because false accept rate applies to fraudulent attempts, a 0.5% rate lets through 5 of every 1,000 attacks; at an attack volume of 100,000 attempts, that is 500 fraudulent accounts opened. Most enterprise-grade systems now target false accept rates below 0.1% on compliant devices, and that threshold should be part of any vendor evaluation scorecard.
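The arithmetic behind that vendor threshold can be checked in a few lines. This sketch assumes the false accept rate is applied to fraudulent attempts (the conventional definition), and the attempt volume is illustrative; `expected_false_accepts` is a hypothetical helper name.

```python
def expected_false_accepts(fraud_attempts: int, false_accept_rate: float) -> float:
    """Expected number of fraudulent attempts that pass verification."""
    return fraud_attempts * false_accept_rate

# Illustrative volume: 100,000 fraudulent attempts hitting the onboarding flow.
at_half_percent = expected_false_accepts(100_000, 0.005)   # 0.5% false accept rate
at_tenth_percent = expected_false_accepts(100_000, 0.001)  # 0.1% false accept rate
reduction_factor = at_half_percent / at_tenth_percent      # how much the tighter target helps
```

Moving from 0.5% to 0.1% cuts the expected number of accepted fraudulent attempts by a factor of five at any attack volume.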

Synthetic Identity Fraud Detection

Synthetic identity fraud is widely described as one of the fastest-growing fraud types in financial services. A fraudster combines a real Social Security number (often from a child or a deceased individual) with fabricated names, addresses, and dates of birth to create an identity that passes basic credit bureau checks. These synthetic identities accumulate credit history over months before a bust-out event, in which the maximum credit lines are drawn down and then abandoned.

AI catches synthetic identities by analyzing combinations of weak signals that no single rule would flag: the SSN was issued in a state different from the stated birth location, the phone number was created within 48 hours of the application, the device IP is linked to multiple recently opened accounts across different institutions. The model's ability to weight combinations of indirect evidence is what makes it effective where rule-based systems fail on this specific fraud pattern.
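To make the "combinations of weak signals" idea concrete, here is a small sketch using a logistic combination of binary signals. The signal names mirror the examples above, but the weights and bias are invented for illustration; a trained model would learn them from labeled data and would use far more inputs.

```python
import math

# Hypothetical log-odds weights; a trained model would learn these from labeled data.
SIGNAL_WEIGHTS = {
    "ssn_state_mismatch": 1.2,
    "phone_created_recently": 1.5,
    "ip_linked_to_new_accounts": 1.8,
}
BIAS = -4.0  # assumed prior: most applications are legitimate

def synthetic_identity_risk(signals: dict[str, bool]) -> float:
    """Logistic combination of binary weak signals into a probability-like score."""
    log_odds = BIAS + sum(
        weight for name, weight in SIGNAL_WEIGHTS.items() if signals.get(name, False)
    )
    return 1.0 / (1.0 + math.exp(-log_odds))

# One weak signal alone stays low; all three together push the score past 0.5.
one_signal = synthetic_identity_risk({"ssn_state_mismatch": True})
all_signals = synthetic_identity_risk({name: True for name in SIGNAL_WEIGHTS})
```

No single signal crosses a sensible review threshold here, which is why a rule keyed to any one of them would either miss the pattern or flood the queue.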

For a detailed look at how real-time detection systems handle this pattern in production environments, "Detecting Synthetic Identity Fraud in Real-Time" covers the specific architectural decisions that determine whether detection happens at onboarding or months later.

Deepfake Detection in Banking

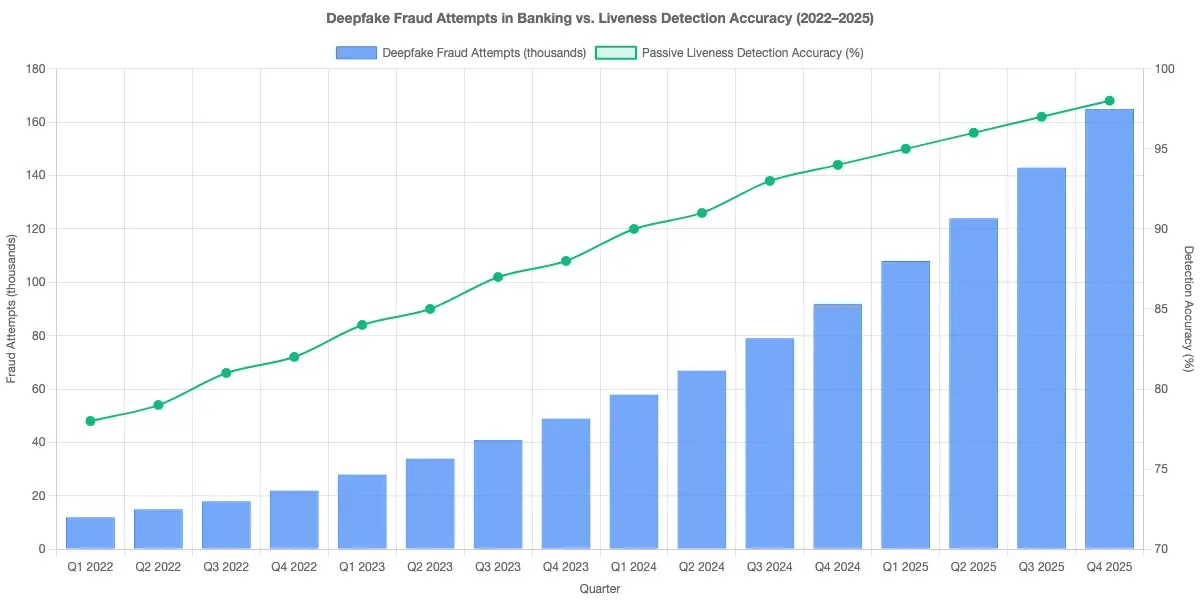

Deepfake detection in banking addresses a threat that became operationally significant around 2022: AI-generated face swaps and voice clones used to bypass video KYC checks or impersonate account holders during verification calls. A fraudster using a deepfake can present a synthetic face that matches a stolen document photo with enough fidelity to fool standard facial comparison algorithms that were not designed with this attack vector in mind.

Detection approaches include analyzing video compression artifacts, inconsistencies in facial geometry across frames, unnatural blinking frequency, and spectral analysis of audio for synthesis markers. The practical challenge is that deepfake generation quality is improving faster than detection models are being updated. No vendor has fully solved this, which means deepfake detection should be one layer in a defense stack, not the sole identity safeguard.

AI Fraud Detection vs. Traditional Rule-Based Systems

Rule-based fraud detection runs on hand-coded logic: if a transaction meets certain criteria, flag it. The practical problem is that rules have to be written and maintained by human analysts, which means they lag behind new fraud patterns by weeks or months. They also generate high false positive rates because they cannot account for individual customer context when applying broad thresholds.

AI fraud detection systems learn from labeled outcomes. When a fraud analyst marks a flagged transaction as legitimate, the model updates its understanding of what normal looks like for that customer segment. This feedback loop is what allows AI systems to maintain accuracy as fraud methods evolve, without manual rule rewrites each time a new attack pattern appears.

The honest comparison here: rule-based systems are more explainable and auditable, which matters during regulatory examinations. AI systems catch more fraud with fewer false positives, but the reasoning behind any individual decision requires interpretability tooling (SHAP values, LIME, or surrogate models) to make it readable to a regulator or auditor. Most production environments use both: AI for probabilistic scoring, rules for hard blocks on confirmed-bad patterns.

For a detailed breakdown of where these approaches diverge on accuracy, cost, and operational burden, "AI vs. Traditional Fraud Detection: Key Differences Every Risk Officer Should Know" is worth reviewing before finalizing your architecture decision.

The Role of Digital Identity Proofing and KYC Onboarding Speed

Digital identity proofing is the process of verifying that a person is who they claim to be during an online interaction, using document scanning, biometric matching, and database cross-referencing against sanctions lists, PEP databases, and credit bureaus. It sits at the front of the fraud prevention chain: accurate proofing at onboarding substantially reduces downstream fraud risk across the account lifecycle.

KYC onboarding speed is where AI creates the clearest and most measurable business case. Traditional manual KYC review takes 7 to 10 business days at conventional banks. AI-assisted digital identity proofing completes the same set of checks in under 3 minutes for clean applications, running document OCR, liveness detection, sanctions screening, and anomaly scoring simultaneously rather than in sequence.
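The "simultaneously rather than in sequence" point is essentially a concurrency pattern. The sketch below uses Python's `asyncio.gather` with sleeps standing in for real checks; the check names and latencies are assumptions, and a real integration would call actual OCR, liveness, and screening services.

```python
import asyncio
import time

async def run_check(name: str, seconds: float) -> tuple[str, str]:
    """Stand-in for a real check; sleeps to simulate I/O latency."""
    await asyncio.sleep(seconds)
    return name, "pass"

async def verify_applicant() -> dict[str, str]:
    # Hypothetical check names and latencies, for illustration only.
    results = await asyncio.gather(
        run_check("document_ocr", 0.05),
        run_check("liveness", 0.05),
        run_check("sanctions_screening", 0.05),
        run_check("anomaly_scoring", 0.05),
    )
    return dict(results)

start = time.perf_counter()
outcome = asyncio.run(verify_applicant())
elapsed = time.perf_counter() - start  # close to the slowest check, not the sum of all four
```

Run concurrently, total latency tracks the slowest check; run in sequence, it would be the sum of all four.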

The tradeoff worth stating plainly: faster onboarding requires higher confidence in automated decisions. Institutions that push automated approval rates too high to reduce friction will see elevated fraud rates in the first 90 days of new customer cohorts. The calibration between speed and accuracy is a business decision with direct P&L consequences, not a technical configuration detail that can be left to engineers alone.

For institutions running regulated onboarding workflows, "AML Risk Checks in Policy Issuance: KYC/AML & Identity Verification Strategy for Claims Directors in Insurance" covers how identity proofing checks connect with downstream AML screening and compliance requirements.

Identity Verification API: How Integration Actually Works

An identity verification API is the technical interface through which a bank, fintech, or insurer connects its onboarding or transaction monitoring system to an external verification engine. Typical API calls include: submit document images for OCR and authenticity scoring, submit a biometric capture for facial comparison and liveness analysis, and retrieve a verification result with a confidence score and a structured decision code.

Integration architecture matters more than most teams expect going in. Latency is a real operational concern: a verification flow that adds 8 seconds to a mobile signup creates measurable conversion drop-off, particularly on mobile-first products. Well-designed identity verification APIs return decisions in 1 to 3 seconds for standard document types under normal load. Webhook callbacks are generally preferred over polling for asynchronous results, returning a session token immediately while delivering the final result when processing completes.

Rate limiting on the API layer is a security concern that is easy to overlook during integration planning. Fraud rings probe verification endpoints to map which document and data combinations pass. "API Rate Limiting: API Security Strategies for CISOs in Banking" covers the access control patterns that prevent enumeration attacks on verification endpoints and the monitoring signals that indicate probing activity is underway.
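One common way to limit probing is a per-client token bucket. The following is a minimal in-memory sketch, not a description of any particular vendor's implementation; the class name, capacity, and refill rate are assumptions, and a production deployment would usually keep this state in a shared store at the API gateway.

```python
import time
from typing import Optional

class TokenBucket:
    """Per-client token bucket: `capacity` burst, refilled at `rate` tokens per second."""

    def __init__(self, capacity: int, rate: float, now: Optional[float] = None):
        self.capacity = capacity
        self.rate = rate
        self.tokens = float(capacity)
        self.updated = time.monotonic() if now is None else now

    def allow(self, now: Optional[float] = None) -> bool:
        """Return True if the request may proceed, consuming one token."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False

# Hypothetical limits: bursts of 3 verification calls, refilled at 1 per second.
bucket = TokenBucket(capacity=3, rate=1.0, now=0.0)
burst = [bucket.allow(now=0.0) for _ in range(5)]  # first 3 pass, the rest are rejected
after_wait = bucket.allow(now=2.0)                 # tokens have refilled after 2 seconds
```

Rejected requests are themselves a useful monitoring signal: a client that repeatedly exhausts its bucket on a verification endpoint is worth a closer look.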

Benefits of AI Fraud Detection for Financial Institutions

The operational benefits are concrete when institutions move from rule-based to AI-driven fraud detection:

- False positive reduction: AI systems routinely cut false positive rates by 40 to 70% compared to rule engines on the same transaction volume. Each false positive costs analyst time and creates a customer friction event that carries churn risk.

- Real-time detection: Scoring happens during a transaction rather than in a batch review cycle hours later, which matters most for real-time payment rails where fraud cannot be recalled after settlement.

- Adaptive detection: The model updates as fraud patterns shift without requiring manual rule rewrites. This is the single biggest operational advantage over static rule engines in fast-moving fraud environments.

- Cross-channel correlation: AI connects signals across mobile, web, call center, and branch channels, catching account takeover attempts that appear legitimate on any single channel viewed in isolation.

- Reduced review queue volume: Institutions using AI-assisted triage consistently report 50 to 60% reductions in cases requiring human analyst review, which directly reduces operational cost per transaction.

For a focused look at how agentic AI fraud systems specifically address false positive volume at scale, "How Agentic AI Fraud Agents Cut False Positives by 80%" covers the mechanism in detail.

Regulatory and compliance benefits also apply. Accurate fraud detection lowers chargeback rates, which affects payment processing costs and card network standing. For institutions subject to the Digital Operational Resilience Act in the EU, AI-driven fraud systems that maintain decision audit logs contribute to operational resilience documentation requirements that regulators are actively examining.

Real Limitations You Should Know

AI fraud detection is not a complete solution, and treating it as one creates new risk exposure that is easy to miss during initial deployment planning.

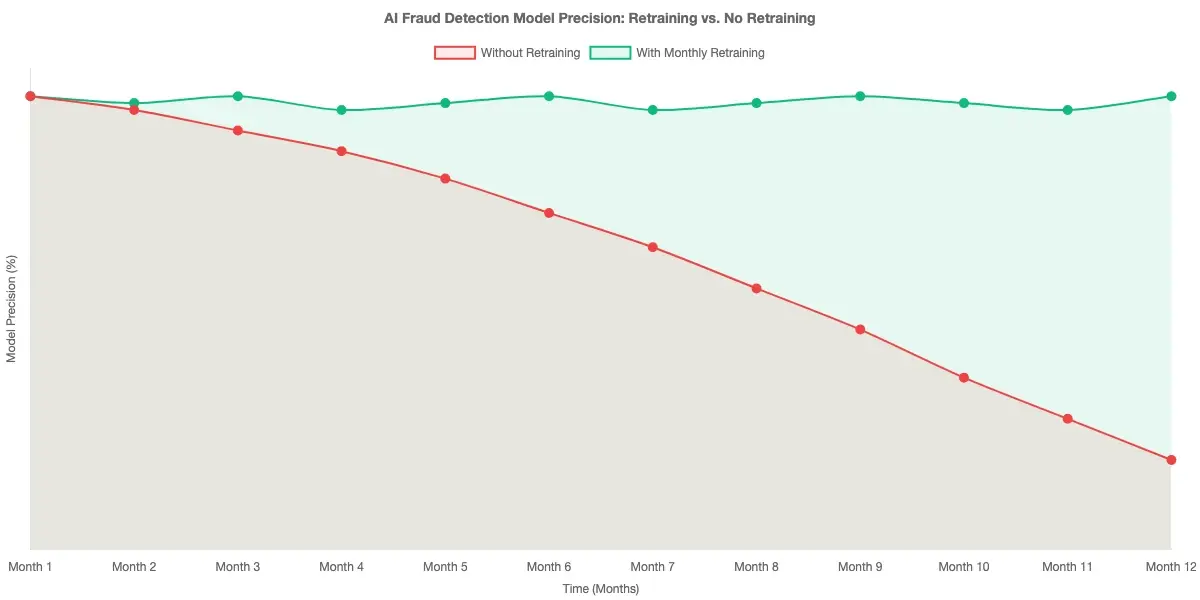

Model drift is the most operationally significant limitation. Fraud patterns change, and a model trained on last year's data degrades in accuracy as new attack types emerge. Without a monitoring program that tracks precision and recall on a rolling basis and triggers retraining when performance drops below acceptable thresholds, degradation often goes undetected until fraud losses spike in a quarterly review. This is not a theoretical concern; it's a documented failure mode at institutions that deployed AI systems without building ongoing maintenance into their operating model.
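A rolling monitor of this kind can be sketched in a few dozen lines. The class below is a hypothetical illustration: the window size and thresholds are invented, and it assumes confirmed fraud labels eventually arrive for each scored event, which in practice can lag by days or weeks.

```python
from collections import deque

class RollingPerformanceMonitor:
    """Track precision and recall over the most recent `window` labeled outcomes."""

    def __init__(self, window: int, min_precision: float, min_recall: float):
        self.outcomes = deque(maxlen=window)  # (predicted_fraud, actual_fraud) pairs
        self.min_precision = min_precision
        self.min_recall = min_recall

    def record(self, predicted_fraud: bool, actual_fraud: bool) -> None:
        self.outcomes.append((predicted_fraud, actual_fraud))

    def precision_recall(self) -> tuple[float, float]:
        tp = sum(1 for p, a in self.outcomes if p and a)
        fp = sum(1 for p, a in self.outcomes if p and not a)
        fn = sum(1 for p, a in self.outcomes if not p and a)
        precision = tp / (tp + fp) if tp + fp else 1.0
        recall = tp / (tp + fn) if tp + fn else 1.0
        return precision, recall

    def needs_retraining(self) -> bool:
        precision, recall = self.precision_recall()
        return precision < self.min_precision or recall < self.min_recall

# Hypothetical thresholds; acceptable levels are a business decision.
monitor = RollingPerformanceMonitor(window=100, min_precision=0.75, min_recall=0.70)
for _ in range(8):
    monitor.record(True, True)    # fraud correctly flagged
for _ in range(2):
    monitor.record(True, False)   # false positives
healthy = not monitor.needs_retraining()
for _ in range(6):
    monitor.record(False, True)   # a new attack type the model misses
degraded = monitor.needs_retraining()
```

In the example, six missed fraud cases are enough to pull recall below the threshold and raise the retraining flag long before a quarterly review would surface the problem.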

Explainability gaps create compliance friction at examination time. When a legitimate customer is declined, most regulatory jurisdictions require a specific adverse action reason. Black-box model outputs need interpretability layers to generate human-readable explanations. This is architecturally solvable, but it adds complexity that is frequently scoped out of initial deployments and added later under regulatory pressure, at greater cost.
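As a simplified picture of what an interpretability layer does, the sketch below ranks per-feature contributions of a linear score and maps the largest ones to reason text. It is a stand-in for attribution methods such as SHAP or LIME, not their APIs; the weights, feature names, and reason strings are all hypothetical.

```python
# Hypothetical linear model weights and reason text, for illustration only.
WEIGHTS = {"new_device": 0.9, "amount_vs_baseline": 1.4, "account_age_days": -0.01}
REASONS = {
    "new_device": "Transaction originated from an unrecognized device",
    "amount_vs_baseline": "Amount is far above the customer's usual range",
}

def top_reasons(features: dict[str, float], limit: int = 2) -> list[str]:
    """Return reason text for the features that pushed the risk score up the most."""
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    ranked = sorted(contributions.items(), key=lambda item: item[1], reverse=True)
    # Only positive contributions count as reasons for a decline.
    return [REASONS.get(name, name) for name, contribution in ranked[:limit] if contribution > 0]

declined = {"new_device": 1.0, "amount_vs_baseline": 3.0, "account_age_days": 400.0}
reasons = top_reasons(declined)
```

Here the long account age lowers the score and is never reported, while the two features that raised it become the human-readable reasons attached to the decision.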

Adversarial attacks are a genuine threat in high-volume environments. Sophisticated fraud rings probe model behavior by running low-value test transactions to identify which patterns score as low risk. Over time, they route higher-value fraud through those patterns. This is why AI scoring should sit within a zero trust security framework that continuously validates identity and behavior across sessions, rather than operating as a standalone gate that is only checked once at transaction initiation.

The relationship between zero trust principles and fraud prevention is worth understanding explicitly. "Banking Access Controls: Zero Trust Security Architecture Strategy for Banking Ops Heads" covers how continuous verification in a zero trust architecture reduces the attack surface that AI fraud models are defending, making the overall system more resilient to adversarial probing.

Data quality dependency is frequently underestimated during procurement. AI models inherit the biases in their training data. If historical fraud labels have systematic errors, including over-flagging legitimate transactions from certain customer demographics, the model learns and amplifies those patterns. Auditing training data quality and evaluating model performance disaggregated by customer segment is a prerequisite for responsible deployment, not a post-launch consideration.
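Disaggregated evaluation can start with something as simple as a false positive rate per segment. The sketch below is a minimal illustration with made-up segment labels and counts; the function name and record layout are assumptions, and a real audit would cover more metrics and check statistical significance.

```python
from collections import defaultdict

def false_positive_rate_by_segment(records):
    """Compute the share of legitimate events flagged as fraud, per segment.

    records: iterable of (segment, flagged, actually_fraud) tuples.
    """
    flagged_legit = defaultdict(int)
    total_legit = defaultdict(int)
    for segment, flagged, actually_fraud in records:
        if not actually_fraud:
            total_legit[segment] += 1
            if flagged:
                flagged_legit[segment] += 1
    return {seg: flagged_legit[seg] / total for seg, total in total_legit.items()}

# Illustrative data: segment B's legitimate customers are flagged four times as often.
sample = (
    [("A", True, False)] * 1 + [("A", False, False)] * 9
    + [("B", True, False)] * 4 + [("B", False, False)] * 6
    + [("A", True, True)]  # confirmed fraud is excluded from the false positive rate
)
rates = false_positive_rate_by_segment(sample)
```

A gap like the one between segments A and B is the kind of pattern that should be investigated before launch, since retraining on those labels would reinforce it.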

AI systems also require more infrastructure investment than rule engines: inference pipelines, model monitoring, retraining workflows, and interpretability tooling all add cost that does not exist with a static rule set. The ROI is typically positive for institutions processing more than 50,000 transactions per day, but smaller operations may find that managed service models are more practical than building and maintaining the full stack in-house.


Conclusion

At the level that drives operational decisions, AI fraud detection is a combination of machine learning models, behavioral analytics, biometric identity verification, and real-time data enrichment that catches fraud patterns static rules and manual review queues miss. The benefits are real and measurable, particularly in false positive reduction, KYC onboarding speed, and adaptive detection as fraud methods evolve. The limitations are equally real: model drift, explainability requirements, adversarial probing, and data quality dependencies all require active ongoing management to sustain the accuracy gains that justified the investment.

Institutions that get sustained value from AI fraud detection treat it as a living operational capability rather than a one-time deployment. That means monitoring model performance continuously, investing in interpretability tooling ahead of regulatory examination cycles, and layering AI scoring within a zero trust security architecture. If you're evaluating where AI fraud detection fits in your security stack, start with your current false positive rate and analyst review volume. Those two numbers define the baseline that AI can improve most predictably, and they give you a clear benchmark for measuring whether a vendor's claims hold up in your specific environment.

Frequently Asked Questions

What is identity verification in fintech?

Identity verification in fintech is the process of confirming that a user's claimed identity matches a real, legitimate individual before granting access to financial products or services. It typically involves document verification (passport, driver's license), biometric matching against that document, and cross-referencing government databases or credit bureaus. Fintech platforms use automated identity verification to meet KYC (Know Your Customer) regulations, reduce onboarding fraud, and comply with AML screening requirements without adding days to the customer sign-up process.

What is KYC onboarding speed?

KYC onboarding speed refers to how quickly a financial institution completes the Know Your Customer verification process for a new applicant. With AI-assisted digital identity proofing, clean applications can be verified in under 3 minutes. Manual KYC processes typically take 7 to 10 business days at traditional banks. Faster onboarding reduces customer drop-off at sign-up and improves conversion rates, but requires careful calibration: pushing automated approval rates too high without sufficient accuracy will produce elevated fraud rates in the first 90 days of new customer cohorts.

What is biometric identity verification?

Biometric identity verification uses unique physical characteristics, primarily facial features in digital financial services, to confirm a person's identity. The process compares a live selfie against a reference image on a government-issued ID using facial recognition algorithms. It provides stronger identity assurance than knowledge-based authentication like security questions and is considerably harder to spoof than passwords. In regulated onboarding contexts, biometric verification is typically combined with liveness detection to confirm the sample comes from a physically present person rather than a photo or replay.

What is liveness detection fraud?

Liveness detection fraud occurs when an attacker attempts to bypass a biometric verification check by presenting a non-live representation of a face: a printed photo, a pre-recorded video, a 3D mask, or an AI-generated deepfake. Liveness detection is the countermeasure, using techniques like passive texture analysis, 3D depth sensing, and challenge-response gestures to confirm the biometric sample comes from a physically present person. Passive liveness runs silently in the background; active liveness asks for a real-time gesture. The most robust implementations layer both approaches to address the full range of presentation attacks.

What is digital identity proofing?

Digital identity proofing is the end-to-end process of establishing that a person interacting online is who they claim to be, without requiring an in-person interaction. It combines document verification, biometric matching, database checks against sanctions lists and PEP registries, and behavioral signal analysis. NIST SP 800-63-3 defines three identity assurance levels (IAL1, IAL2, IAL3) that financial institutions can reference when setting proofing requirements for different risk tiers. Higher assurance levels require more rigorous evidence and verification steps, trading some onboarding speed for lower identity fraud exposure.

What is an identity verification API?

An identity verification API is a programmatic interface that allows an application to submit identity documents, biometric samples, and personal data to an external verification engine and receive a structured decision response with a confidence score and decision code. Banks, fintechs, and insurers use these APIs to integrate third-party verification capabilities into their onboarding and transaction monitoring systems without building the underlying ML models in-house. Key integration considerations include response latency (well-designed APIs return decisions in 1 to 3 seconds), webhook support for asynchronous results, and rate limiting controls to prevent enumeration attacks on the endpoint.

What is synthetic identity fraud?

Synthetic identity fraud is a fraud technique where an attacker creates a fictitious identity by combining real and fabricated personal information, typically a real Social Security number paired with an invented name, date of birth, and address. Unlike traditional identity theft, there is no direct victim to report unauthorized activity, which makes synthetic identities harder to detect through conventional means. AI-based synthetic identity fraud detection systems catch these by analyzing combinations of weak signals that no single rule would flag: SSN issuance state mismatches, phone numbers created within hours of the application, and device identifiers linked to clusters of recently opened accounts across institutions.
