
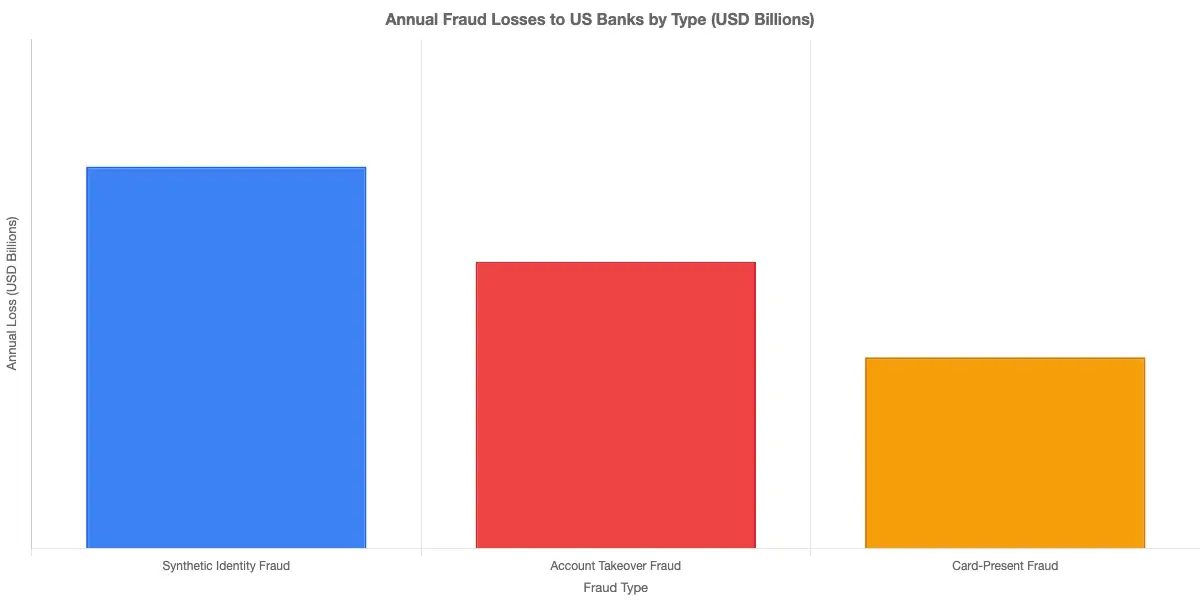

Synthetic identity fraud detection has become the most expensive problem banks can't solve with existing transaction monitoring software. Unlike account takeover, where a criminal steals a real person's credentials, synthetic fraud involves constructing a fictional identity from scratch, often blending a real Social Security Number with fabricated names, addresses, and phone numbers. The resulting identity passes standard KYC checks, builds a credit history over 12-24 months, then executes a "bust-out" that empties every available credit line in days. According to the Federal Reserve, synthetic identity fraud is the fastest-growing financial crime in the United States, costing lenders an estimated $6 billion annually. Most institutions detect these schemes only after funds are gone. This post explains why, and what modern AI systems do differently.

What Is Synthetic Identity Fraud?

Synthetic identity fraud is the deliberate construction of a fictitious person using a mix of real and fabricated data elements to deceive financial institutions. The identity may pass every automated verification check in place today, because it was specifically designed to.

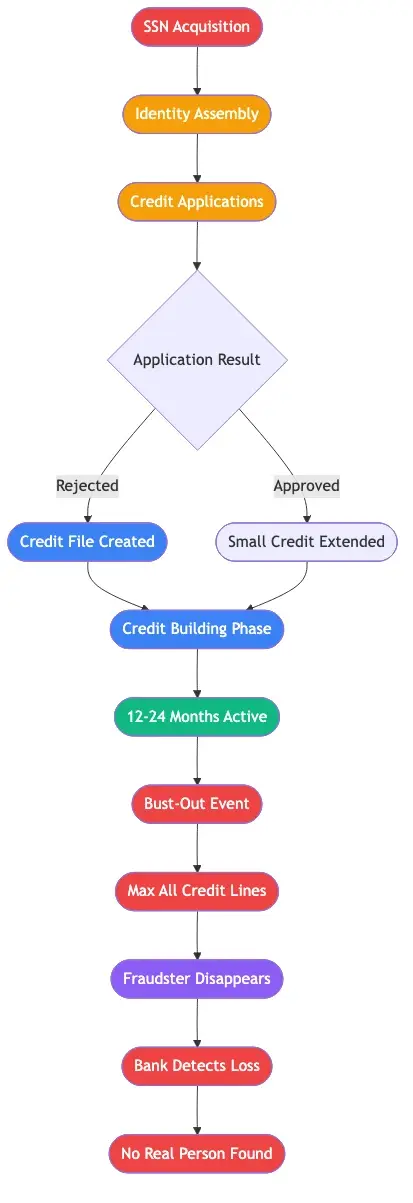

How Synthetic Identities Are Built

The construction follows a predictable sequence. A fraudster acquires a real SSN, often belonging to a child with no credit history, a recently deceased person, or someone who has never used credit. They attach a fabricated name, date of birth, and address to that SSN, then begin applying for credit. Early applications are rejected, but each rejection creates a credit file at the bureaus. Over time, the fraudster adds a secured card and a retail credit line, building a positive payment history.

After 12-24 months of careful credit-building behavior, the synthetic identity looks like a model customer. Credit scores are solid. Payment history is clean. Then comes the bust-out: every available credit line gets maxed in days, and the fraudster disappears. Average loss per synthetic identity in a bust-out ranges from $15,000 to $25,000. Organized rings running 50 identities simultaneously can generate losses exceeding $1 million from a single coordinated attack.

Why Standard KYC Checks Miss the Problem

Standard KYC verification confirms that an SSN is valid, that the name is internally consistent with submitted documents, and that the address exists. It does not confirm that the person submitting those elements actually is who they claim to be. A synthetic identity passes all three checks because the underlying SSN is real, the documents are convincing, and there is no real victim filing a complaint. This is the core detection gap: traditional verification looks at document authenticity but not identity coherence across the full customer lifecycle.

Why Traditional Fraud Detection Falls Short

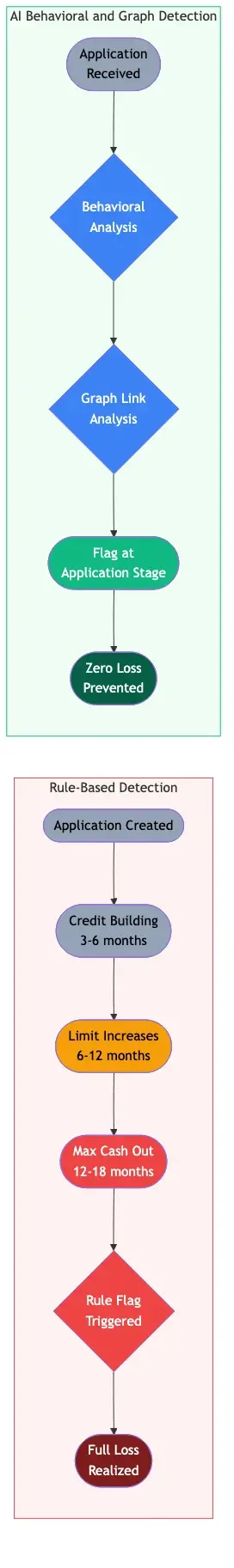

Rule-based transaction monitoring software was designed to catch known deviations: velocity breaches, unusual geographies, atypical amounts. Automated transaction monitoring tools built on static rules cannot detect patterns that evolve over months or span multiple accounts. Synthetic fraud defeats this model before it starts.

The Credit-Building Phase Looks Clean

For most of its operational life, a synthetic identity generates zero rule-based alerts. The fraudster pays on time, maintains low utilization, and never triggers a velocity rule. The account scores as low-risk throughout the entire credit-building phase. The fraud signal only appears at bust-out, at which point the money is already gone. Detection at that stage informs the loss calculation. It does not prevent it.

Data Silos and the Cross-Institution Problem

A coordinated synthetic fraud ring typically operates 20-50 identities simultaneously across multiple banks. Within any single institution, each identity appears isolated and low-risk. Across the banking sector, shared infrastructure (same phone numbers, same device IDs, same addresses across dozens of applications) is a clear signature of coordinated fraud. FinCEN has flagged synthetic identity rings as a priority AML concern precisely because the cross-institution coordination makes traditional detection methods ineffective at scale. Legacy systems simply do not have visibility beyond their own data silos.

How Does AI Detect Fraud? The Science Behind Modern Detection

AI systems identify fraud by ingesting behavioral signals, network relationships, and transactional data simultaneously, computing anomaly scores across hundreds of dimensions, and making probabilistic judgments that a static rule set cannot replicate. For synthetic identity fraud detection specifically, this multi-dimensional view changes the outcome.

Machine Learning Fraud Detection: Two Key Approaches

Machine learning fraud detection works through two primary model types. Supervised models train on labeled historical data (known fraud versus legitimate accounts) to classify new events. They perform well when fraud patterns are stable and training data is abundant.

Unsupervised models are more critical for synthetic fraud. They identify unusual clustering and outlier behavior without relying on labeled examples. Since synthetic identities do not match previously seen fraud patterns by design, unsupervised graph analysis is what actually catches them. A graph model maps every shared data element across all accounts: the same phone number attached to 12 different applicants, the same device accessing 20 accounts. These network-level signals are invisible to any rule-based system examining accounts individually.
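As a rough illustration of the graph idea, the sketch below links applications that share a phone number or device ID and returns the connected groups. It is a minimal stand-in, not any vendor's method: the field names (`id`, `phone`, `device_id`), the `find_rings` helper, and the `min_size` cut-off are all assumptions made for the example.

```python
from collections import defaultdict


def find_rings(applications, min_size=3):
    """Group applications connected by a shared phone or device (union-find)."""
    parent = {app["id"]: app["id"] for app in applications}

    def find(x):
        # Walk up to the root, shortening the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a, b):
        parent[find(a)] = find(b)

    first_seen = {}  # (field, value) -> first application id that used it
    for app in applications:
        for field in ("phone", "device_id"):  # hypothetical linking fields
            key = (field, app[field])
            if key in first_seen:
                union(app["id"], first_seen[key])
            else:
                first_seen[key] = app["id"]

    clusters = defaultdict(set)
    for app in applications:
        clusters[find(app["id"])].add(app["id"])
    # Only groups at or above min_size are reported as candidate rings.
    return [c for c in clusters.values() if len(c) >= min_size]


applications = [
    {"id": "A1", "phone": "555-0101", "device_id": "dev-1"},
    {"id": "A2", "phone": "555-0101", "device_id": "dev-2"},
    {"id": "A3", "phone": "555-0199", "device_id": "dev-2"},
    {"id": "A4", "phone": "555-0150", "device_id": "dev-9"},
]
rings = find_rings(applications)
```

Here A1 and A2 share a phone and A2 and A3 share a device, so all three land in one group even though A1 and A3 have nothing directly in common. That transitive link is what a per-account rule cannot see.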

How Does AI Detect Fraud Step by Step?

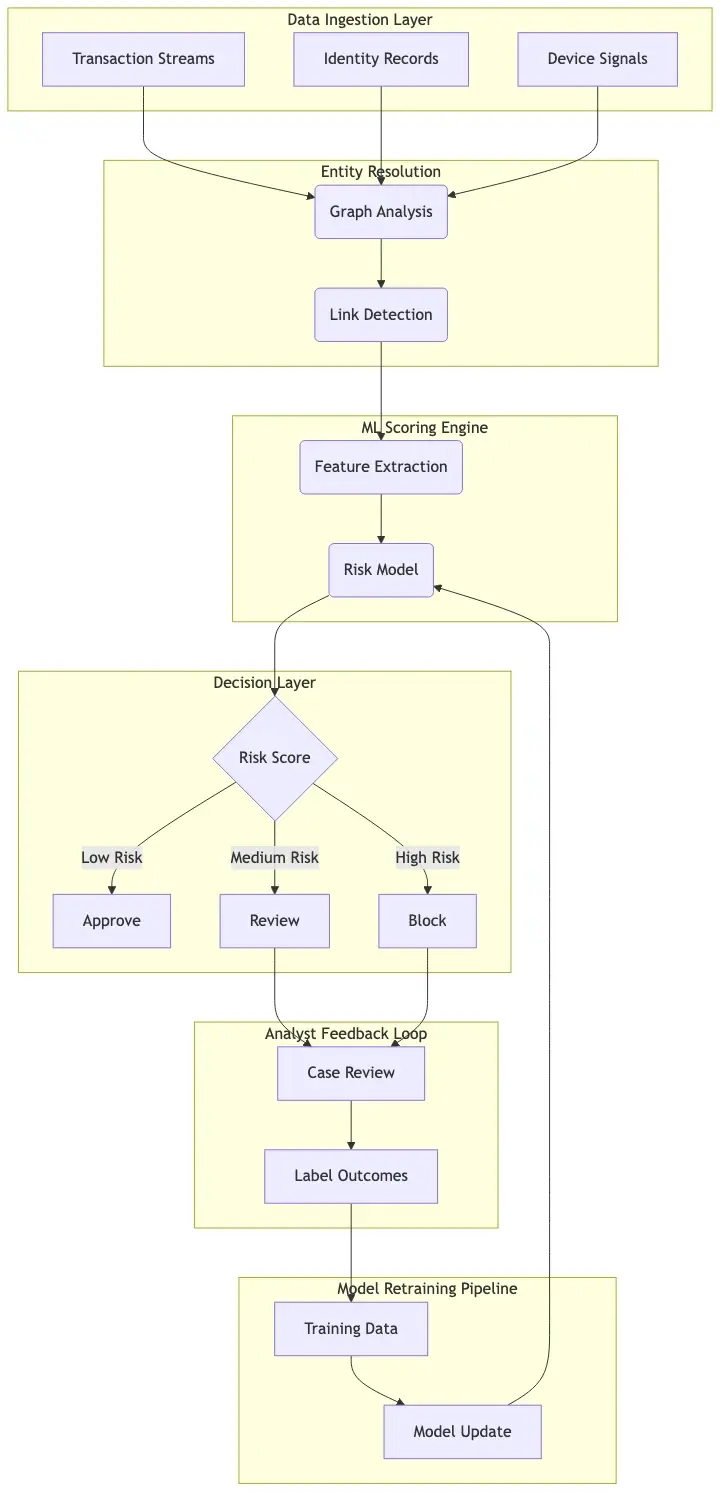

The operational flow for AI fraud detection in banking works like this:

- Ingest all events: Every application, transaction, login, and device event enters the system in real time.

- Build entity graphs: The system links accounts sharing common data elements, revealing hidden relationships across apparently independent customers.

- Score each event: A multi-dimensional fraud probability score is computed, typically in under 100 milliseconds for card transactions.

- Apply decision logic: Events above risk thresholds route to analysts or trigger automated blocks; low-risk events pass transparently.

- Close the feedback loop: Analyst decisions feed back into the model, continuously improving accuracy.

The best fraud detection software combines all five steps into a unified platform rather than stitching together separate tools. The integration between the graph layer and the decisioning layer is where most deployments succeed or break down.
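The five steps can be sketched as one small class. Everything here is a toy under stated assumptions: the `Pipeline` name, the device-count score, and the 0.5 and 0.9 thresholds are hypothetical and stand in for a real model and real decision policy.

```python
from dataclasses import dataclass, field


@dataclass
class Pipeline:
    review_threshold: float = 0.5  # hypothetical cut-off for analyst review
    block_threshold: float = 0.9   # hypothetical cut-off for automated block
    device_index: dict = field(default_factory=dict)  # device_id -> account ids
    labels: list = field(default_factory=list)        # analyst dispositions

    def ingest(self, event):
        # Steps 1-2: record the event and link accounts that share a device.
        self.device_index.setdefault(event["device_id"], set()).add(event["account"])

    def score(self, event):
        # Step 3: toy score that grows with the number of accounts on the device.
        linked = len(self.device_index[event["device_id"]])
        return min(1.0, 0.2 * (linked - 1))

    def decide(self, score):
        # Step 4: route by threshold.
        if score >= self.block_threshold:
            return "block"
        if score >= self.review_threshold:
            return "review"
        return "pass"

    def feedback(self, event, is_fraud):
        # Step 5: keep the analyst decision as a label for later retraining.
        self.labels.append((event, is_fraud))

    def process(self, event):
        self.ingest(event)
        return self.decide(self.score(event))


pipe = Pipeline()
decisions = [
    pipe.process({"account": f"acct-{i}", "device_id": "dev-7"}) for i in range(6)
]
```

As more accounts appear on the same device, the decision moves from pass to review to block. The graph layer (the device index) and the decisioning layer (the thresholds) live in the same object, which is the integration point the paragraph above describes.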

The False Positive Problem Costing Banks Millions

Fraud alert fatigue is the operational crisis that does not appear on fraud loss reports. When a compliance team receives 1,000 alerts per day and 900 are legitimate customer activity, the team does not get better at finding fraud. It gets better at dismissing alerts, including real ones.

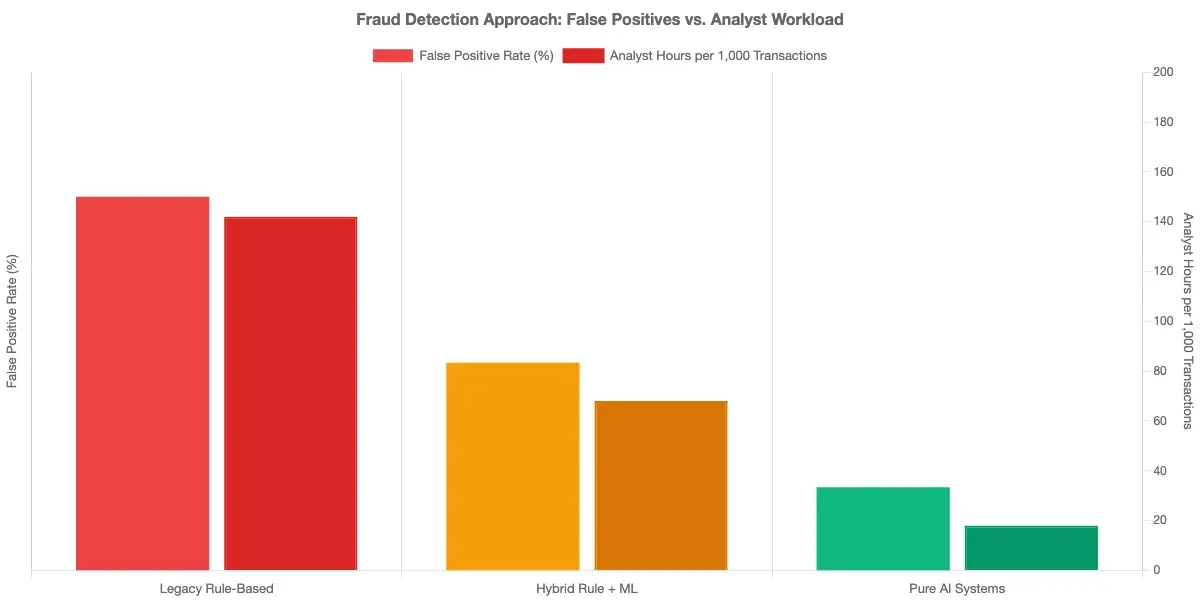

False Positive Rate in Fraud Detection: The Real Numbers

The average false positive rate for legacy rule-based systems sits between 80% and 95%, measured as the share of alerts that turn out to be legitimate activity. This is not a marginal inefficiency. It means fraud analysts spend the majority of their day reviewing activity that is not fraud, while actual cases get buried in the volume. Modern AI systems target a false positive rate below 30%. Best-performing deployments reach 10-15%. Going from 90% to 15% raises the share of genuine alerts from 10% to 85%, so the same analyst team reviewing the same number of alerts covers roughly eight times more genuine fraud with the same headcount.
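The analyst-capacity arithmetic can be checked directly. The sketch assumes a fixed review capacity of 1,000 alerts per day (the figure used earlier in this section) and treats the false positive rate as the share of alerts that are not fraud; `genuine_alerts` is a helper named for this example only.

```python
def genuine_alerts(alerts_per_day, false_positive_pct):
    """Alerts that are real fraud, given the percentage that are false positives."""
    return alerts_per_day * (100 - false_positive_pct) / 100


legacy = genuine_alerts(1000, 90)  # rule-based system at a 90% false positive rate
modern = genuine_alerts(1000, 15)  # AI system at a 15% false positive rate
yield_ratio = modern / legacy      # genuine cases per reviewed alert, new vs. old
```

The ratio only holds if alert volume and review capacity stay the same. If the new system also raises fewer alerts overall, the team's time per genuine case improves further.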

The Cost of False Positives: What CFOs Miss

The false positive cost calculation most CFOs use is too narrow. They count fraud losses and analyst headcount; they miss downstream costs. A false positive blocking a legitimate transaction generates a contact center call, costs 8-12 minutes of handle time, and carries a real probability of permanent account closure. Industry research shows 30-40% of customers who experience a false block close their account within 90 days. At scale, that is a retention problem with direct revenue impact that dwarfs analyst labor cost.
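A back-of-the-envelope version of this cost model is sketched below. The handle time (8-12 minutes) and closure rate (30-40%) come from the text; the 10,000 false blocks, the $1 per agent minute, and the $200 of annual revenue per customer are placeholder assumptions chosen only to make the arithmetic concrete.

```python
def false_block_cost(false_blocks, handle_minutes, churn_pct,
                     cost_per_minute, revenue_per_customer):
    """Return (contact center cost, revenue lost to account closures)."""
    contact_center = false_blocks * handle_minutes * cost_per_minute
    lost_revenue = false_blocks * churn_pct / 100 * revenue_per_customer
    return contact_center, lost_revenue


# Hypothetical inputs: 10,000 false blocks, $1.00 per agent minute,
# $200 annual revenue per customer.
low = false_block_cost(10_000, 8, 30, 1.0, 200.0)
high = false_block_cost(10_000, 12, 40, 1.0, 200.0)
```

With these placeholder inputs the lost-revenue term is several times the contact center term. Substituting an institution's own unit costs is the point of the exercise.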

The post Reducing False Positives: Rule-Based Systems vs. AI-Driven Solutions covers this cost model in detail, including how to quantify the business case for AI investment based on total cost of ownership.

Real-Time Fraud Detection: Why Speed Defines the Outcome

Real-time fraud detection is a technical constraint before it is a strategy choice. Card payment networks require authorization decisions in under 300 milliseconds. If your fraud scoring system cannot compute a result in that window, the transaction approves by default. There is no partial credit for a system that catches the fraud the following morning.

Real-Time Fraud Detection Banks Use in Production

Real-time fraud detection in card payment environments requires two architectural components: a feature store that serves pre-computed customer context at low latency, and a model inference engine that keeps scoring models in memory to avoid disk reads on every authorization request. Batch approaches, where transaction data is analyzed overnight and alerts generated the next morning, work for AML case management but not for card authorization. Payment fraud prevention requires a separate real-time infrastructure from retrospective AML monitoring. For teams rethinking both programs, the post on Detecting Synthetic Identity Fraud in Real-Time covers the infrastructure trade-offs in practical detail.
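The two components can be sketched in a few lines. This is an illustration under assumptions, not a production design: the dictionary stands in for a low-latency feature store, `load_model` returns a hand-written scoring function rather than a trained model, and the 0.8 decline threshold is hypothetical. The 300 ms budget is the figure quoted above.

```python
import time

# Stand-in for a feature store holding pre-computed customer context.
FEATURE_STORE = {
    "cust-1": {"avg_amount": 80.0, "txn_count_24h": 2},
}


def load_model():
    # Hypothetical scoring function; a real system would load trained weights.
    def model(features, amount):
        ratio = amount / max(features["avg_amount"], 1.0)
        return min(1.0, 0.1 * ratio + 0.05 * features["txn_count_24h"])
    return model


MODEL = load_model()  # loaded once at startup and kept in memory


def authorize(customer_id, amount, budget_ms=300.0):
    start = time.perf_counter()
    features = FEATURE_STORE.get(
        customer_id, {"avg_amount": 0.0, "txn_count_24h": 0}
    )
    score = MODEL(features, amount)
    elapsed_ms = (time.perf_counter() - start) * 1000
    if elapsed_ms > budget_ms:
        # Scoring missed the window, so the transaction goes through unscored.
        return "approve_by_default", elapsed_ms
    return ("decline" if score >= 0.8 else "approve"), elapsed_ms


large_decision, _ = authorize("cust-1", 1000.0)
normal_decision, _ = authorize("cust-1", 80.0)
```

Nothing on the request path touches disk or recomputes history: the features are looked up by key and the model is already resident. That is what keeps the work inside the authorization window.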

AI Fraud Detection Software: Capabilities That Actually Matter

Not all AI fraud detection software addresses synthetic identity fraud equally. When evaluating platforms for this specific threat, three capabilities separate adequate tools from effective ones.

Entity Resolution and Graph Analysis

The foundation of synthetic fraud detection is entity resolution: the ability to unify fragmented identity signals across every application, transaction, and device event into a coherent picture. When a new loan application shares a device ID with 15 prior applications, entity resolution surfaces that connection at application time, before any credit is extended. Graph analysis extends this by mapping relationship networks across all accounts. A single shared phone number connecting 12 accounts is a fraud signal that quantifies how large and coordinated the ring actually is.

For risk teams building this capability from scratch, the AI-Powered Fraud Detection Strategy for Risk Heads outlines how to integrate graph-based detection into an existing fraud operations framework without requiring a full platform replacement.

Behavioral Biometrics as a Detection Signal

Behavioral biometrics captures how users physically interact with digital interfaces: typing rhythm, mouse trajectory, touch pressure on mobile, session navigation patterns. Fraudsters running dozens of synthetic identities through automation scripts generate behavioral signatures that differ measurably from human customers. This signal is especially valuable at account opening and during the credit-building phase, precisely when synthetic identities are hardest to catch through transaction analysis alone. Platforms integrating behavioral biometrics into fraud scoring can flag synthetic accounts months before a bust-out attempt, which is the only intervention that actually prevents the loss.
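One simple behavioral signal is how regular the gaps between keystrokes are. The sketch below computes the coefficient of variation of inter-key intervals and flags sessions that are suspiciously uniform. The `min_cv` cut-off of 0.15 and the sample timings are assumptions for illustration; production systems combine many more signals than this.

```python
from statistics import mean, pstdev


def keystroke_cv(timestamps_ms):
    """Coefficient of variation of the gaps between consecutive keystrokes.

    Needs at least two timestamps; fewer raises StatisticsError.
    """
    intervals = [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]
    return pstdev(intervals) / mean(intervals)


def looks_scripted(timestamps_ms, min_cv=0.15):
    # Human typing is irregular; near-constant gaps suggest automation.
    return keystroke_cv(timestamps_ms) < min_cv


human = [0, 140, 390, 480, 760, 900, 1250]   # made-up human-like timings
script = [0, 50, 100, 150, 200, 250, 300]    # made-up fixed-delay script
```

A fixed-delay script produces a coefficient of variation of zero, while the human-like sample varies widely. A scripted session with randomized delays would need additional features to catch.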

Sardine vs Unit21: A Practical Platform Comparison

The Sardine vs. Unit21 comparison surfaces frequently in enterprise fraud tool evaluations. Both platforms are purpose-built for financial services but address different parts of the problem.

Sardine focuses on fraud at account opening and transaction authorization. Its graph-based entity resolution and behavioral biometrics are strong for synthetic identity fraud detection, and real-time decisioning runs sub-100ms in production. Unit21 is built around AML case management and automated transaction monitoring workflows. Its rule engine handles complex AML scenarios well, and its analyst interface is designed for investigation workflows rather than real-time decisioning.

| Feature | Sardine | Unit21 |
|---|---|---|
| Synthetic identity detection | Strong (behavioral + graph) | Moderate (rule + ML) |
| Real-time decisioning | Sub-100ms | ~200ms |
| AML transaction monitoring | Limited | Strong |
| Best fit | Fintechs, digital banks | Banks with AML workloads |
| Pricing model | Usage-based | Seat + transaction volume |

Transaction monitoring cost differs meaningfully between them. Sardine's usage-based pricing scales predictably with transaction volume. Unit21's hybrid model grows expensive as analyst seat counts increase during investigation surges. Neither platform alone closes the full gap. Banks serious about synthetic identity detection need capabilities spanning account opening, the credit-building phase, and real-time authorization.

For a broader view of how AI-based approaches differ from legacy systems, AI vs. Traditional Fraud Detection: Key Differences Every Risk Officer Should Know provides the clearest framework available.

How to Reduce False Positives in Transaction Monitoring

Reducing false positives without trading away detection accuracy is the goal every compliance head is chasing. These are the approaches that produce durable results.

How to Reduce False Positives in AML: Segmentation and Feedback

The first intervention is customer risk segmentation. Applying a single alert threshold to all customer accounts is the root cause of most false positive volume. A first-year retail checking customer should not generate alerts at the same threshold as a high-net-worth wire transfer account. Risk-adjusted thresholds reduce false positive volume by 30-50% in most deployments without changing detection rates for genuinely suspicious activity.
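A minimal sketch of risk-adjusted thresholds follows. The segment names and dollar limits are hypothetical, and a single amount threshold is a deliberate simplification of what a real alerting rule would check.

```python
# Hypothetical per-segment alert thresholds, in dollars.
SEGMENT_THRESHOLDS = {"retail_new": 2_000, "high_net_worth": 50_000}
GLOBAL_THRESHOLD = 2_000  # the single threshold applied to every account


def alerts(transactions, segmented):
    """Return ids of transactions that exceed the applicable threshold."""
    flagged = []
    for txn in transactions:
        limit = SEGMENT_THRESHOLDS[txn["segment"]] if segmented else GLOBAL_THRESHOLD
        if txn["amount"] > limit:
            flagged.append(txn["id"])
    return flagged


transactions = [
    {"id": "t1", "segment": "retail_new", "amount": 2_500},
    {"id": "t2", "segment": "high_net_worth", "amount": 20_000},
    {"id": "t3", "segment": "high_net_worth", "amount": 75_000},
    {"id": "t4", "segment": "retail_new", "amount": 500},
]
global_alerts = alerts(transactions, segmented=False)
segment_alerts = alerts(transactions, segmented=True)
```

The routine $20,000 wire from the high-net-worth account stops alerting, while the unusual transactions in both segments still do.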

The second, higher-leverage intervention is a structured analyst feedback loop. Every time an analyst dispositions an alert as not fraud, that information should update the model's understanding of legitimate behavior for that customer segment. Teams maintaining clean feedback pipelines see false positive rates decline 50-70% within six months. As documented in How Agentic AI Fraud Agents Cut False Positives by 80%, the feedback loop architecture is the single most impactful implementation decision for long-term false positive reduction.

Reducing False Positives in Transaction Monitoring with Contextual Scoring

Most false positives occur because a single transaction looks anomalous in isolation. A $5,000 hotel charge in a foreign country looks suspicious without context. With context (the customer travels internationally every July, the device matches their regular mobile app, the billing address is unchanged), it scores as low risk. Contextual scoring models that incorporate historical behavior, device continuity, and session context cut the rate at which legitimate activity triggers alerts.
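The hotel example can be expressed as a small scoring function. The weights, field names, and the 3x-average rule below are invented for illustration; a trained model would learn these relationships rather than hard-code them.

```python
def contextual_score(txn, profile):
    """Toy risk score: anomalies add risk, matching customer context removes it."""
    score = 0.0
    if txn["amount"] > 3 * profile["avg_amount"]:
        score += 0.4  # unusually large for this customer
    if txn["country"] != profile["home_country"]:
        score += 0.4  # foreign transaction
    if txn["month"] in profile["travel_months"]:
        score -= 0.3  # customer usually travels this month
    if txn["device_id"] in profile["known_devices"]:
        score -= 0.3  # regular device
    if txn["billing_address"] == profile["billing_address"]:
        score -= 0.1  # billing address unchanged
    return max(0.0, round(score, 2))


hotel_charge = {
    "amount": 5000, "country": "FR", "month": 7,
    "device_id": "phone-1", "billing_address": "12 Elm St",
}
with_context = {
    "avg_amount": 400, "home_country": "US", "travel_months": {7},
    "known_devices": {"phone-1"}, "billing_address": "12 Elm St",
}
# Same customer, but with no history, device, or address context available.
no_context = {
    "avg_amount": 400, "home_country": "US", "travel_months": set(),
    "known_devices": set(), "billing_address": None,
}
isolated = contextual_score(hotel_charge, no_context)
informed = contextual_score(hotel_charge, with_context)
```

The same charge scores high when evaluated in isolation and low once travel history, device continuity, and billing address are taken into account.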

Payment fraud prevention programs that build individual customer context rather than applying population-level thresholds consistently outperform on both detection accuracy and false positive rates. For compliance teams handling identity verification alongside fraud signals, the KYC/AML identity verification strategy for CISOs provides an integrated framework for combining these data sources. The Consumer Financial Protection Bureau also maintains updated guidance on fraud reporting obligations that informs how institutions should structure alert escalation workflows.


Conclusion

Synthetic identity fraud detection requires a fundamentally different approach than the rule-based transaction monitoring software most banks currently operate. These identities are engineered to look clean during their most vulnerable phase. By the time traditional systems generate an alert, the bust-out has already happened. Machine learning fraud detection built on entity resolution, graph analysis, and behavioral biometrics catches these schemes during the credit-building phase when intervention is still possible.

The false positive problem is equally consequential. Fraud alert fatigue costs compliance teams millions in analyst labor and erodes detection quality as teams grow numb to noise. AI systems using contextual scoring and structured feedback loops deliver measurably lower false positive rates without sacrificing detection accuracy.

If your current program is catching synthetic fraud after the loss, or drowning your compliance team in false positives from automated transaction monitoring rules, those are solvable problems. The technology exists. The question is whether your fraud detection strategy is built to use it.

Frequently Asked Questions

AI fraud detection is the use of machine learning algorithms, behavioral analytics, and graph network analysis to identify fraudulent transactions, account applications, and suspicious activity in real time. Unlike rule-based systems that flag predefined patterns, AI systems learn from data to detect novel fraud signals, including those produced by synthetic identities during their credit-building phase before a bust-out occurs.

AI detects fraud by analyzing hundreds of data signals simultaneously: transaction amounts, behavioral biometrics, device fingerprints, network relationships between accounts, and historical behavior patterns. The system assigns a fraud probability score to each event, typically in under 100 milliseconds, and routes high-risk events to analysts or automated blocking rules while allowing low-risk events to pass without friction.

AI fraud detection in banking refers to platforms deployed by banks and fintechs to score transactions, applications, and account activity for fraud risk in real time. These systems use supervised and unsupervised machine learning to identify synthetic identities, account takeover attempts, first-party fraud, and payment fraud. They replace or augment legacy rule-based transaction monitoring tools that generate high false positive rates.

AI fraud detection software is a platform combining machine learning scoring, entity resolution, behavioral biometrics, and case management into a unified system for financial institutions. Examples include Sardine, which is strong for account opening and real-time decisioning, and Unit21, which is strong for AML case management and transaction monitoring workflows. The most effective platforms close the feedback loop between analyst decisions and ongoing model retraining.

Machine learning fraud detection uses statistical models trained on historical transaction data to predict whether new activity is fraudulent. Supervised models classify events based on labeled training data. Unsupervised models identify behavioral clusters and outliers without labeled examples, making them effective against new fraud typologies like synthetic identity bust-outs that do not match historical fraud patterns.

Real-time fraud detection is the process of scoring each transaction or account event for fraud risk within milliseconds, before the financial action completes. For card payments, this means generating a fraud decision within the 300-millisecond authorization window required by payment networks. It requires in-memory model inference and pre-computed customer feature stores to achieve the required latency consistently.

The false positive rate in fraud detection, as the term is used in alert review, measures the proportion of alerts that turn out to be legitimate activity incorrectly flagged as fraudulent. Legacy rule-based systems typically produce false positive rates of 80-95%, meaning up to 95 out of every 100 alerts require analyst review but turn out not to be fraud. AI-driven systems reduce this rate to 10-30% in production deployments, significantly reducing analyst workload and customer friction from blocked legitimate transactions.
