
Fraud-as-a-Service marketplaces on the dark web have turned financial crime from a specialized skill into a commodity anyone can purchase with cryptocurrency. What once required technical expertise, criminal networks, and months of preparation can now cost less than a Netflix subscription and be delivered within 48 hours. Organized crime groups have built genuine businesses around attack-kit distribution, and their customers are targeting your accounts right now.

This post breaks down how FaaS networks operate, what makes them so effective against legacy defenses, and which detection strategies actually stop them.

What Is Fraud-as-a-Service? The Dark Web Economy Explained

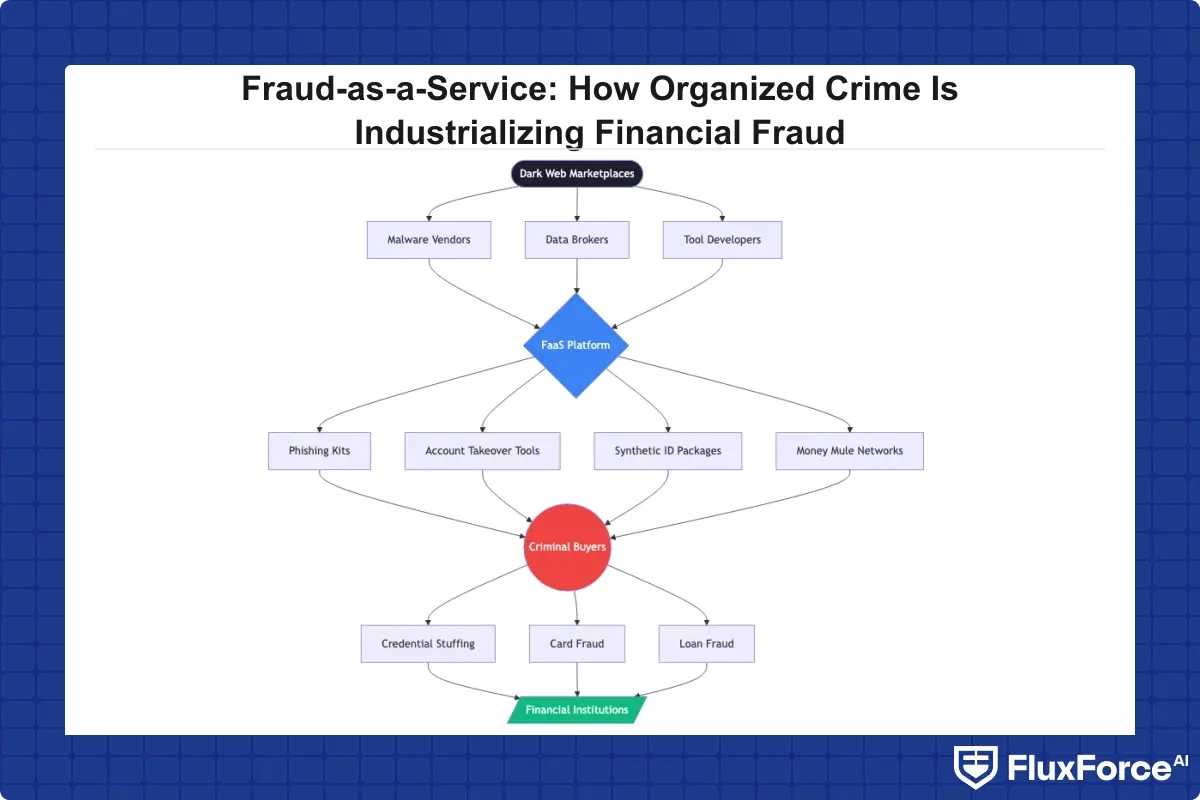

Fraud-as-a-Service (FaaS) is a criminal business model where organized crime groups sell prebuilt fraud tools, stolen data, and operational support to other criminals via dark web marketplaces. The buyer gets everything needed to launch an attack: identity packages, malware kits, money mule networks, and step-by-step tutorials.

The economics are brutal. A full synthetic identity package sells for $10-$25 on major dark web forums. Phishing kits targeting specific banks rent for $200-$500 per month. Account takeover toolkits, complete with session hijacking scripts and anti-detection layers, run $1,000-$3,000 for a "premium" license.

How FaaS Networks Operate on the Dark Web

FaaS vendors operate with the professionalism of legitimate SaaS companies. They post customer reviews, offer tiered pricing, guarantee uptime on their credential-stuffing infrastructure, and even provide refunds for data that does not work. According to Europol's Internet Organised Crime Threat Assessment, dark web service models have become the dominant distribution channel for cybercrime tools, with forum-based reputation systems functioning much like Amazon seller ratings.

The operational structure mirrors a technology company. There are product developers who build and maintain the tools, sales teams managing customer acquisition, support staff handling buyer inquiries, and quality assurance processes verifying that stolen data is fresh and usable.

What Products and Services FaaS Vendors Sell

The FaaS catalog covers the full attack lifecycle:

- Identity packages: Full credit profiles with SSNs, dates of birth, and address histories for synthetic identity fraud construction

- Account takeover kits: Credential stuffing tools pre-loaded with billions of username/password pairs

- Phishing infrastructure: Hosted phishing pages, SMS spoofing services, and vishing scripts targeting specific institutions

- Money mule networks: Cash-out services where FaaS operators handle fund movement for a percentage cut

- Tutorial services: Paid training on how to use the tools without triggering fraud controls

How Organized Crime Builds FaaS Networks on the Dark Web

The shift from opportunistic fraud to industrialized Fraud-as-a-Service operations on the dark web represents a fundamental change in threat economics. Criminal organizations have applied supply chain thinking to financial crime, and the results are hard to overstate. Attack kits update faster than compliance teams can tune rules, and the people executing attacks are often several steps removed from the people who built the tools.

The Supply Chain Behind Modern Financial Crime

Traditional fraud required a criminal to personally acquire data, build tools, execute the attack, and launder proceeds. FaaS disaggregates every step. One group specializes in data breaches. Another processes that data into usable identity packages. A third builds the attack infrastructure. A fourth executes the attacks. A fifth handles cash-out. Each group is an expert in its narrow function, making the overall operation far more efficient than any individual actor could achieve.

This specialization has real consequences for detection. When the data breach, the identity construction, the account opening, and the eventual fraud event are separated by months and performed by different actors, tracing a causal chain becomes extremely difficult for compliance teams using conventional monitoring approaches.

Synthetic Identity Fraud: The Backbone of FaaS Attacks

Synthetic identity fraud is the linchpin of most FaaS operations. Unlike traditional identity theft where a real person's credentials are stolen wholesale, synthetic fraud combines real data elements, often a real Social Security number belonging to a child or elderly person, with fabricated information to create a plausible but non-existent identity.

These synthetic identities are then "credit-washed" over 12-24 months, building credit profiles that pass most KYC checks. According to the Federal Reserve's report on synthetic identity fraud, this category costs U.S. financial institutions over $6 billion annually, making it the fastest-growing financial crime in the country. The identity sits dormant until the fraudster chooses to "bust out," maxing all available credit lines in a single coordinated event before disappearing entirely.

Our post on detecting synthetic identity fraud in real-time covers the specific behavioral signals that differentiate synthetic identities from legitimate thin-file customers.

The Five Core Components of a FaaS Operation

Understanding the FaaS architecture helps compliance teams identify where detection opportunities exist. Every FaaS operation, regardless of the target institution or attack vector, is built on five functional layers.

- Data acquisition layer: Stolen databases, skimmed card data, and breached credentials purchased on dark web forums or obtained directly through phishing and malware campaigns targeting staff at financial institutions

- Identity manufacturing layer: Combination of real and fabricated data elements into usable synthetic profiles, followed by credit washing through thin-file credit-building schemes that can take one to two years before activation

- Attack infrastructure layer: Hosting for phishing pages, bot networks for credential stuffing, device emulators to evade device fingerprinting controls, and residential proxy networks to mask IP reputation signals

- Execution layer: The actual fraud events, whether account takeover, new account fraud, loan fraud, or payment fraud, coordinated across multiple synthetic identities to maximize impact before detection

- Cash-out and laundering layer: Money mule networks, cryptocurrency mixers, and merchant fraud schemes that convert stolen funds to clean currency and move them beyond jurisdictional reach

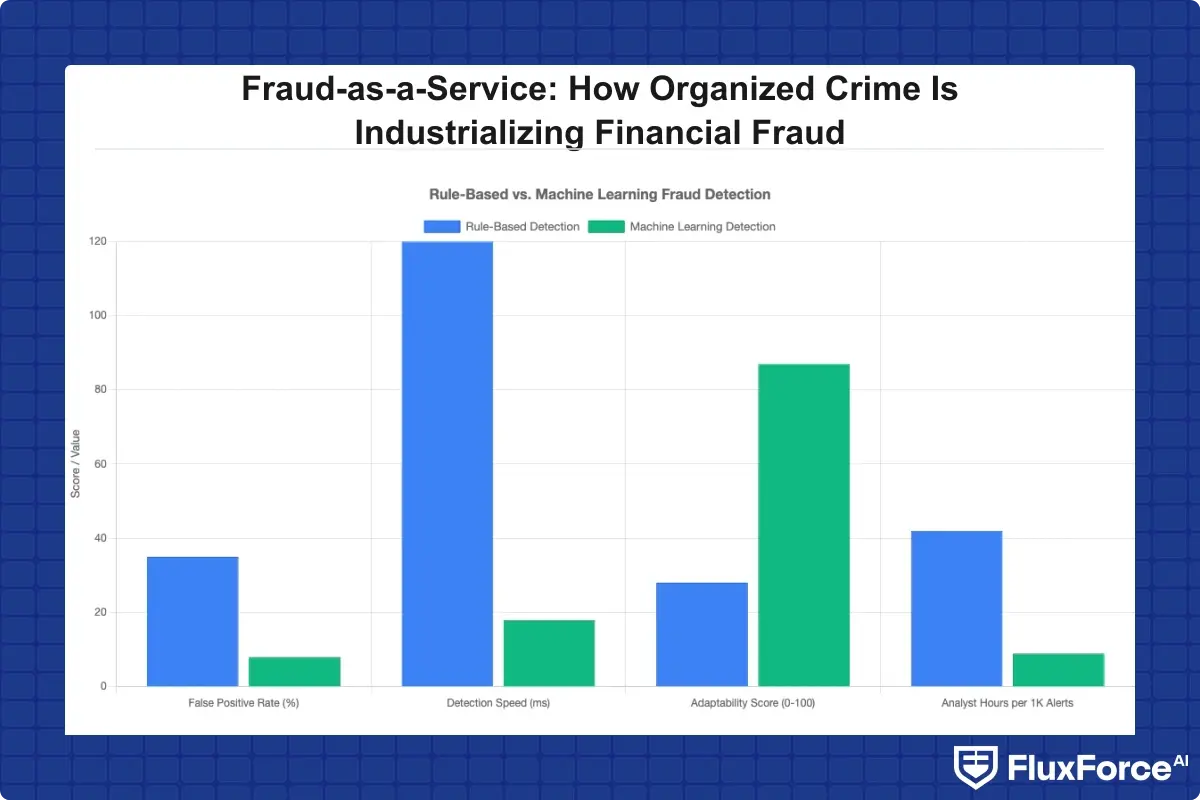

Each layer presents distinct detection opportunities. Rule-based systems tend to catch fraud at the execution layer, often after losses are already realized. Behavioral AI catches signals at the identity manufacturing and attack infrastructure layers, before the fraudster completes the attack sequence.

How Does AI Detect Fraud in Real Time?

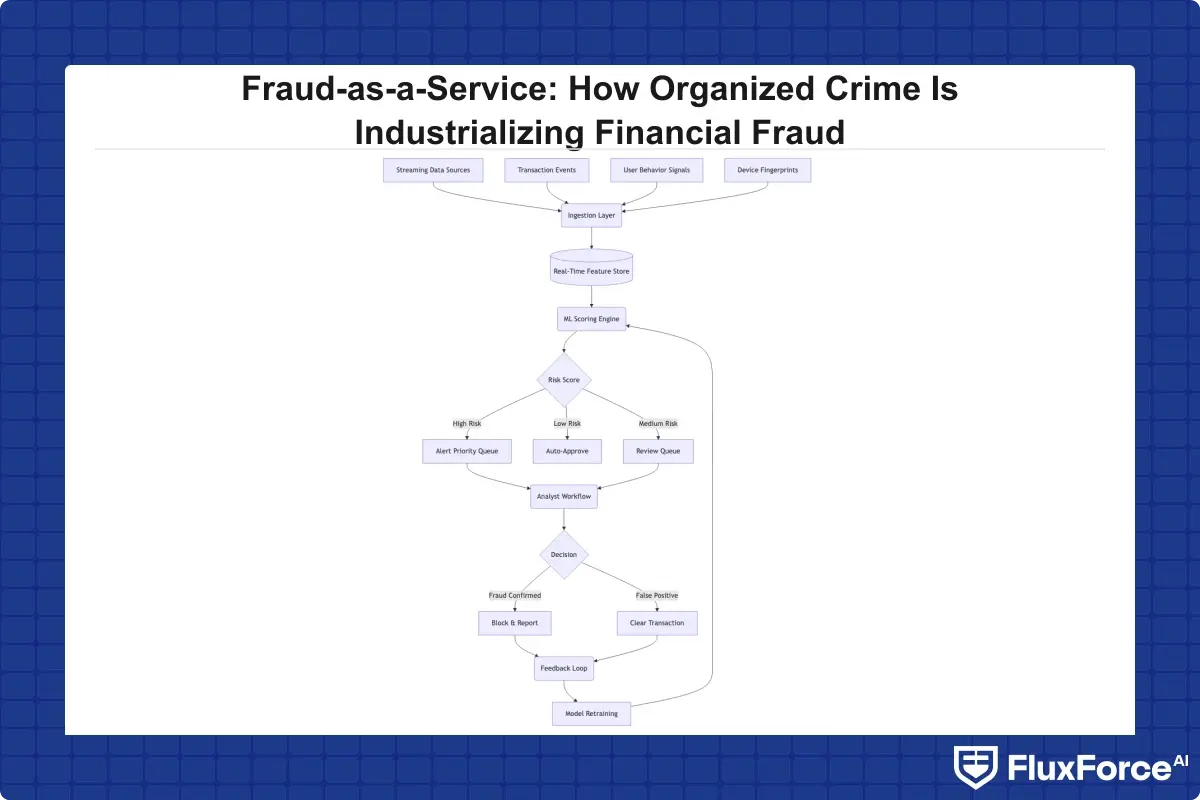

AI fraud detection works by analyzing hundreds of behavioral, transactional, and contextual signals simultaneously to identify anomalous patterns that no human analyst or static rule set could process at transaction speed. In banking, this means models trained on billions of historical transactions develop a statistical fingerprint of normal behavior for each customer segment, then flag anything that diverges meaningfully from that baseline.

Machine Learning Fraud Detection Models

Machine learning fraud detection uses several model types depending on the specific problem being solved:

- Supervised models (gradient boosting, neural networks) trained on labeled fraud/non-fraud transaction histories to score new transactions in real time

- Unsupervised models (clustering, autoencoders) that identify unusual patterns without pre-labeled training data, particularly useful for detecting novel FaaS attack patterns not present in historical data

- Graph neural networks that map relationships between accounts, devices, IP addresses, and merchants to surface fraud rings operating through multiple synthetic identities simultaneously

- Sequence models (LSTMs, transformers) that detect behavioral anomalies across a customer's transaction history over time rather than evaluating each transaction in isolation

The key advantage of machine learning over rule-based systems is adaptability. FaaS vendors update their attack kits regularly to probe and avoid known detection signatures. Machine learning models that retrain on new data adapt to these changes continuously; static rule libraries do not.
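To make the relationship-mapping idea concrete, here is a minimal sketch of how accounts that share devices or IP addresses can be grouped into candidate rings. It is a deliberate simplification: it uses plain connected components (union-find), not a graph neural network, and the account IDs, attribute strings, and `min_size` cutoff are all illustrative assumptions.

```python
from collections import defaultdict

def find_rings(account_attributes, min_size=3):
    """Group accounts that share any attribute (device, IP, ...) via union-find.

    account_attributes: dict mapping account id -> set of attribute strings.
    Returns clusters with at least `min_size` accounts (hypothetical cutoff).
    """
    parent = {}

    def find(x):
        # Walk to the root, shortening the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    first_seen = {}  # attribute -> first account observed with it
    for account, attrs in account_attributes.items():
        parent.setdefault(account, account)
        for attr in attrs:
            if attr in first_seen:
                union(first_seen[attr], account)
            else:
                first_seen[attr] = account

    clusters = defaultdict(set)
    for account in account_attributes:
        clusters[find(account)].add(account)
    return [sorted(m) for m in clusters.values() if len(m) >= min_size]

# Illustrative data only: three accounts chained by a shared device and a shared IP.
accounts = {
    "acct_1": {"device:A", "ip:10.0.0.1"},
    "acct_2": {"device:A", "ip:10.0.0.2"},
    "acct_3": {"device:B", "ip:10.0.0.2"},
    "acct_4": {"device:C", "ip:10.0.0.9"},
}
rings = find_rings(accounts)
print(rings)
```

Note that `acct_1` and `acct_3` share nothing directly; they are linked only through `acct_2`, which is the kind of indirect connection that per-account review tends to miss. A production system would also need to discount attributes that are legitimately shared, such as a corporate NAT address.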

Real-Time Fraud Detection in Banks

Real-time fraud detection in banks requires delivering a risk score within 100 milliseconds, which is the typical latency budget for payment authorization. Most real-time fraud detection systems achieve this through pre-computed risk scores updated continuously in the background, rather than running full model inference at transaction time.

The architecture typically involves a streaming data layer, a feature store that maintains pre-computed entity features at millisecond latency, and a scoring API that combines those features with transaction-specific signals at decision time. NIST's AI Risk Management Framework provides a useful structure for evaluating whether your real-time scoring architecture meets reliability and fairness standards before deployment.
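As a rough illustration of that split between pre-computed entity features and decision-time signals, the sketch below uses an in-memory dictionary as a stand-in for a feature store. The feature names, weights, and thresholds are invented for the example and are not taken from any specific platform; a real deployment would call a trained model rather than hand-written rules.

```python
import time

# Hypothetical stand-in for a feature store: entity id -> features that a
# background streaming job would keep up to date.
FEATURE_STORE = {
    "cust_42": {"avg_amount_30d": 120.0, "txn_count_24h": 3, "known_devices": {"dev_1"}},
}
DEFAULT_FEATURES = {"avg_amount_30d": 0.0, "txn_count_24h": 0, "known_devices": set()}

def score_transaction(txn, budget_ms=100.0):
    """Combine pre-computed entity features with transaction-time signals."""
    start = time.perf_counter()
    features = FEATURE_STORE.get(txn["customer_id"], DEFAULT_FEATURES)

    score = 0.0
    avg = features["avg_amount_30d"]
    if avg == 0 or txn["amount"] > 5 * avg:   # no history, or amount far above baseline
        score += 0.5
    if txn["device_id"] not in features["known_devices"]:  # unfamiliar device
        score += 0.3
    if features["txn_count_24h"] > 20:        # unusual velocity
        score += 0.2

    elapsed_ms = (time.perf_counter() - start) * 1000
    return {
        "score": round(score, 2),
        "elapsed_ms": elapsed_ms,
        "within_budget": elapsed_ms <= budget_ms,
    }

risky = score_transaction({"customer_id": "cust_42", "amount": 900.0, "device_id": "dev_9"})
routine = score_transaction({"customer_id": "cust_42", "amount": 100.0, "device_id": "dev_1"})
print(risky["score"], routine["score"])
```

The point of the design is that the expensive aggregation (30-day averages, 24-hour counts) happens before the transaction arrives, so the decision path is a lookup plus a few comparisons.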

For a deeper look at how AI fraud detection software integrates with card payment workflows, see our analysis of AI-powered fraud detection strategy for risk heads.

Why Traditional Systems Fail: Fraud Alert Fatigue and False Positives

The honest answer to why legacy systems struggle against FaaS is that rule-based transaction monitoring software was designed for a different threat environment. Rules work when fraud patterns are stable and known. FaaS tools are specifically engineered to probe rule logic and stay just below trigger thresholds, often by testing attacks in small volumes before scaling.

The practical result is fraud alert fatigue. Analysts who review thousands of alerts per day, most of which they recognize immediately as false positives, lose the ability to concentrate on genuine threats. When 95% of alerts are wrong, the 5% that represent real fraud start getting dismissed as noise alongside everything else.

The Cost of False Positives in Fraud Detection

The false positive cost that fraud teams absorb is enormous and consistently underreported. For every dollar of fraud prevented, financial institutions typically spend two to three dollars managing false positive alerts, declined legitimate transactions, and customer service fallout from incorrectly flagged accounts. The false positive rate a fraud detection team accepts is often a business decision made under conflicting pressures: set rules too loose and fraud losses rise; set them too tight and analyst costs, customer friction, and transaction monitoring cost all increase simultaneously.

Neither outcome is sustainable. A high false positive rate in fraud detection is not just an efficiency problem. It is a risk problem, because analyst fatigue directly increases the probability that a genuine fraud event gets dismissed alongside the noise.

How to Reduce False Positives in AML

Reducing false positives in AML starts with understanding why they occur. Most false positives come from three sources: thresholds calibrated to the wrong population baseline, missing contextual signals that would distinguish a genuine anomaly from a legitimate behavioral deviation, and absent entity-level linking that makes separate accounts appear suspicious in isolation when they actually represent a known customer pattern.

Reduce false positives in transaction monitoring with these five steps:

- Build behavioral baselines per customer segment rather than applying universal dollar thresholds across all account types

- Use ML-generated risk scores to prioritize alert queues so analysts work the highest-confidence cases first, not first-in-first-out

- Route analyst dispositions back into model training data so every "not fraud" decision improves future scoring precision

- Implement entity resolution to link accounts, devices, and beneficiaries across your portfolio and identify patterns invisible at the individual account level

- Add device fingerprinting and behavioral biometrics to transaction data before scoring to give models richer signal than ledger data alone provides
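The queue-prioritization step in the list above can be sketched with a priority queue. This is a minimal illustration, assuming each alert already carries a model-generated `risk_score` field (a hypothetical name); it shows only the ordering logic, not the scoring model or the feedback loop.

```python
import heapq

def prioritize(alerts):
    """Yield alerts highest risk score first; ties keep arrival order."""
    # heapq is a min-heap, so the score is negated. The arrival index breaks
    # ties and keeps Python from ever comparing the alert dicts themselves.
    heap = [(-alert["risk_score"], index, alert) for index, alert in enumerate(alerts)]
    heapq.heapify(heap)
    while heap:
        _, _, alert = heapq.heappop(heap)
        yield alert

# Illustrative alerts in arrival (first-in-first-out) order.
alerts = [
    {"id": "A1", "risk_score": 0.12},
    {"id": "A2", "risk_score": 0.91},
    {"id": "A3", "risk_score": 0.47},
    {"id": "A4", "risk_score": 0.91},
]
order = [alert["id"] for alert in prioritize(alerts)]
print(order)
```

Under first-in-first-out handling the analyst would open `A1`, the lowest-risk alert, first; with score ordering the two 0.91 alerts are worked before anything else.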

Our post on how agentic AI fraud agents cut false positives by 80% covers specific implementation patterns that have worked at scale for mid-market and enterprise financial institutions.

Automated Transaction Monitoring vs. Rule-Based Systems

The choice between automated transaction monitoring and rule-based approaches shapes how effectively your institution responds to FaaS attacks. Rule-based systems are deterministic and auditable. Regulators understand them, and compliance teams can explain every alert rationale to an examiner without difficulty. The problem is brittleness: one parameter change by a FaaS vendor's developers can invalidate months of rule tuning overnight.

Automated transaction monitoring using machine learning adds a behavioral dimension that rules cannot capture. A customer who normally initiates ACH transfers of $2,000-$5,000 to two regular counterparties and then suddenly initiates 15 transfers of $9,800 each to new beneficiaries should score as high-risk regardless of whether any specific rule covers that exact pattern. The behavioral deviation is the signal, and ML models catch it without requiring a rule to have anticipated it.
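The ACH scenario above can be worked through with a small sketch that compares recent transfers against the customer's own history. It is a simple statistical stand-in for what an ML model would learn, not a model itself; the transfer histories, beneficiary names, and the choice of a z-score are assumptions made for illustration.

```python
from statistics import mean, stdev

def deviation_signals(history, recent):
    """Compare recent transfers with a customer's own baseline.

    history, recent: lists of (amount, beneficiary) tuples.
    history needs at least two transfers so a standard deviation exists.
    """
    amounts = [amount for amount, _ in history]
    baseline_mean, baseline_sd = mean(amounts), stdev(amounts)
    known = {beneficiary for _, beneficiary in history}

    recent_mean = mean(amount for amount, _ in recent)
    if baseline_sd:
        z = (recent_mean - baseline_mean) / baseline_sd
    else:
        # Perfectly constant history: any change at all is off-baseline.
        z = 0.0 if recent_mean == baseline_mean else float("inf")

    recent_beneficiaries = {beneficiary for _, beneficiary in recent}
    new_share = len(recent_beneficiaries - known) / len(recent_beneficiaries)
    return {"amount_z": z, "new_beneficiary_share": new_share, "count": len(recent)}

# Baseline: transfers of $2,000-$5,000 to two regular counterparties.
history = [(2000, "cp_a"), (3500, "cp_b"), (5000, "cp_a"),
           (3000, "cp_b"), (4000, "cp_a"), (2500, "cp_b")]
# Sudden change: 15 transfers of $9,800, each to a new beneficiary.
recent = [(9800, f"new_{i}") for i in range(15)]

signals = deviation_signals(history, recent)
print(signals)
```

No rule mentions $9,800 or a count of 15 here. The amount sits several standard deviations above this customer's baseline and every beneficiary is new, which is the behavioral deviation the paragraph describes.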

Transaction Monitoring Software Selection Criteria

When evaluating transaction monitoring software, compliance and risk teams should pressure-test vendors on these specific questions:

- Can the system explain why a specific transaction scored high risk in terms an examiner can verify independently?

- How frequently does the model retrain to incorporate new fraud patterns emerging from the dark web?

- Does the platform ingest device, behavioral, and network signals, or only transaction ledger data?

- What is the documented false positive rate at your volume tier, and how is it measured across alert categories?

- Does the alert logic cover BSA/AML, sanctions, and payment fraud simultaneously or through separate rule sets that require separate tuning?

When comparing platforms like Sardine vs Unit21, the key differentiator is typically the depth of behavioral signal ingestion rather than the breadth of rule libraries. Both platforms offer strong rule management, but their approaches to third-party data enrichment and real-time behavioral scoring differ significantly.

Transaction Monitoring Cost Considerations

Transaction monitoring cost varies significantly between approaches. Rule-based systems carry lower initial implementation costs but higher ongoing operational costs: more analyst headcount, more tuning cycles, and higher rates of missed fraud generating loss provisions that accumulate quietly. Automated monitoring costs more upfront in integration and model training but reduces per-alert cost by 40-60% through improved precision at scale.

The break-even point for most mid-market institutions is 18-24 months post-deployment. For institutions where payment fraud prevention is a primary concern, the total cost of ownership calculation must include fraud losses avoided, not just licensing fees. Purpose-built fraud detection software with integrated behavioral scoring typically delivers better precision at volume than general-purpose monitoring tools bolted onto legacy infrastructure. Vendor differences in API integration depth and third-party data enrichment directly affect both precision and transaction monitoring cost at scale.
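A break-even estimate of this kind is simple cumulative-cost arithmetic. The sketch below shows the calculation only; every dollar figure in it is invented for illustration, and the monthly figures are assumed to bundle licensing, analyst time, and expected fraud losses so that losses avoided are part of the comparison.

```python
def break_even_month(upfront_a, monthly_a, upfront_b, monthly_b, horizon=60):
    """Return the first month where option B's cumulative cost is at or
    below option A's, or None if that never happens within `horizon` months."""
    for month in range(1, horizon + 1):
        cost_a = upfront_a + monthly_a * month
        cost_b = upfront_b + monthly_b * month
        if cost_b <= cost_a:
            return month
    return None

# Hypothetical figures, not benchmarks.
rule_based = {"upfront": 200_000, "monthly": 150_000}    # cheaper to start, costlier to run
automated = {"upfront": 1_000_000, "monthly": 110_000}   # costlier to start, cheaper to run

month = break_even_month(
    rule_based["upfront"], rule_based["monthly"],
    automated["upfront"], automated["monthly"],
)
print(month)
```

With these made-up inputs the extra $800,000 of upfront cost is recovered at $40,000 per month, so the lines cross at month 20. Plugging in your own figures is the only way to know where your institution lands.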

What Financial Institutions Should Do Now Against FaaS Attacks

FaaS operations move faster than annual risk assessment cycles. The institutions that contain losses are those with monitoring systems that adapt at the same speed as the threat, not those with the most comprehensive rule libraries.

Immediate Tactical Steps

Three actions you can take this week without waiting for a technology procurement cycle:

- Audit your current rule library for rules that have not fired in 90 days. Dormant rules indicate either a detection gap in your coverage or a redundancy consuming analyst capacity with no measurable return.

- Review your alert-to-SAR conversion rate by alert type. Alert types with under 1% conversion are generating noise. Calibrate thresholds or retire those rules entirely.

- Pull your false positive rate by customer segment. FaaS attacks cluster by target profile, and segments with high false positive rates often signal an active attack pattern your rules are catching with poor precision.
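The three checks above can be run against an alert export with a few lines of analysis. This is a minimal sketch assuming a hypothetical record layout (`rule_id`, `alert_type`, `segment`, `fired_on`, `sar_filed`, `false_positive`); real case-management exports will use different field names, and the sample data is synthetic.

```python
from collections import defaultdict
from datetime import date, timedelta

def audit(alerts, rule_ids, today, dormant_days=90, min_conversion=0.01):
    """Return (dormant rules, low-conversion alert types, FP rate by segment)."""
    cutoff = today - timedelta(days=dormant_days)
    last_fired = {}
    by_type = defaultdict(lambda: [0, 0])     # alert_type -> [alerts, SARs filed]
    by_segment = defaultdict(lambda: [0, 0])  # segment -> [alerts, false positives]
    for a in alerts:
        previous = last_fired.get(a["rule_id"], a["fired_on"])
        last_fired[a["rule_id"]] = max(previous, a["fired_on"])
        by_type[a["alert_type"]][0] += 1
        by_type[a["alert_type"]][1] += a["sar_filed"]
        by_segment[a["segment"]][0] += 1
        by_segment[a["segment"]][1] += a["false_positive"]

    # Rules that never fired, or last fired before the cutoff date.
    dormant = sorted(r for r in rule_ids if r not in last_fired or last_fired[r] < cutoff)
    # Alert types whose alert-to-SAR conversion is below the threshold.
    noisy = sorted(t for t, (n, sars) in by_type.items() if sars / n < min_conversion)
    fp_rate = {s: fp / n for s, (n, fp) in by_segment.items()}
    return dormant, noisy, fp_rate

# Synthetic sample data for illustration.
today = date(2025, 6, 30)
alerts = (
    [{"rule_id": "R1", "alert_type": "velocity", "segment": "retail",
      "fired_on": date(2025, 6, 1), "sar_filed": i == 0, "false_positive": i != 0}
     for i in range(200)]
    + [{"rule_id": "R2", "alert_type": "structuring", "segment": "smb",
        "fired_on": date(2025, 6, 15), "sar_filed": i < 4, "false_positive": i >= 4}
       for i in range(10)]
    + [{"rule_id": "R3", "alert_type": "structuring", "segment": "smb",
        "fired_on": date(2025, 1, 10), "sar_filed": False, "false_positive": True}]
)
dormant, noisy, fp_rate = audit(alerts, ["R1", "R2", "R3", "R4"], today)
print(dormant, noisy, fp_rate)
```

In this sample, `R3` last fired in January and `R4` never fired, so both are flagged as dormant, and the `velocity` alert type converts at 0.5%, below the 1% line.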

Strategic Investment Priorities

Institutions building durable defenses against Fraud-as-a-Service operations are prioritizing three investments over the next 12-24 months:

- Behavioral analytics layers that model customer activity patterns over weeks and months, not just individual transactions evaluated in isolation

- Cross-institution data sharing through programs like FinCEN's 314(b) voluntary information sharing program, which allows institutions to share information about suspected money laundering and fraud activities across organizational boundaries

- Orchestration platforms that connect fraud signals from KYC, automated transaction monitoring, device intelligence, and behavioral biometrics into a single risk decision rather than siloed alert streams

See how AI-powered approaches are reshaping compliance architecture in our analysis of zero trust and agentic AI in banking security, and how the shift from rule-based to ML-based approaches plays out operationally in our breakdown of AI vs. traditional fraud detection methods.


Conclusion

Fraud-as-a-Service operations on the dark web have industrialized financial crime to a degree that manual processes and static rule sets cannot match. The criminal supply chains selling synthetic identity packages, account takeover kits, and money mule networks are more organized than many fraud teams realize, and they update their methods faster than rule-based systems can adapt.

Institutions that contain FaaS losses share three characteristics: they use automated transaction monitoring with behavioral AI, they run disciplined programs for reducing false positives and improving analyst efficiency, and they treat payment fraud prevention as a continuous operational function rather than a periodic audit cycle. The technology to defend against these threats exists today.

If your current transaction monitoring software was tuned more than 12 months ago without model retraining, assume that some FaaS attack patterns are passing through undetected. Start with a false positive audit by customer segment, review alert conversion rates by type, and evaluate whether your AI fraud detection software reflects attack methodologies from the past 12 months rather than the past decade.

Frequently Asked Questions

What is Fraud-as-a-Service (FaaS)?

Fraud-as-a-Service (FaaS) is a criminal business model where organized crime groups sell prebuilt fraud tools, stolen identity data, and operational support to other criminals through dark web marketplaces. Vendors offer tiered pricing, customer support, and money-back guarantees, operating with the structure of legitimate SaaS businesses. Buyers receive ready-made attack kits covering everything from synthetic identity packages to account takeover scripts and money mule cash-out networks.

How does AI detect fraud in real time?

AI fraud detection works by analyzing hundreds of behavioral, transactional, and contextual signals simultaneously to identify patterns that deviate from a customer's established baseline. Machine learning models, including supervised classifiers, unsupervised anomaly detectors, and graph neural networks, score transactions in real time by comparing incoming signals against patterns learned from billions of historical events. Unlike static rules, these models retrain continuously, allowing them to adapt when FaaS vendors update their attack kits to evade known detection signatures.

What is fraud alert fatigue?

Fraud alert fatigue occurs when analysts process thousands of alerts per shift with very high false positive rates, typically 90-95% of alerts being non-fraudulent. This volume and monotony reduce an analyst's ability to concentrate on genuine threats, increasing the probability that real fraud events get dismissed as noise. The root cause is usually rule-based transaction monitoring software with thresholds calibrated to broad populations rather than customer-specific behavioral baselines.

How do you reduce false positives in transaction monitoring?

Reducing false positives in transaction monitoring requires five key changes: building behavioral baselines per customer segment rather than universal thresholds, using ML risk scores to prioritize analyst queues, routing analyst dispositions back into model training as feedback, implementing entity resolution to link accounts and devices across the portfolio, and enriching transaction data with device fingerprinting and behavioral biometrics before scoring. These steps together can reduce false positive rates by 40-80% compared to static rule-based approaches.

How do Sardine and Unit21 differ?

Both Sardine and Unit21 are AI-powered fraud and compliance platforms, but they differ primarily in their approach to behavioral signal ingestion and real-time scoring depth. Sardine emphasizes device intelligence and behavioral biometrics layered directly into transaction scoring, making it strong for payment fraud prevention at the transaction level. Unit21 focuses more on configurable rule management and case workflow tooling for AML and compliance operations. For institutions comparing the two, the key decision factor is typically whether behavioral scoring at transaction time or operational case management flexibility matters more for their use case.

How does the cost of rule-based transaction monitoring compare with automated monitoring?

Rule-based transaction monitoring systems have lower upfront implementation costs but significantly higher ongoing operational costs due to analyst headcount requirements, frequent tuning cycles, and higher rates of missed fraud generating loss provisions. Automated transaction monitoring using machine learning costs more to implement but reduces per-alert cost by 40-60% through improved precision. Most mid-market financial institutions reach break-even on automated monitoring within 18-24 months when total cost of ownership includes fraud losses avoided, not just licensing fees.

Why is synthetic identity fraud difficult to detect?

Synthetic identity fraud is difficult to detect with traditional systems because the fraudulent identity is built deliberately to pass KYC checks. By combining a real Social Security number with fabricated personal information and then spending 12-24 months building a legitimate-looking credit history, fraudsters create profiles that meet standard verification criteria. The "bust-out" event, when the fraudster maxes all credit lines and disappears, looks identical to a legitimate customer suddenly experiencing financial distress. Detection requires behavioral AI that tracks long-term activity patterns rather than point-in-time identity verification.
