
AI governance in financial services has moved from a compliance checkbox to a genuine competitive differentiator, and in 2026, institutions that get it wrong face consequences far beyond regulatory fines. Automated credit decisions, real-time fraud scoring, and AI-driven KYC checks touch millions of customers daily. When these systems produce biased, opaque, or incorrect outputs, the damage to trust is immediate and hard to repair. This post breaks down what effective AI governance looks like in practice, why identity verification is central to the conversation, and how banks and fintechs can build automated systems that regulators, auditors, and customers can actually trust.

Why AI Governance Is a Board-Level Priority in Financial Services

Regulators in the EU, UK, and US have spent the past three years closing the gap between what AI systems do and what institutions are required to explain. The EU AI Act classifies some core financial uses of AI, notably creditworthiness assessment and credit scoring of individuals, as high-risk, requiring pre-deployment conformity assessments and ongoing monitoring. US banking regulators (OCC, Fed, FDIC) have all issued risk management frameworks that specifically call out model risk in automated decisioning.

In 2023, a major US lender paid $365 million in settlements after its automated underwriting system discriminated against protected classes. The system was technically compliant at launch but drifted as market conditions changed. That is the real governance gap: not the initial build, but the ongoing operation. For institutions building AI risk programs, the NIST AI Risk Management Framework provides a practically useful starting point that maps well onto financial regulatory requirements.

The Regulatory Pressure Mounting on Automated Systems

No single regulation governs AI in financial services right now. What you have instead is a patchwork: GDPR's limits on solely automated decisions (often described as a right to explanation), ECOA's fair lending requirements, the EU AI Act's risk tiers, and DORA's operational resilience requirements all overlap in ways that require careful mapping. Institutions that treat these as separate compliance programs spend twice the effort and still have gaps.

A practical governance framework maps each AI model to its applicable regulatory requirements, then assigns ownership, monitoring cadence, and escalation paths. This sounds straightforward, but it takes about 18 months to do properly for a mid-sized bank.
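As a rough illustration of that mapping, the sketch below models an inventory entry that ties one model to its regulations, owner, monitoring cadence, and escalation contact, then lists the models whose review is overdue. All names, fields, dates, and contacts here are hypothetical; a real inventory would live in a governed system of record rather than in code.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class ModelRecord:
    # Hypothetical inventory fields; adapt to your own model risk policy.
    name: str
    owner: str
    regulations: tuple[str, ...]
    monitoring_cadence_days: int
    escalation_contact: str
    last_reviewed: date


def overdue_reviews(inventory: list[ModelRecord], today: date) -> list[ModelRecord]:
    """Return models whose last review is older than their monitoring cadence."""
    return [
        m for m in inventory
        if today - m.last_reviewed > timedelta(days=m.monitoring_cadence_days)
    ]


# Illustrative entries only; not real systems or contacts.
inventory = [
    ModelRecord("credit_underwriting", "Head of Retail Credit",
                ("ECOA", "EU AI Act"), 30, "model-risk@bank.example",
                date(2026, 1, 5)),
    ModelRecord("fraud_scoring", "Head of Fraud",
                ("GDPR", "DORA"), 7, "fraud-ops@bank.example",
                date(2026, 2, 20)),
]

overdue = overdue_reviews(inventory, today=date(2026, 2, 24))
print([m.name for m in overdue])
```

Keeping cadence and escalation contact on the same record as the regulatory mapping means a single query can answer both "which rules apply to this model?" and "who is late on reviewing it?".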

What Happens When Automated AI Decisions Go Wrong

When an AI system makes a wrong credit or fraud call, three things happen: the customer is harmed, the regulator wants an explanation, and the legal team starts calculating exposure. Most financial institutions cannot quickly produce a clear audit trail showing why a specific automated decision was made. That gap is what modern AI governance frameworks are designed to close.

How Identity Verification Fintech Is Reshaping Customer Onboarding

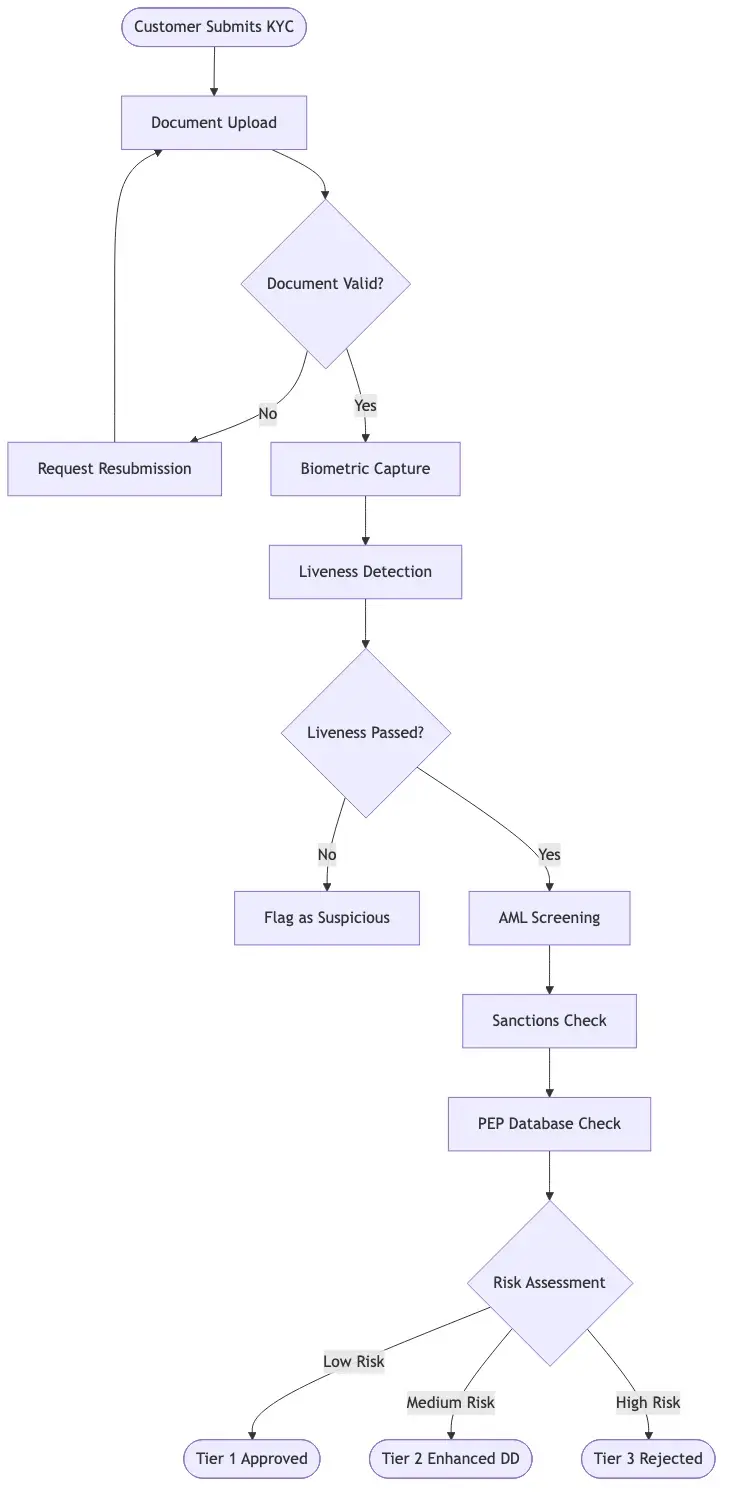

Identity verification is the layer where governance becomes most visible to customers. The onboarding flow is the first place a person interacts with a bank's automated systems, and it is where the tension between speed and compliance is sharpest. Banks that have deployed modern digital identity proofing report onboarding times dropping from days to minutes, with measurable gains in top-of-funnel conversion.

KYC Onboarding Speed vs. the Compliance Trade-Off

KYC onboarding speed is not just a UX metric. It directly affects fraud exposure. Move too fast and you skip checks that catch synthetic identities. Move too slow and legitimate customers abandon the process. The institutions getting this right use risk-based tiering: low-risk applicants get automated approval in under 3 minutes, while higher-risk profiles trigger human review.

This tiered approach requires a well-built identity verification API that passes risk scores and verification statuses downstream to decisioning systems without manual hand-offs. Getting that integration right is harder than most teams expect, particularly when the API must interface with legacy core banking infrastructure not designed for real-time data exchange.
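The risk-based tiering described above can be sketched as a small routing function. This is a minimal sketch under stated assumptions: the `VerificationResult` fields, the 0-to-1 risk score scale, and the `low_risk_max` threshold are all hypothetical and do not come from any specific vendor API.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class VerificationResult:
    # Hypothetical shape of what an identity verification API might return.
    document_authentic: bool
    liveness_passed: bool
    risk_score: float  # assumed scale: 0.0 (lowest risk) to 1.0 (highest)


def route_applicant(result: VerificationResult, low_risk_max: float = 0.3) -> Route:
    """Send low-risk applicants to automated approval, everyone else to review."""
    # Any failed check goes to a human, regardless of the score.
    if not (result.document_authentic and result.liveness_passed):
        return Route.HUMAN_REVIEW
    if result.risk_score <= low_risk_max:
        return Route.AUTO_APPROVE
    return Route.HUMAN_REVIEW


low_risk = route_applicant(VerificationResult(True, True, 0.12))
high_risk = route_applicant(VerificationResult(True, True, 0.74))
failed_liveness = route_applicant(VerificationResult(True, False, 0.05))
print(low_risk, high_risk, failed_liveness)
```

Keeping the threshold as an explicit parameter makes it something the governance committee can review and change, rather than a constant buried in integration code.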

Digital Identity Proofing in Practice

Digital identity proofing establishes that a digital identity matches a real-world person through at least three distinct checks: document authenticity, biographic matching, and liveness detection. Each check has its own failure mode. Document fraud tools miss high-quality counterfeits. Biographic matching produces false rejections for certain demographic groups at higher rates. Liveness detection can be fooled by sophisticated deepfake video.

Effective AI governance means understanding these failure modes and monitoring them continuously. For a detailed breakdown of verification strategy built for identity-first compliance, see our post on KYC/AML and identity verification strategy for CISOs.

Biometric Identity Verification and the Liveness Detection Problem

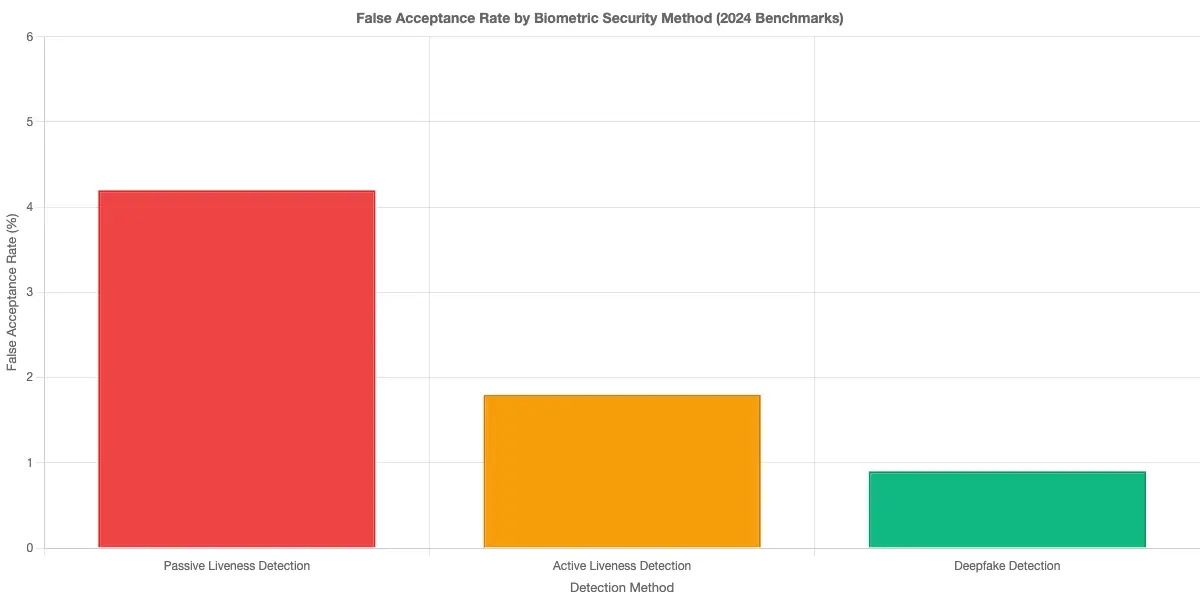

Biometric identity verification has become the default standard for digital onboarding: it is faster than manual document review and harder to fake than knowledge-based authentication. But the technology is only as reliable as the liveness detection layer protecting it.

How Liveness Detection Fraud Prevention Actually Works

Liveness detection verifies that the person presenting biometric data is physically present, not submitting a photo, video replay, or synthetic media. Passive liveness checks analyze micro-expressions, skin texture, and 3D depth cues from a standard camera feed. Active liveness checks ask for a specific action, such as blinking or turning the head, that is harder to spoof.

Passive liveness now achieves false-acceptance rates below 0.1% for most commercial implementations under controlled test conditions. Real-world performance against adversarial attacks using AI-generated video is harder to benchmark and varies considerably by vendor. Any AI governance program should include scheduled adversarial testing of liveness detection, not just reliance on vendor benchmarks.
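One way to make scheduled adversarial testing concrete is to record every spoof attempt from a test run and compute the false-acceptance rate per attack type, rather than a single blended number. The sketch below assumes a hypothetical result format of `(attack_type, accepted)` pairs, and the counts are invented purely for illustration. The 0.1% threshold mirrors the figure quoted above.

```python
from collections import defaultdict


def false_acceptance_by_attack(attempts):
    """attempts: iterable of (attack_type, accepted) pairs for spoof attempts only.

    Returns the share of spoof attempts that were wrongly accepted, per attack type.
    """
    totals = defaultdict(int)
    accepted = defaultdict(int)
    for attack_type, was_accepted in attempts:
        totals[attack_type] += 1
        if was_accepted:
            accepted[attack_type] += 1
    return {attack: accepted[attack] / totals[attack] for attack in totals}


# Invented test results for illustration; not vendor or benchmark data.
results = (
    [("printed_photo", False)] * 200
    + [("video_replay", False)] * 198 + [("video_replay", True)] * 2
    + [("deepfake_video", False)] * 45 + [("deepfake_video", True)] * 5
)

far = false_acceptance_by_attack(results)
# Flag any attack type whose false-acceptance rate exceeds 0.1%.
breaches = {attack: rate for attack, rate in far.items() if rate > 0.001}
print(sorted(breaches))
```

Breaking the rate down by attack type matters because a strong aggregate number can hide a weak spot against one specific technique, such as AI-generated video.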

Deepfake Detection in Banking Environments

Deepfake detection in banking is one of the faster-evolving areas in financial security. As generative AI tools have made convincing face-swap video accessible to non-technical fraudsters, banks can no longer assume that a live video feed is genuine. Academic research on synthetic media shows that human reviewers miss AI-generated video more than 30% of the time under realistic conditions.

Current deepfake detection tools analyze artifacts in video compression, eye reflection patterns, and facial geometry inconsistencies that are imperceptible to humans but detectable algorithmically. These tools work well against off-the-shelf deepfake generators but face a genuine cat-and-mouse challenge as generation tools improve. Scheduled adversarial testing of deepfake detection systems must be part of any financial institution's AI governance calendar.

Synthetic Identity Fraud Detection and Why Traditional Checks Fall Short

Synthetic identity fraud is the fastest-growing category of financial fraud in North America. The Federal Reserve estimates that it costs US financial institutions over $6 billion annually. Unlike traditional identity theft, which uses a real person's stolen credentials, synthetic identity fraud combines real and fabricated data to create a plausible but fictitious identity. For teams working on detection architecture, our analysis of detecting synthetic identity fraud in real-time covers the technical layers in more depth.

How Synthetic Identity Fraud Detection Works

Synthetic identity fraud detection requires moving beyond single-point checks at onboarding. A synthetic identity typically passes document checks because the underlying documents are often real, just belonging to different people or assembled from multiple sources. Where synthetic identities fail is in behavioral patterns over time: credit file velocity, address change frequency, and the absence of a normal credit history arc.

Effective synthetic identity fraud detection combines three layers: onboarding verification (document and biometric checks), network analysis (shared attributes across applications), and behavioral monitoring (accounts building credit in suspicious patterns). Most institutions have the first layer. Far fewer have operationalized the third.
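The network analysis layer can be illustrated with a simple index of attributes shared across applications. This is a minimal sketch, assuming applications arrive as dictionaries with hypothetical keys such as `phone`, `address`, and `device_id`; production systems typically use graph databases and fuzzy matching rather than exact string equality.

```python
from collections import defaultdict


def shared_attribute_clusters(applications,
                              keys=("phone", "address", "device_id"),
                              min_size=2):
    """Group application IDs by attribute values that appear on several applications."""
    index = defaultdict(set)
    for app in applications:
        for key in keys:
            value = app.get(key)
            if value:  # skip missing or empty attributes
                index[(key, value)].add(app["application_id"])
    return {attr: ids for attr, ids in index.items() if len(ids) >= min_size}


# Invented applications for illustration only.
applications = [
    {"application_id": "A1", "phone": "555-0100", "address": "1 Main St", "device_id": "d-1"},
    {"application_id": "A2", "phone": "555-0100", "address": "9 Oak Ave", "device_id": "d-2"},
    {"application_id": "A3", "phone": "555-0199", "address": "4 Elm Rd", "device_id": "d-2"},
    {"application_id": "A4", "phone": "555-0142", "address": "7 Pine Ct", "device_id": "d-4"},
]

clusters = shared_attribute_clusters(applications)
for attribute, ids in sorted(clusters.items()):
    print(attribute, sorted(ids))
```

A shared phone number or device is not proof of fraud on its own. It is a signal that should raise the risk tier or trigger review alongside the onboarding and behavioral layers.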

Where Traditional KYC Checks Fall Short

Traditional KYC checks were designed for a world where identity fraud required physical documents. They are not built to detect identities assembled from data breaches, fabricated Social Security numbers assigned to minors, or AI-generated supporting documents. The check passes, the account opens, and the fraud materializes 18 months later when the synthetic identity maxes out credit lines and disappears.

This is where linking KYC with AML monitoring becomes essential. A platform that handles KYC and AML automation as an integrated workflow gives compliance teams visibility across the full identity lifecycle, not just the onboarding moment. Continuous transaction monitoring alongside onboarding checks is the most practical defense against synthetic fraud at scale.

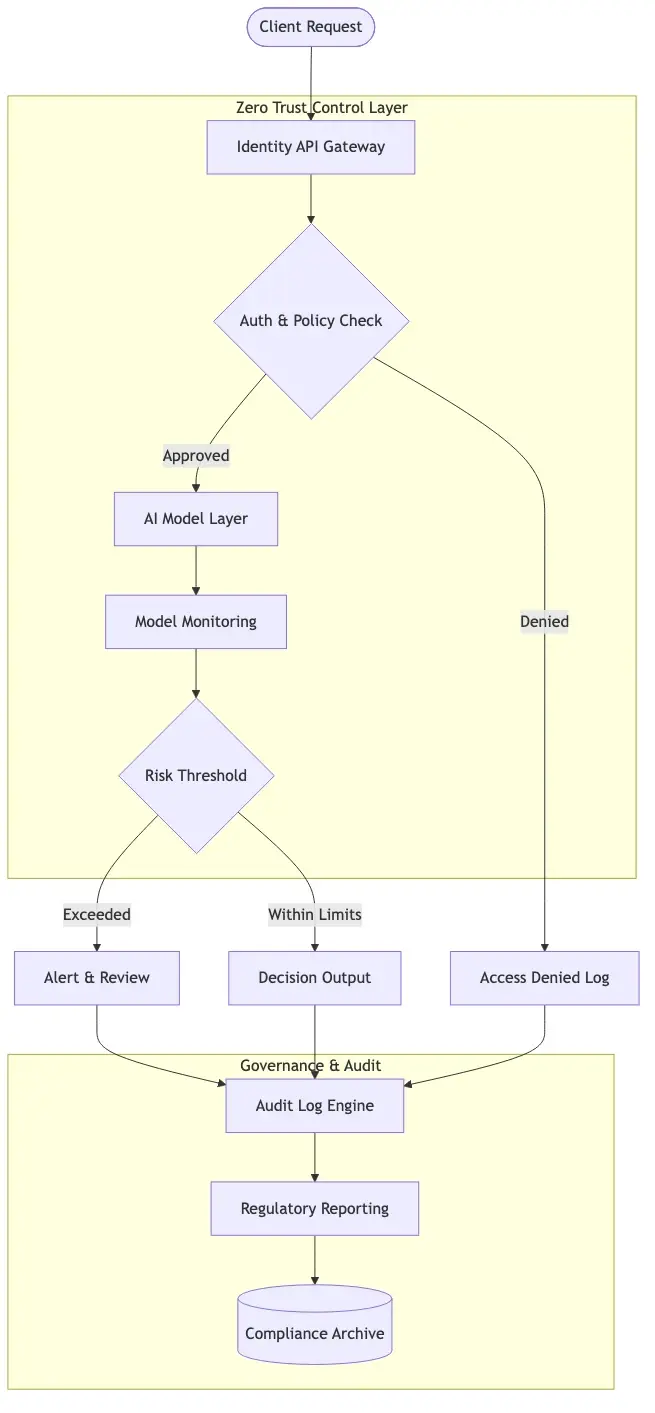

Building a Zero Trust Security Framework for Financial AI

Zero trust is increasingly the architectural assumption that AI governance frameworks in financial services are built on. The core principle is that no user, device, or system should be inherently trusted because it sits inside the network perimeter. Every action requires verification. For financial AI systems, authentication and authorization checks apply to the AI models themselves, not just the humans using them.

Zero Trust Financial Services Architecture in Practice

Implementing a zero trust security framework for financial AI involves three concrete changes: micro-segmentation of data access so models see only the data they need, continuous authentication of API calls rather than one-time session-level access, and behavioral baselines for model outputs that trigger alerts when output distribution shifts unexpectedly.

The third change is the most operationally demanding. It requires instrumenting AI models to emit metrics that a monitoring system can baseline and alert on. Most ML platforms do not do this by default, and retrofitting production systems is non-trivial. But it is the change that catches model drift before regulators do. See our deep-dive on zero trust security architecture for banking operations for implementation specifics.
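A behavioral baseline for model outputs can start very simply: track one output metric per day, such as approval rate, and alert when today's value sits far outside the recent baseline. The sketch below uses a z-score against a baseline window. The metric choice, the sample values, and the threshold of 3 standard deviations are assumptions for illustration, not a recommended standard.

```python
from statistics import mean, stdev


def output_shift(baseline_rates, current_rate, z_threshold=3.0):
    """Return (z_score, alert) for a daily output metric against its baseline.

    baseline_rates needs at least two values so a sample standard
    deviation can be computed.
    """
    mu = mean(baseline_rates)
    sigma = stdev(baseline_rates)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is a shift.
        return (0.0, False) if current_rate == mu else (float("inf"), True)
    z = (current_rate - mu) / sigma
    return z, abs(z) > z_threshold


# Invented daily approval rates for illustration only.
baseline = [0.61, 0.60, 0.62, 0.59, 0.61, 0.60, 0.62]

normal_z, normal_alert = output_shift(baseline, 0.61)
drift_z, drift_alert = output_shift(baseline, 0.52)
print(normal_alert, drift_alert)
```

A single-metric z-score will miss changes in the shape of the output distribution, so treat it as a first alarm rather than a complete drift monitor.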

Identity Verification API as a Governance Control Point

An identity verification API is more than a technical integration point. In a well-governed AI stack, it is a control layer that enforces policy, logs decisions, and provides the audit trail that regulators require. Every verification call should return not just a pass/fail result but a structured record: what documents were checked, what biometric score was returned, what risk tier was assigned, and what downstream action was triggered.

Without that structure, compliance teams reconstruct decisions from fragmented logs after the fact, which is expensive and often incomplete. The identity verification API design is a governance decision, not just an engineering one.
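The structured record described above might look something like the following. This is a sketch only: the field names, the tier labels, and the JSON-lines log format are hypothetical, chosen to mirror the four items listed (documents checked, biometric score, risk tier, downstream action).

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class VerificationRecord:
    # Hypothetical audit fields; not the schema of any particular vendor.
    request_id: str
    checked_at: str  # ISO 8601 timestamp, UTC
    documents_checked: tuple[str, ...]
    biometric_score: float
    risk_tier: str
    downstream_action: str
    result: str


def to_audit_line(record: VerificationRecord) -> str:
    """Serialize one verification decision as a single JSON log line."""
    return json.dumps(asdict(record), sort_keys=True)


record = VerificationRecord(
    request_id="req-0001",
    checked_at="2026-02-24T10:15:00+00:00",
    documents_checked=("passport",),
    biometric_score=0.97,
    risk_tier="low",
    downstream_action="auto_approve",
    result="pass",
)

line = to_audit_line(record)
print(line)
```

Writing one self-contained line per decision means an auditor can reconstruct any single verification without joining fragmented logs after the fact.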

How AI Governance Frameworks Address Explainability and Bias

Explainability is the part of AI governance that most technology teams underestimate until a regulator asks for it. For credit and fraud decisions, 'the model said so' is not an acceptable explanation. Institutions need to articulate, in plain language, what factors influenced a specific decision and why.

Regulatory Explainability Requirements for Financial AI

Under ECOA and the Fair Credit Reporting Act in the US, adverse action notices must provide specific reasons for credit denials. Under GDPR Article 22, customers have the right not to be subject to solely automated decisions with significant effects. The EU AI Act requires high-risk AI systems to maintain logs sufficient to trace inputs and outputs of each use. The EU AI Act's full framework is outlined at the European Commission's regulatory AI framework page for teams mapping their obligations.

These requirements together mean financial institutions need decision-level explainability for AI outputs, not just aggregate model performance metrics. This is achievable with SHAP values or LIME analysis, but it requires upfront design investment and ongoing infrastructure that most teams do not budget for until a regulator asks.
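To show what decision-level explainability means in the simplest case, the sketch below computes per-feature contributions for a linear scoring model as weight times the applicant's deviation from a baseline value. For a linear model with independent features, this matches the SHAP value of each feature. It does not use the `shap` or `lime` libraries, and the weights, baseline values, and feature names are invented for illustration.

```python
def linear_contributions(weights, baseline, applicant):
    """Contribution of each feature to the score, relative to a baseline applicant."""
    return {f: weights[f] * (applicant[f] - baseline[f]) for f in weights}


def adverse_action_reasons(contributions, top_n=2):
    """Return the features that pushed the score down the most."""
    negatives = sorted((c, f) for f, c in contributions.items() if c < 0)
    return [feature for _, feature in negatives[:top_n]]


# Hypothetical model weights and baseline (for example, training-set averages).
weights = {"credit_utilization": -2.0, "months_since_delinquency": 0.03, "income_to_debt": 0.8}
baseline = {"credit_utilization": 0.30, "months_since_delinquency": 48, "income_to_debt": 2.5}
applicant = {"credit_utilization": 0.85, "months_since_delinquency": 6, "income_to_debt": 2.0}

contributions = linear_contributions(weights, baseline, applicant)
reasons = adverse_action_reasons(contributions)
print(reasons)
```

Non-linear models need a proper attribution method, but the output shape is the same: a ranked list of factors per decision that can be translated into plain-language reasons for an adverse action notice.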

Auditing Automated Decisions and Catching Bias Early

Bias in financial AI models is usually unintentional and often invisible until you look for it. A credit model trained on historical loan performance data will reproduce the biases in that data unless specific debiasing steps are taken during training and validated at deployment. Standard accuracy metrics do not reveal this: a model can be 95% accurate overall while systematically underserving a specific demographic group at a rate that would fail a fair lending exam.

Effective AI governance includes disaggregated performance reporting, breaking down model accuracy and error rates by demographic segment quarterly, not just at the annual model review. For compliance teams managing automated reporting alongside these monitoring programs, our post on banking compliance reporting and automation covers the data infrastructure side in practical detail.
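Disaggregated reporting can be sketched as a per-segment breakdown of accuracy and false-negative rate, where a false negative is a creditworthy applicant the model declined. The record format, segment labels, and counts below are hypothetical and exist only to show how overall accuracy can mask a gap between groups.

```python
from collections import defaultdict


def report_by_segment(records):
    """records: iterable of (segment, predicted_good, actually_good) tuples."""
    stats = defaultdict(lambda: {"n": 0, "correct": 0, "good": 0, "good_declined": 0})
    for segment, predicted_good, actually_good in records:
        s = stats[segment]
        s["n"] += 1
        s["correct"] += predicted_good == actually_good
        if actually_good:
            s["good"] += 1
            s["good_declined"] += not predicted_good
    return {
        segment: {
            "accuracy": s["correct"] / s["n"],
            # Share of creditworthy applicants the model wrongly declined.
            "false_negative_rate": s["good_declined"] / s["good"] if s["good"] else 0.0,
        }
        for segment, s in stats.items()
    }


# Invented outcomes for illustration only.
records = (
    [("segment_a", True, True)] * 90
    + [("segment_a", False, False)] * 5
    + [("segment_a", False, True)] * 5
    + [("segment_b", True, True)] * 70
    + [("segment_b", False, False)] * 10
    + [("segment_b", False, True)] * 20
)

report = report_by_segment(records)
for segment, metrics in sorted(report.items()):
    print(segment, metrics)
```

In this invented data, creditworthy applicants in the second segment are declined roughly four times as often as those in the first, a gap a single headline accuracy figure would not show.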

Practical Steps to Implement AI Governance in Financial Services

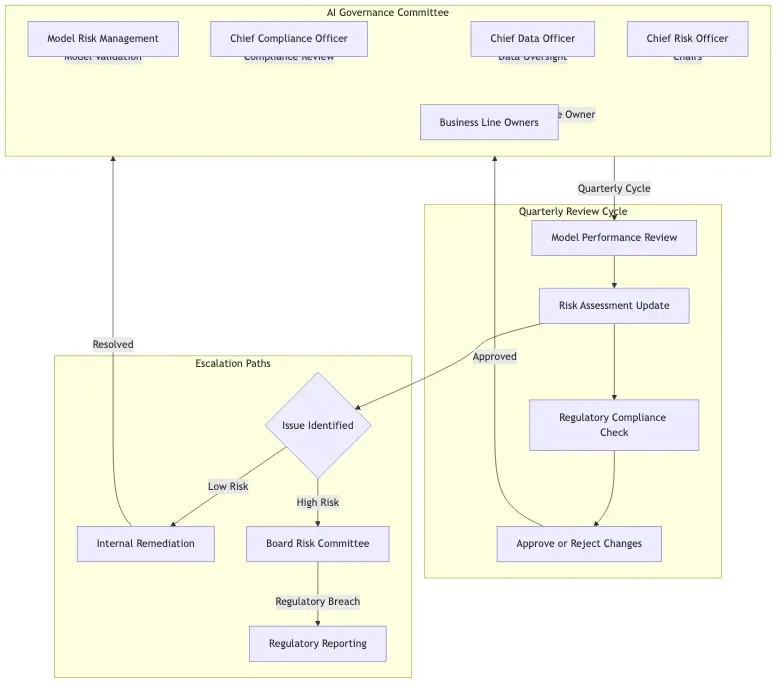

Getting AI governance right is as much an organizational problem as a technical one. The technology to monitor, explain, and audit AI models exists. What most institutions lack is clear ownership, defined processes, and the budget to sustain them.

Building the Right Governance Committee Structure

A functional AI governance committee in a financial institution typically includes: the Chief Risk Officer or delegate, the Chief Data Officer, a senior compliance representative, the Head of Model Risk Management, and at minimum one business line owner for each high-risk AI application. The committee needs decision-making authority, not just advisory standing, and should meet at least quarterly with documented minutes.

Core responsibilities are approving new AI deployments, reviewing monitoring reports, escalating regulatory inquiries, and overseeing the model risk inventory. Without a standing inventory of all AI models in production, you cannot govern them. Most organizations discover significant gaps in their inventory the first time they build one seriously.

Continuous Monitoring and Model Drift Management

Model drift is the quiet challenge in AI governance. A credit model that was fair and accurate at launch can gradually become less accurate as economic conditions shift or applicant composition evolves. Without continuous monitoring, this drift is invisible until something goes wrong.

Practical monitoring tracks three things: prediction distribution shift (is the model scoring applicants differently than before?), feature drift (are input variables behaving differently than at training?), and outcome monitoring (are predictions still aligning with observed results?). The institutions doing this well treat model monitoring as a production engineering problem, with dedicated dashboards, automated alerts, and defined runbooks. The ones struggling still treat it as a periodic audit exercise.
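Prediction distribution shift is often measured with the Population Stability Index (PSI), which compares the binned score distribution at training time with the live one. The sketch below is a minimal stdlib version that assumes scores lie in the range 0 to 1. The bin count, the epsilon floor, and the commonly quoted rule of thumb that a PSI above 0.25 signals a significant shift are conventions, not regulatory requirements.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between two samples of model scores, each assumed to lie in [0, 1]."""

    def proportions(scores):
        counts = [0] * bins
        for s in scores:
            # A score of exactly 1.0 falls into the last bin.
            counts[min(int(s * bins), bins - 1)] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(scores), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Invented score samples for illustration only.
training_scores = [i / 100 for i in range(100)]                    # spread across 0 to 1
live_scores = [min(0.99, 0.5 + i / 200) for i in range(100)]       # bunched in the top half

stable_psi = population_stability_index(training_scores, training_scores)
shifted_psi = population_stability_index(training_scores, live_scores)
print(stable_psi, shifted_psi > 0.25)
```

The same function can be applied to individual input features to track feature drift, which covers the second of the three monitoring checks with no extra tooling.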


Conclusion

AI governance in financial services is not a solved problem. The regulatory requirements, the threat landscape around synthetic identity fraud, and the complexity of managing a growing AI portfolio all keep evolving. But institutions that treat governance as an operational discipline are building real advantages: faster time-to-market for new AI features, lower fraud losses from continuously monitored detection systems, and better regulatory relationships because they can actually explain their decisions.

Start with inventory: map every AI system making consequential decisions. If you are further along, the next frontier is real-time explainability and automated bias monitoring. The investment pays back faster than most teams expect, and the cost of not investing is increasingly hard to defend to a board watching the regulatory environment tighten.

Frequently Asked Questions

Identity verification fintech refers to technology platforms and APIs that financial institutions use to confirm a person's claimed identity is genuine during digital onboarding or transactions. These systems combine document scanning, biometric matching, liveness detection, and database checks to validate identities without requiring in-person interaction. Modern identity verification fintech solutions can complete a full KYC check in under 90 seconds for low-risk applicants.

KYC onboarding speed measures how quickly a financial institution can complete the Know Your Customer verification process for a new customer, from document submission to account approval. Institutions using automated identity verification typically complete KYC onboarding in 2 to 5 minutes for low-risk applicants, compared to 2 to 5 business days for manual review processes. Faster onboarding reduces customer drop-off during signup and directly improves conversion rates.

Biometric identity verification is the process of confirming a person's identity by comparing their physical characteristics, such as a facial scan, against a reference document or database record. In digital banking, this typically means capturing a selfie or short video during onboarding and comparing it to the photo on a government-issued ID using facial recognition algorithms. Biometric verification is more reliable than knowledge-based checks because physical characteristics are significantly harder to steal or replicate than passwords.

Liveness detection fraud refers to attempts by bad actors to bypass biometric identity checks by submitting photos, pre-recorded videos, 3D masks, or AI-generated deepfake media instead of a genuine live face. Anti-spoofing technology, also called liveness detection, is built into biometric systems to distinguish between a real person and a spoofing attempt. Passive liveness detection analyzes subtle visual cues without requiring user action, while active liveness detection asks users to perform specific movements to confirm physical presence.

Digital identity proofing is the end-to-end process of verifying that someone claiming an online identity is who they say they are, using digital methods rather than in-person verification. A complete digital identity proofing workflow includes document authenticity verification, biographic data matching, biometric face comparison, and liveness confirmation. Regulatory frameworks like NIST SP 800-63 define three Identity Assurance Levels that specify what level of proofing is required for different categories of access.

An identity verification API is a programmable interface that allows financial applications to trigger and receive results of automated identity checks without building the underlying verification technology themselves. These APIs accept document images and biometric data, run them through verification engines, and return structured results including match scores, risk ratings, and compliance flags. A well-designed identity verification API also maintains detailed logs of each call, creating the audit trail regulators require for AI governance.

Synthetic identity fraud is a form of financial fraud where bad actors create fictitious identities by combining real information, such as a genuine Social Security number, with fabricated data like a false name and address. These synthetic identities are often aged over months or years to build a credit history before being used to obtain loans or credit lines that are then defaulted. Unlike traditional identity theft, the person whose partial data was used may not realize they are affected because the synthetic identity does not directly impersonate them.
