AI agents for financial crime investigation are no longer a concept being piloted in innovation labs. As of 2026, they are operational infrastructure at hundreds of financial institutions, quietly replacing analyst queues that once took weeks to clear.

The numbers explain the urgency. Financial crime losses globally exceeded $3.1 trillion in 2025, according to estimates compiled by the Financial Action Task Force, with synthetic fraud, account takeover, and money laundering schemes growing faster than compliance teams can scale. Traditional investigation workflows, built on rule-based alerts and manual case review, weren't designed for this volume.

This post breaks down exactly how AI agents are changing that, covering biometric identity verification, liveness detection, deepfake attacks in banking, and why synthetic identity fraud detection is where these systems prove their worth most clearly.

The Scale of Financial Crime AI Agents Must Address in 2026

Financial crime has shifted in ways that matter. The attacks that compliance officers worry about in 2026 aren't the ones that showed up in 2019 training materials. Fraud rings now use industrialized tooling: generated synthetic identities, AI-cloned voices, and deepfake video that passes first-line human review. The common thread is speed. Fraudsters move faster than manual workflows can respond.

Why Traditional Investigation Methods Fall Short

Rule-based systems flag transactions that match predefined patterns. The problem is that sophisticated fraud rarely matches patterns from three years ago. When a synthetic identity fraud ring opens 4,000 accounts over 18 months using slightly varied biographical data, no static rule catches it. Each individual account looks clean.

Manual investigation has a different bottleneck. A typical AML analyst reviews 30 to 50 cases per day. When a mid-size bank generates 2,000 alerts weekly, that is roughly 400 alerts per working day, or 8 to 14 analysts doing nothing but clearing new alerts. Most teams aren't staffed for that, so cases backlog, and by the time an investigator reaches a suspicious account, the money has moved.

The Cost of Slow Detection

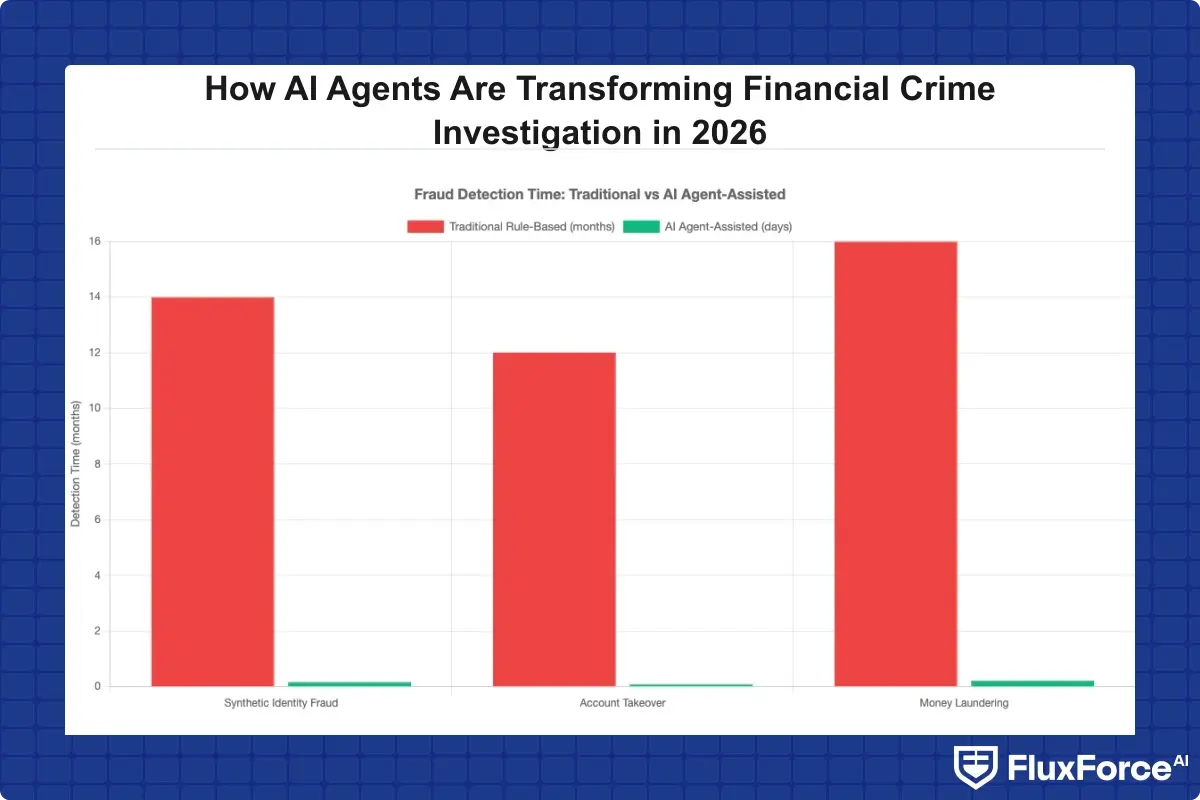

The delay between fraud occurrence and detection averages 14 months for synthetic identity fraud, according to data cited by FinCEN. In that window, a single synthetic identity can generate $50,000 to $200,000 in losses before triggering a manual review.

Speed matters. AI agent systems for financial crime investigation close that detection gap from months to hours by continuously scanning transaction behavior, cross-referencing external data feeds, and escalating only the cases that genuinely need human judgment.

What Are AI Agents in Financial Crime Investigation?

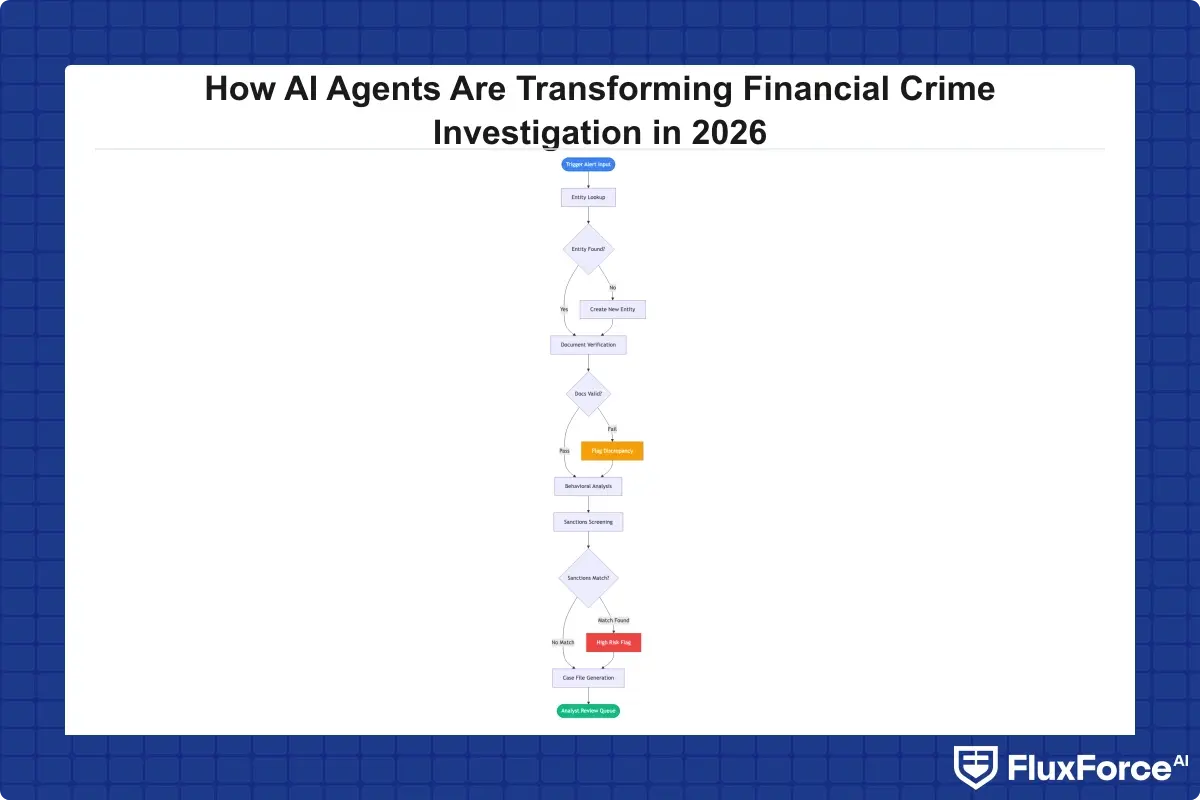

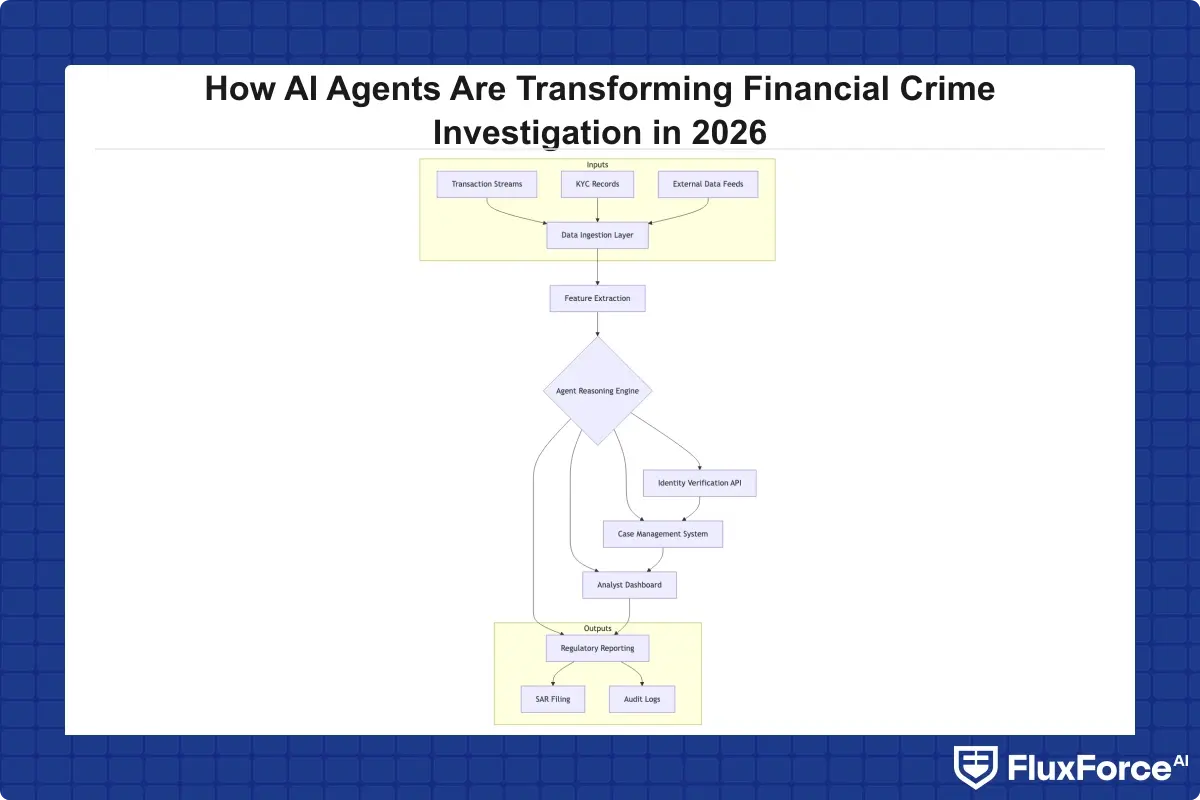

AI agents in financial crime investigation are autonomous software systems that can reason, plan multi-step actions, and execute investigation workflows without continuous human direction. They differ from traditional machine learning models in one critical way: they don't just score a transaction and stop. They gather additional evidence, query external APIs, run follow-up checks, and produce a structured case file ready for analyst review.

How AI Agents Differ from Rule-Based Systems

A rule-based system asks: "Does this transaction match a known bad pattern?" An AI agent asks: "What do I know about this entity, what's missing from the record, and what should I check next to reach a confident conclusion?"

That difference matters enormously when you're dealing with fintech identity verification workflows at scale. Rule-based systems create false positive rates that can reach 95% in high-volume environments, meaning analysts spend the majority of their time clearing cases that aren't actually suspicious. AI agents that reason over the full entity context reduce that rate significantly, as documented in cases where institutions moved from rule-based alerts to agentic investigation pipelines.

Core Capabilities That Make AI Agents Effective

Three capabilities separate effective AI agents from glorified alert filters:

- Memory across sessions: An AI agent can recall that a customer's onboarding document was flagged for review six months ago, even if that flag never escalated.

- Tool use: Agents call identity verification APIs, sanctions screening databases, and behavioral analytics platforms as part of a single investigation workflow.

- Explainability: Unlike black-box models, well-designed agents produce a reasoning trail that compliance officers can read and regulators can audit.

The third point is often underestimated. Regulators don't just want accurate decisions. They want documented, defensible ones.
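The three capabilities above can be sketched in a few lines. This is a minimal illustration, not a production agent: the tool names, the `{"hit": bool}` result shape, and the in-memory `memory` dict are all assumptions made for the example, and a real agent would plan which tool to call next rather than run a fixed list.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tool shape: takes an entity id, returns a dict with a boolean "hit".
Tool = Callable[[str], dict]

@dataclass
class CaseFile:
    entity_id: str
    findings: dict = field(default_factory=dict)
    trail: list = field(default_factory=list)  # readable reasoning steps for audit
    escalate: bool = False

def investigate(entity_id: str, tools: dict, memory: dict) -> CaseFile:
    case = CaseFile(entity_id)

    # Memory across sessions: recall flags recorded by earlier investigations.
    prior_flags = list(memory.get(entity_id, []))
    if prior_flags:
        case.trail.append(f"recalled prior flags: {prior_flags}")

    # Tool use: call each check and record what came back.
    for name, tool in tools.items():
        result = tool(entity_id)
        case.findings[name] = result
        case.trail.append(f"{name} returned {result}")

    # Explainability: the decision and its reasons go into the trail.
    hits = [name for name, result in case.findings.items() if result.get("hit")]
    case.escalate = bool(hits or prior_flags)
    verdict = "escalate to analyst" if case.escalate else "clear"
    case.trail.append(f"decision: {verdict} (hits={hits}, prior={prior_flags})")

    # Persist new hits so a later session can recall them.
    if hits:
        memory.setdefault(entity_id, []).extend(hits)
    return case

# Stub tools standing in for real sanctions and device-reputation services.
tools = {
    "sanctions": lambda entity: {"hit": False},
    "device_reputation": lambda entity: {"hit": entity == "cust-42"},
}
memory = {"cust-7": ["onboarding_document_review"]}
case = investigate("cust-42", tools, memory)
print("\n".join(case.trail))
```

The `trail` list is the part a compliance officer would read: every tool result and the final decision are recorded in order.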

Biometric Identity Verification and AI-Powered KYC

Biometric identity verification has moved from premium feature to table stakes in fintech identity verification. In 2026, any serious KYC stack includes facial recognition, document liveness checks, and behavioral biometrics as layered controls.

The shift was driven by one clear problem: knowledge-based authentication is broken. Security questions, SMS OTPs, and static passwords all have documented bypass methods. Biometrics add a dimension that's significantly harder to spoof, at least when implemented correctly.

Liveness Detection Fraud Prevention

Liveness detection is where the arms race gets technical. It is the process of confirming that a biometric sample, typically a selfie or short video, comes from a live person rather than a photograph, mask, or pre-recorded clip. When it works, it closes one of the most common KYC bypass routes.

The challenge is that liveness detection has known attack surfaces. Deepfake video quality improved substantially through 2024 and 2025, and some first-generation liveness systems couldn't distinguish between a real face and a high-quality deepfake. Modern systems use 3D depth mapping, infrared analysis, and randomized challenge-response sequences to make attacks on liveness detection significantly harder. Still, no system is perfect, which is why layering with behavioral signals and digital identity proofing matters.
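The randomized challenge-response idea is simple to illustrate: if the prompts are unpredictable, a pre-recorded clip cannot contain the right sequence. The sketch below is an assumption-laden toy. The prompt names and the ten-second time limit are invented for the example, and it takes for granted that some upstream vision model has already turned the video into a list of observed actions.

```python
import secrets

# Hypothetical prompt names; a real system would define its own set.
CHALLENGES = ["turn_left", "turn_right", "blink", "smile", "nod"]

def issue_challenge(length: int = 3) -> list:
    """Pick an unpredictable sequence using a cryptographic random source."""
    return [secrets.choice(CHALLENGES) for _ in range(length)]

def verify_response(issued: list, observed: list, elapsed_s: float,
                    max_elapsed_s: float = 10.0) -> bool:
    """Pass only if the observed actions match in order and arrive in time."""
    return observed == issued and elapsed_s <= max_elapsed_s

sequence = issue_challenge()
print(sequence)
print(verify_response(sequence, list(sequence), elapsed_s=4.2))
```

Using `secrets` rather than `random` keeps the sequence from being guessable from earlier sessions, and the time limit is there to make real-time synthesis harder.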

For a deeper look at how identity verification fits into insurance and lending workflows, "KYC/AML & Identity Verification Strategy for Claims Directors" covers practical implementation patterns for regulated industries.

Digital Identity Proofing at Scale

Digital identity proofing is the process of verifying that someone claiming an identity actually owns it, using government-issued documents, biometrics, and authoritative data sources. NIST Special Publication 800-63 classifies identity proofing into three Identity Assurance Levels (IAL1 through IAL3), with financial services typically requiring IAL2 or IAL3 depending on product risk.

At scale, digital identity proofing creates a throughput problem. Manual document review takes 3 to 7 minutes per application. At 10,000 applications per day, that's between 500 and roughly 1,170 hours of human review time daily. AI-assisted proofing systems handle document classification, fraud signal extraction, and data matching automatically, flagging only exceptions for human review. The result is KYC onboarding speed measured in seconds rather than business days.
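The "flag only exceptions" step can be pictured as a field-by-field comparison between what the applicant typed and what was extracted from the document. The following is a rough sketch under assumptions: the field names (`name`, `dob`, `address`) and the two routing labels are made up for illustration, and real matching would be fuzzier than exact comparison after normalization.

```python
import unicodedata

def normalize(value: str) -> str:
    """Lowercase, strip accents, and collapse whitespace before comparing."""
    text = unicodedata.normalize("NFKD", value)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    return " ".join(text.lower().split())

def mismatched_fields(application: dict, document: dict,
                      fields=("name", "dob", "address")) -> list:
    """Return the fields that disagree; an empty list means no exception."""
    return [
        f for f in fields
        if normalize(application.get(f, "")) != normalize(document.get(f, ""))
    ]

def route(application: dict, document: dict) -> str:
    """Send only disagreeing applications to a human reviewer."""
    return "human_review" if mismatched_fields(application, document) else "auto_pass"

typed = {"name": "José  Álvarez", "dob": "1990-01-02", "address": "1 Main St"}
extracted = {"name": "jose alvarez", "dob": "1990-01-02", "address": "1 main st"}
print(route(typed, extracted))
```

Normalizing before comparing matters here: without it, harmless differences in case, accents, or spacing would push legitimate applicants into the manual queue.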

Synthetic Identity Fraud Detection: Where AI Agents Excel

Synthetic identity fraud is arguably the hardest fraud category to catch with traditional methods. A synthetic identity is constructed from real and fabricated information, often using a real Social Security Number combined with a fake name and address. The identity passes basic KYC checks because parts of it are genuine.

Detecting synthetic identity fraud in real time requires a fundamentally different approach from verifying a real person's documents. AI agents are well-suited here because synthetic identity fraud detection depends on pattern recognition across entity networks, not just individual record checks.

How Synthetic Identity Fraud Works in Practice

A typical synthetic identity fraud operation follows a predictable lifecycle:

- Create a synthetic identity using a valid SSN, often belonging to a child or someone with a thin credit file

- Build credit history slowly over 12 to 18 months with secured cards and small loans

- Apply for maximum credit across multiple lenders simultaneously, the so-called bust-out stage

- Default on all accounts and disappear

Each step individually looks legitimate. The fraud only becomes visible when you see the network: the same device fingerprint used across 40 applications, addresses appearing across hundreds of accounts, or credit behavior matching known bust-out velocity signatures.

AI Agents Spotting What Humans Miss

AI agents running synthetic identity fraud detection cross-reference device signals, behavioral biometrics, application velocity, and entity linkage graphs simultaneously. A human analyst reviewing a single application file can't see that the applicant's phone number appeared on 23 other applications in the past 60 days. An AI agent can, and it flags the entire network rather than just the single account in front of it.
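Network-level flagging of this kind can be sketched as a connected-components problem: applications that share any identifier end up in the same cluster. The example below uses a small union-find structure. The record layout (`id`, `device_id`, `phone`) is an assumption for illustration, not a schema from any particular platform.

```python
from collections import defaultdict

def link_applications(applications: list, keys=("device_id", "phone")) -> list:
    """Group applications that share any identifier value."""
    parent = {app["id"]: app["id"] for app in applications}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a, b):
        parent[find(a)] = find(b)

    first_seen = {}  # (key, value) -> first application id carrying it
    for app in applications:
        for key in keys:
            value = app.get(key)
            if value is None:
                continue
            if (key, value) in first_seen:
                union(app["id"], first_seen[(key, value)])
            else:
                first_seen[(key, value)] = app["id"]

    clusters = defaultdict(set)
    for app_id in parent:
        clusters[find(app_id)].add(app_id)
    # Only clusters with more than one application are interesting.
    return [members for members in clusters.values() if len(members) > 1]

apps = [
    {"id": "A1", "device_id": "d1", "phone": "p1"},
    {"id": "A2", "device_id": "d1", "phone": "p2"},
    {"id": "A3", "device_id": "d3", "phone": "p2"},
    {"id": "A4", "device_id": "d4", "phone": "p4"},
]
print(link_applications(apps))
```

Note that A1 and A3 share nothing directly. They land in the same cluster only because A2 bridges them, which is exactly the kind of link a reviewer looking at one file at a time would miss.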

This network-level thinking is where AI agents in financial crime investigation create the most concrete ROI. Banks using agentic fraud investigation have reported reducing synthetic identity charge-offs by 40 to 60% within 18 months of deployment.

Deepfake Detection in Banking: A Growing Priority

Deepfake detection in banking is no longer a theoretical concern. Through 2024 and 2025, several high-profile incidents involved fraudsters using AI-generated video to impersonate executives and customers in identity verification flows. One widely reported case, disclosed in Hong Kong in early 2024, involved a deepfake video call used to authorize roughly $25 million in wire transfers, an event that prompted multiple regulators to issue guidance on video-based identity verification.

The technical response has been multi-layered. Modern deepfake detection systems in banking use:

- Spectral analysis: Examining frequency-domain artifacts and pixel-level inconsistencies that appear in AI-generated video

- Physiological signals: Remote photoplethysmography (rPPG) picks up the subtle skin-color changes caused by blood flow, which deepfakes often fail to replicate accurately

- Behavioral consistency checks: Comparing speech patterns and facial micro-expressions against enrollment data

No single technique is sufficient. The most effective implementations combine passive deepfake detection with active liveness challenges and out-of-band verification.
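One way to picture that layering is as a short decision function where each control can only tighten the outcome. This is a hedged sketch: the score scale, the 0.5 threshold, the outcome labels, and the rule that only high-value requests need out-of-band confirmation are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class VideoCheck:
    passive_deepfake_score: float          # assumed scale: 0.0 genuine, 1.0 synthetic
    liveness_passed: bool                  # result of an active challenge
    out_of_band_confirmed: Optional[bool]  # None means not attempted yet

def decide(check: VideoCheck, high_value: bool,
           passive_threshold: float = 0.5) -> str:
    # A failed active challenge ends the flow regardless of other signals.
    if not check.liveness_passed:
        return "reject"
    # A suspicious passive score goes to a human rather than auto-failing.
    if check.passive_deepfake_score >= passive_threshold:
        return "manual_review"
    # High-value requests also need confirmation over a separate channel.
    if high_value:
        if check.out_of_band_confirmed is None:
            return "require_out_of_band"
        if not check.out_of_band_confirmed:
            return "reject"
    return "approve"

call = VideoCheck(passive_deepfake_score=0.1, liveness_passed=True,
                  out_of_band_confirmed=None)
print(decide(call, high_value=True))
```

The point of the structure is that a convincing video alone never approves a high-value request: the out-of-band step sits outside whatever channel the attacker controls.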

Zero Trust Security Framework for Financial Services

Zero trust in financial services means treating every authentication attempt as potentially compromised, regardless of whether it originates from inside or outside the network perimeter. The zero trust security framework applies to identity verification as much as it applies to network access control. Every session, every document submission, and every biometric check is verified independently rather than inherited from a prior trusted state.

For financial institutions implementing zero trust security architecture in banking, the operational shift is significant. It means moving away from a "verify once at onboarding" model toward continuous verification throughout the customer relationship. AI agents make this practical by running continuous risk scoring in the background without adding friction to the user experience.

Identity Verification API Integration

An identity verification API provides the technical interface through which financial platforms connect to document verification, biometric matching, sanctions screening, and watchlist databases. Modern identity verification API architectures are modular: a bank can plug in liveness detection from one provider, document OCR from another, and sanctions screening from a third, all orchestrated through a single workflow.

The practical advantage of API-based architecture is speed and flexibility. When a new fraud vector emerges, the affected module can be swapped without rebuilding the entire verification stack. For institutions building this capability, pairing identity verification API access with a well-designed digital identity proofing strategy makes the difference between a system that scales and one that creates new bottlenecks.
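The swap-a-module idea maps naturally onto a shared interface that every provider adapter implements. The sketch below is illustrative only: the `Check` protocol, the stub classes, and the applicant fields are hypothetical, and real adapters would wrap vendor HTTP calls rather than read flags from a dict.

```python
from typing import Protocol

class Check(Protocol):
    """Interface each provider adapter is assumed to implement."""
    name: str
    def run(self, applicant: dict) -> bool: ...

class SelfieLiveness:
    name = "liveness"
    def run(self, applicant: dict) -> bool:
        return bool(applicant.get("selfie_live"))

class StrictLiveness:
    """Hypothetical replacement that also requires a passed challenge."""
    name = "liveness"
    def run(self, applicant: dict) -> bool:
        return bool(applicant.get("selfie_live")) and bool(applicant.get("challenge_passed"))

class SanctionsScreen:
    name = "sanctions"
    def __init__(self, watchlist):
        self._watchlist = set(watchlist)
    def run(self, applicant: dict) -> bool:
        return applicant["name"] not in self._watchlist

class VerificationPipeline:
    def __init__(self, checks):
        self._checks = {check.name: check for check in checks}

    def swap(self, check: Check) -> None:
        """Replace the module registered under the same name."""
        self._checks[check.name] = check

    def verify(self, applicant: dict) -> dict:
        return {name: check.run(applicant) for name, check in self._checks.items()}

pipeline = VerificationPipeline([SelfieLiveness(), SanctionsScreen({"Blocked Person"})])
applicant = {"name": "Ada Example", "selfie_live": True}
print(pipeline.verify(applicant))
pipeline.swap(StrictLiveness())  # respond to a new attack without touching sanctions
print(pipeline.verify(applicant))
```

Because the pipeline only depends on the interface, replacing the liveness module leaves the sanctions module and the calling code unchanged.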

How AI Agents Improve KYC Onboarding Speed

KYC onboarding speed is a competitive variable in 2026. Customers compare onboarding experiences across financial products, and a 3-day wait for account approval is a conversion killer when competitors offer same-session approval.

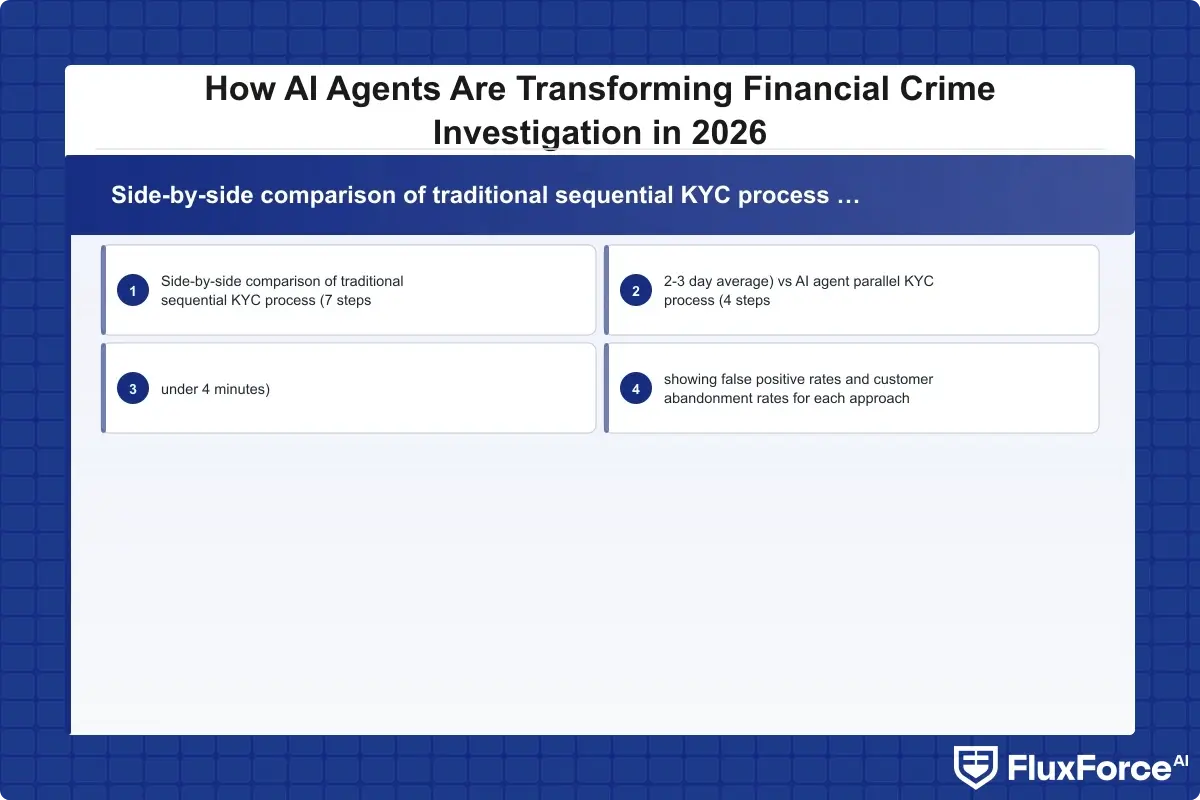

AI agents improve KYC onboarding speed by running verification steps in parallel rather than sequentially. Traditional KYC workflows look like a relay race: document check, then biometric check, then sanctions screening, then manual review. Agentic systems run all checks simultaneously and only escalate cases where checks conflict or confidence thresholds aren't met.
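The difference between the relay race and the parallel approach is easy to show with `asyncio.gather`, which starts all checks at once and waits for the slowest one. In this sketch the three check functions are stubs that read scores from the applicant record, the 0.9 threshold is an arbitrary assumption, and only the threshold rule is modeled, not conflict detection between checks.

```python
import asyncio

# Stub checks: in a real system each would call an external provider.
async def document_check(applicant: dict) -> float:
    await asyncio.sleep(0.01)  # stands in for network latency
    return applicant["doc_score"]

async def biometric_check(applicant: dict) -> float:
    await asyncio.sleep(0.01)
    return applicant["face_score"]

async def sanctions_check(applicant: dict) -> float:
    await asyncio.sleep(0.01)
    return 0.0 if applicant["on_watchlist"] else 1.0

async def run_kyc(applicant: dict, threshold: float = 0.9) -> dict:
    names = ("document", "biometric", "sanctions")
    # All three coroutines run concurrently; results come back in call order.
    scores = await asyncio.gather(
        document_check(applicant),
        biometric_check(applicant),
        sanctions_check(applicant),
    )
    results = dict(zip(names, scores))
    below = [name for name, score in results.items() if score < threshold]
    return {
        "decision": "escalate" if below else "approve",
        "below_threshold": below,
        "scores": results,
    }

clean = {"doc_score": 0.97, "face_score": 0.95, "on_watchlist": False}
print(asyncio.run(run_kyc(clean)))
```

With sequential calls the total wait is the sum of the three latencies; with `gather` it is roughly the longest single one.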

Reducing False Positives Without Slowing Compliance

The tension between fraud prevention and customer experience often comes down to false positives. A false positive is a legitimate customer incorrectly flagged as suspicious. High false positive rates mean legitimate customers get stuck in manual review queues, increasing onboarding time and abandonment rates significantly.

"How Agentic AI Fraud Agents Cut False Positives by 80%" documents this dynamic in detail. The key insight is that AI agents can incorporate context that binary rule systems ignore: prior behavior, device reputation, behavioral biometrics during the application process, and network relationships to other known-good accounts. An applicant who has been a customer for three years at a partner institution isn't the same risk as a first-time applicant with no credit footprint, even if both trigger the same rule-based alert.

Banks that have deployed KYC/AML automation through agentic systems report onboarding times dropping from 2 to 3 business days to under 4 minutes for straightforward cases, while escalation rates to manual review fall by 70%.

Zero Trust Financial Services: Continuous Verification

Zero trust in financial services moves identity verification from a one-time gate at account opening to an ongoing process throughout the customer relationship. The practical implementation involves continuous signals: transaction patterns, device fingerprints, location consistency, and behavioral biometrics captured during app sessions.

When something changes, the system responds proportionally. A customer logging in from their usual device and location gets frictionless access. The same customer logging in from a new device in an unfamiliar country gets a step-up verification challenge. The response is automated, consistent, and documented, which is exactly what regulators want to see.
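A proportional response like this can be expressed as a small policy function. The two end cases below come straight from the paragraph above; the middle tier (`allow_and_monitor` when only one signal changes) and the field names are assumptions added to make the example complete.

```python
def access_decision(session: dict, profile: dict) -> dict:
    """Compare a login against the customer's known devices and countries."""
    new_device = session["device_id"] not in profile["known_devices"]
    new_country = session["country"] not in profile["usual_countries"]

    if new_device and new_country:
        action = "step_up"            # extra verification challenge
    elif new_device or new_country:
        action = "allow_and_monitor"  # assumed middle tier, not from the text
    else:
        action = "allow"              # frictionless access

    # Returning the reasons alongside the action keeps the decision documented.
    return {
        "action": action,
        "reasons": {"new_device": new_device, "new_country": new_country},
    }

profile = {"known_devices": {"phone-1"}, "usual_countries": {"DE"}}
print(access_decision({"device_id": "phone-1", "country": "DE"}, profile))
print(access_decision({"device_id": "laptop-9", "country": "BR"}, profile))
```

Recording the reasons with every decision is what makes the response consistent and auditable rather than just automated.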

Applying Zero Trust Security Framework in Practice

The zero trust security framework in financial services rests on three principles: verify explicitly, use least privilege, and assume breach. The third principle is the one most institutions underinvest in.

Assuming breach means designing investigation and response workflows as if a compromise has already occurred, and using AI agents to detect the indicators before damage accumulates. For supply chain and logistics contexts, "Zero Trust in Global Trade" covers how the same framework applies outside pure banking environments.

This model directly addresses the 14-month synthetic identity fraud detection gap mentioned earlier. Continuous verification catches behavioral drift, like a customer whose spending pattern suddenly shifts to categories associated with bust-out fraud, months before a traditional investigation would surface it.
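Behavioral drift of this kind can be quantified by comparing how spending is distributed across categories in two time windows. The sketch below uses total variation distance, which is 0 for identical mixes and 1 for completely disjoint ones. The category names and the 0.5 review threshold are assumptions for illustration; a production system would tune both against its own data.

```python
from collections import Counter

def category_shares(transactions) -> dict:
    """Turn (category, amount) pairs into each category's share of total spend."""
    totals = Counter()
    for category, amount in transactions:
        totals[category] += amount
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {category: value / grand_total for category, value in totals.items()}

def spending_drift(baseline, recent) -> float:
    """Total variation distance between two spending mixes (0.0 to 1.0)."""
    p, q = category_shares(baseline), category_shares(recent)
    return 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in set(p) | set(q))

baseline = [("groceries", 400.0), ("fuel", 100.0)]
recent = [("electronics", 300.0), ("gift_cards", 200.0)]
score = spending_drift(baseline, recent)
needs_review = score > 0.5  # assumed threshold
print(score, needs_review)
```

A score near 1 means the customer's recent spending has almost nothing in common with their history, which is the kind of shift worth surfacing before a bust-out completes.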

What Financial Institutions Should Prioritize Now

The honest answer is that most institutions aren't behind on the technology. They're behind on the implementation discipline. AI agents for financial crime investigation are available and mature. The gap is usually in data quality, integration architecture, and staff training on how to work alongside agentic systems rather than in front of them.

Three things tend to unblock progress most reliably:

- Start with a specific high-volume workflow: Synthetic identity fraud detection or liveness detection are good starting points because the ROI is measurable and the baseline data is usually already available.

- Fix data quality before adding AI: An AI agent is only as good as the signals it can access. If your transaction monitoring system isn't capturing device fingerprints or behavioral biometrics, the agent has nothing to reason over.

- Design for auditability from day one: Regulators will ask how the AI reached its conclusions. Build explanation logging into the architecture before deployment, not after your first regulatory examination.
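For the auditability point, one possible shape for explanation logging is an append-only record per decision, with each record carrying a hash of the previous one so later edits are detectable. This is a sketch of one approach, not a regulatory requirement: the field names are hypothetical, and hash chaining is a design choice added here for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone

def _digest(record: dict) -> str:
    """Hash every field except the stored hash itself."""
    body = {k: v for k, v in record.items() if k != "hash"}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def log_decision(case_id: str, decision: str, evidence: dict,
                 reasoning: list, prev_hash: str = "") -> dict:
    record = {
        "case_id": case_id,
        "decision": decision,
        "evidence": evidence,    # the signals the agent looked at
        "reasoning": reasoning,  # ordered, human-readable steps
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prev_hash": prev_hash,  # links this record to the one before it
    }
    record["hash"] = _digest(record)
    return record

def verify_chain(records: list) -> bool:
    """Check that no record was altered and the links are intact."""
    prev = ""
    for record in records:
        if record["prev_hash"] != prev or record["hash"] != _digest(record):
            return False
        prev = record["hash"]
    return True

first = log_decision("case-1", "escalate", {"shared_device": True},
                     ["device seen on other applications"])
second = log_decision("case-2", "clear", {"shared_device": False},
                      ["no linked entities found"], prev_hash=first["hash"])
print(verify_chain([first, second]))
```

The useful property is that the reasoning is captured at decision time, in the same record as the evidence, rather than reconstructed when an examiner asks.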

Conclusion

AI agents for financial crime investigation are shifting from competitive differentiator to operational necessity. The fraud patterns that defined 2022 have been replaced by AI-generated synthetic identities, deepfakes aimed at bank verification flows, and bust-out schemes that exploit the gaps between disconnected rule-based systems.

Financial institutions that close those gaps by adopting biometric identity verification, continuous liveness detection controls, and agentic investigation workflows will detect fraud faster, reduce false positives, and improve KYC onboarding speed without compromising compliance. Those that don't will find their manual processes overwhelmed by attack volumes they weren't designed to handle.

The technology is ready. The implementation requires discipline around data quality, identity verification API integration, and regulatory auditability. Institutions that treat AI agents as a strategic layer rather than a point solution will be best positioned to keep pace with how financial crime evolves through the rest of this decade.

Frequently Asked Questions

**Identity verification fintech** refers to technology platforms and solutions that financial institutions use to confirm customer identities during onboarding and ongoing transactions. These systems combine document verification, biometric identity verification, sanctions screening, and behavioral analytics to meet KYC and AML regulatory requirements. Modern identity verification stacks are API-driven and can complete core checks in seconds rather than days.

**KYC onboarding speed** is the time it takes a financial institution to complete all identity verification and compliance checks required to activate a new customer account. Traditional manual KYC processes average 2 to 5 business days. AI-assisted systems that run document verification, liveness detection, and sanctions screening in parallel can reduce this to under 4 minutes for straightforward applications, significantly improving conversion rates and customer experience.

**Biometric identity verification** is the process of confirming a person's identity by matching their unique physical characteristics, such as facial features, fingerprints, or voice patterns, against a stored reference. In financial services, it typically involves comparing a live selfie or short video against a government-issued ID document. It is almost always paired with liveness detection to prevent spoofing attacks using photographs or pre-recorded video.

**Liveness detection fraud** occurs when attackers attempt to bypass biometric verification systems by presenting a photograph, pre-recorded video, or AI-generated deepfake instead of a live person. Modern liveness detection systems counter this by using 3D depth mapping, infrared sensors, and randomized challenge-response prompts that require real-time physical responses. No single technique eliminates the risk entirely, which is why layered controls including behavioral biometrics are recommended.

**Digital identity proofing** is the process by which a financial institution verifies that a person's claimed identity is legitimate using digital channels rather than in-person verification. According to NIST Special Publication 800-63, identity proofing involves collecting identity evidence, validating it against authoritative sources, and verifying the evidence belongs to the person presenting it. Financial services typically require Identity Assurance Level 2 or Level 3, depending on the risk profile of the product being offered.

An **identity verification API** is a programmatic interface that allows financial platforms to connect to external identity verification services, including document authentication, biometric matching, sanctions screening, and fraud signal databases. Modern identity verification API architectures are modular, allowing institutions to integrate specialized providers for each verification layer without building the capability in-house. This design also makes it easier to swap components when new fraud vectors emerge.

**Synthetic identity fraud** is a type of financial fraud where criminals construct a fake identity by combining real information, such as a valid Social Security Number, with fabricated details like a false name or address. Because part of the identity is real, synthetic identities often pass basic KYC checks. Effective synthetic identity fraud detection requires AI-powered pattern analysis across entity networks, looking for velocity signals, shared device fingerprints, and behavioral anomalies across multiple accounts simultaneously rather than evaluating each application in isolation.
